The AMD Threadripper 2990WX 32-Core and 2950X 16-Core Review

by Dr. Ian Cutress on August 13, 2018 9:00 AM EST

Core to Core to Core: Design Trade Offs

AMD’s approach to these big processors is to take a small repeating unit, such as the 4-core complex or 8-core silicon die (which has two complexes on it), and put several on a package to get the required number of cores and threads. The upside of this is that there are a lot of replicated units, such as memory channels and PCIe lanes. The downside is how cores and memory have to talk to each other.

In a standard monolithic (single) silicon design, each core is on an internal interconnect to the memory controller and can hop out to main memory with a low latency. The latency between the cores and the memory controller is usually low, and the routing mechanism (a ring or a mesh) determines bandwidth, latency, and scalability, so the final performance is usually a trade-off.

In a multiple silicon design, where each die has access to specific memory locally but also has access to other memory via a jump, we then come across a non-uniform memory architecture, known in the business as a NUMA design. Performance can be limited by this uneven memory delay, and software has to be ‘NUMA-aware’ in order to get the best latency and bandwidth. The extra jumps between silicon and memory controllers also burn some power.
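For readers who want to see how a NUMA layout looks from software, the following is a minimal sketch, assuming a Linux system that exposes its nodes under `/sys/devices/system/node`. The helper names `parse_cpulist` and `read_numa_nodes` are ours, chosen for illustration. How a given Threadripper shows up there depends on the firmware memory mode and the kernel, so treat the output as something to inspect rather than a fixed result.

```python
from pathlib import Path


def parse_cpulist(text):
    """Expand a Linux cpulist string such as '0-3,8-11' into a sorted list of CPU ids."""
    cpus = []
    for part in text.strip().split(","):
        if not part:
            continue  # an empty cpulist means the node has no CPUs
        if "-" in part:
            lo, hi = part.split("-")
            cpus.extend(range(int(lo), int(hi) + 1))
        else:
            cpus.append(int(part))
    return sorted(cpus)


def read_numa_nodes(root="/sys/devices/system/node"):
    """Return {node_id: [cpu ids]} read from sysfs, or {} if the path is absent."""
    nodes = {}
    base = Path(root)
    if not base.is_dir():
        return nodes  # e.g. non-Linux system or sysfs not mounted
    for node_dir in sorted(base.glob("node[0-9]*")):
        node_id = int(node_dir.name[len("node"):])
        nodes[node_id] = parse_cpulist((node_dir / "cpulist").read_text())
    return nodes


if __name__ == "__main__":
    for node_id, cpus in read_numa_nodes().items():
        print(f"node {node_id}: {len(cpus)} logical CPUs -> {cpus}")
```

NUMA-aware software uses this kind of mapping to keep a thread and the memory it touches on the same node where possible.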

We saw this before with the first generation Threadripper: having two active silicon dies on the package meant that there was a hop if the data required was in the memory attached to the other silicon. With the second generation Threadripper, it gets a lot more complex.

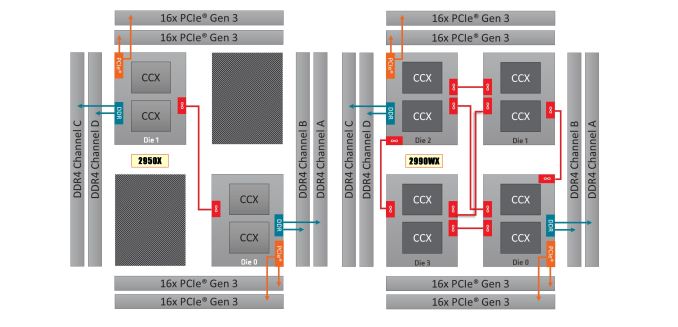

On the left is the 1950X/2950X design, with two active silicon dies. Each die has direct access to 32 PCIe lanes and two memory channels, which when combined gives 60/64 PCIe lanes and four memory channels. Accesses from a core to the memory or PCIe attached to its own die are faster than accesses that go off-die.

For the 2990WX and 2970WX, the two ‘inactive’ dies are now enabled, but do not have extra access to memory or PCIe. For these cores, there is no ‘local’ memory or connectivity: every access to main memory requires an extra hop. There are also extra die-to-die interconnects using AMD’s Infinity Fabric (IF), which consume power.
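The bookkeeping in the last two paragraphs can be written down as a small model. This is a sketch under stated assumptions, not AMD's description of the package: the `Die` class, the die names, and the `memory_hops` simplification (which counts only the minimum number of off-die hops a core needs to reach any main memory) are all ours.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Die:
    name: str             # illustrative label, not an AMD designation
    memory_channels: int  # memory channels wired directly to this die
    pcie_lanes: int       # PCIe lanes wired directly to this die

    @property
    def has_local_memory(self):
        return self.memory_channels > 0


def package_totals(dies):
    """Return (total memory channels, total PCIe lanes) across the package."""
    return (sum(d.memory_channels for d in dies),
            sum(d.pcie_lanes for d in dies))


def memory_hops(die):
    """Minimum extra hops to reach main memory: 0 if local, 1 otherwise (simplified)."""
    return 0 if die.has_local_memory else 1


# Two active dies, each with two channels and 32 lanes (per the article).
TR_2950X = [Die("die0", 2, 32), Die("die1", 2, 32)]
# The WX parts enable two more dies that have no memory or PCIe of their own.
TR_2990WX = TR_2950X + [Die("die2", 0, 0), Die("die3", 0, 0)]

if __name__ == "__main__":
    for label, package in (("2950X", TR_2950X), ("2990WX", TR_2990WX)):
        channels, lanes = package_totals(package)
        hops = [memory_hops(d) for d in package]
        # 64 lanes in total; the article's "60/64" reflects 60 being exposed to the platform.
        print(f"{label}: {channels} channels, {lanes} lanes, hops per die = {hops}")
```

The model makes the trade-off visible: going from the 2950X to the 2990WX doubles the die count while the channel and lane totals stay the same, and half of the dies start every memory access one hop away.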

The reason that these extra cores do not have direct access is down to the platform: the TR4 platform for the Threadripper processors is set at quad-channel memory and 60 PCIe lanes. If the other two dies had their memory and PCIe enabled, it would require new motherboards and memory arrangements.

Users might ask, well can we not change it so each silicon die has one memory channel, and one set of 16 PCIe lanes? The answer is that yes, this change could occur. However the platform is somewhat locked in how the pins and traces are managed on the socket and motherboards. The firmware is expecting two memory channels per die, and also for electrical and power reasons, the current motherboards on the market are not set up in this way. This is going to be an important point when we get into the performance in the review, so keep this in mind.

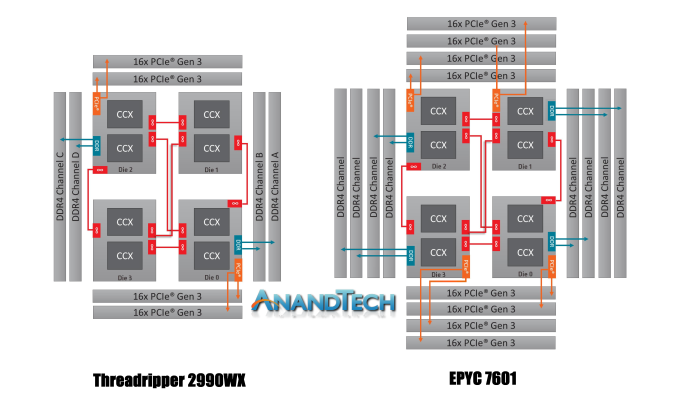

It is worth noting that this new second generation of Threadripper and AMD’s server platform, EPYC, are cousins. They are both built from the same package layout and socket, but EPYC has all the memory channels (eight) and all the PCIe lanes (128) enabled.

Where Threadripper 2 falls down on having some cores without direct access to memory, EPYC has direct memory available everywhere. This has the downside of requiring more power, but it offers a more homogeneous core-to-core traffic layout.

Going back to Threadripper 2, it is important to understand how the chip is going to be loaded. We confirmed this with AMD, but for the most part the scheduler will load up the cores that are directly attached to memory first, before using the other cores. What happens is that each core has a priority weighting, based on performance, thermals, and power – the ones closest to memory get a higher priority, however as those fill up, the cores nearby get demoted due to thermal inefficiencies. This means that while the CPU will likely fill up the cores close to memory first, it will not be a simple case of filling up all of those cores first – the system may get to 12-14 cores loaded before going out to the two new bits of silicon.
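To illustrate why the fill order is not simply "all memory-attached cores first", here is a toy model of a priority-weighted scheduler. Everything numeric in it is an assumption made up for the illustration: the base priorities (10 and 4), the one-point penalty per loaded core on the same die, and the die names. The real weighting of performance, thermals, and power is not described in enough detail to reproduce.

```python
def fill_order(n_threads, dies, penalty=1):
    """Return the die name chosen for each successive thread.

    dies: list of (name, base_priority, core_count) tuples.
    A die's current priority is base_priority - penalty * cores_already_loaded,
    a stand-in for the thermal demotion described above. Ties go to the die
    listed first.
    """
    loaded = [0] * len(dies)
    order = []
    for _ in range(n_threads):
        best, best_prio = None, None
        for i, (_name, base, cores) in enumerate(dies):
            if loaded[i] >= cores:
                continue  # die is full
            prio = base - penalty * loaded[i]
            if best is None or prio > best_prio:
                best, best_prio = i, prio
        if best is None:
            break  # every core in the package is loaded
        loaded[best] += 1
        order.append(dies[best][0])
    return order


# Hypothetical weights: memory-attached dies start higher than compute-only dies.
DIES = [("mem0", 10, 8), ("mem1", 10, 8), ("cmp0", 4, 8), ("cmp1", 4, 8)]

if __name__ == "__main__":
    order = fill_order(32, DIES)
    first_spill = next(i for i, name in enumerate(order) if name.startswith("cmp"))
    print(f"threads placed on memory-attached dies before the first spill: {first_spill}")
```

With these made-up weights the first thread lands on a compute-only die after 14 cores are loaded, which is in the same range as the 12-14 quoted above; different weights would move that point, so the number itself carries no meaning beyond the illustration.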

171 Comments


HStewart - Monday, August 13, 2018 - link

"I highly highly doubt that Intel would postpone 10nm just to “shut down AMD”"

Probably right - AMD is not that big of threat in the real world - just go in to BestBuy - yes they have some game machines. a very few laptops including older generations

Spunjji - Tuesday, August 14, 2018 - link

That is some impressive goalpost moving that you just did *on your own claim*.

Intel's issues have nothing to do with AMD, but they will allow a resurgent AMD to become more competitive over time. Pointing to how little of a threat AMD are *right now* and/or making up weird conspiracy theories that place Intel as the only mover and shaker in the entire industry won't change that.

Relic74 - Wednesday, August 29, 2018 - link

Consumer-based computers are but a small portion of the market. Servers, millions of them needed every year to fill the demand needed by, well, everyone who hosts a site, government, networking farms a mile long, etc. The server market is huge and is growing almost faster than tech companies can provide. It's why I always thought Apple getting out of the server market was kind of a stupid idea. All of the servers they ever created were sold before they were even created. I guess the margins were too small for them, greedy bastards. Why only make double the profits when you can make 5x with consumer products. Seriously, an iPhone X costs less than $200 to make now, it used to be $250 but now it's $200, greedy bastards. Oh, did you know it costs Apple less than $3 to go from 64GB to 128GB, ugh.

Ozymankos - Sunday, January 27, 2019 - link

it matters what you consider as costs

do you calculate the shipping costs, the marketing costs, the salaries of everyone involved, the making of new facilities?

Eastman - Tuesday, August 14, 2018 - link

Intel isn't finished. They are still king of single thread performance. We will see if Zen 2 will surpass Intel's single thread performance.

seanlivingstone - Monday, August 13, 2018 - link

Do you know that Jensen Huang is Lisa Su's uncle? Intel is done.

f1nalpr1m3 - Thursday, October 25, 2018 - link

Expected Results vs Actual:

| Stats | Expected Q3 2018 Results | Actual Q3 2018 Results |
|---|---|---|
| Revenue ($B) | $18.1 | $19.2 |
| EPS | $1.15 | $1.40 |

UnNameless - Tuesday, August 14, 2018 - link

Sadly this is true. AMD tries hard and in the most part succeeds. Intel frankly showed some kind of panic for the niche market of top end processors with that chilled fiasco of a 5 GHz CPU. This means AMD puts quite some pressure onto them

Outlander_04 - Tuesday, August 14, 2018 - link

AMD have bounced back very quickly. Mostly because people are starting to accept how over priced intel have been

https://wccftech.com/intel-coffee-lake-amd-ryzen-c...

twtech - Wednesday, August 15, 2018 - link

I don't think branding issues is going to stop purchases of AMD chips when they are the best fit for a particular use-case, but the lack of direct access to memory for half of the cores in the 2990wx is going to keep it from being the knockout punch for HEDT that it could have been.

Looking at these benchmark results, that has seriously gimped the performance of the 32-core TR to the point where it is slower than the 16 core in some threaded workloads.

Sure, you can just go ahead and buy the 16-core 2950x instead, but then you're reduced back to being in 7980xe territory - albeit at a cheaper price point - but the point is, it's not the clear win that a relatively high clocked 32-core CPU probably could have been without the memory access issue.