The AMD Radeon VII Review: An Unexpected Shot At The High-End

by Nate Oh on February 7, 2019 9:00 AM EST

For AMD’s Radeon Technologies Group, 2018 was a bit of a breather year. After launching the Polaris architecture in 2016 and the Vega architecture in 2017, for 2018 AMD set about to enjoy their first full year of Vega. Instead of having to launch a third architecture in three years, the company would focus on further expanding the family by bringing Vega's laptop and server variants to market. And while AMD's laptop efforts have gone in an odd direction, their Radeon Instinct server efforts have put some pep back in their figurative step, giving the company the claim to the first 7nm GPU.

Following the launch of a late-generation product refresh in November, in the form of the Radeon RX 590, we had expected AMD's consumer side to be done for a while. Instead, AMD made a rather unexpected announcement at CES 2019 last month: the company would be releasing a new high-end consumer card, the Radeon VII (Seven). Based on their aforementioned server GPU and positioned as their latest flagship graphics card for gamers and content creators alike, Radeon VII would once again be AMD’s turn to court enthusiast gamers. Now launching today – on the 7th, appropriately enough – we're taking a look at AMD's latest card, to see how the Radeon VII measures up to the challenge.

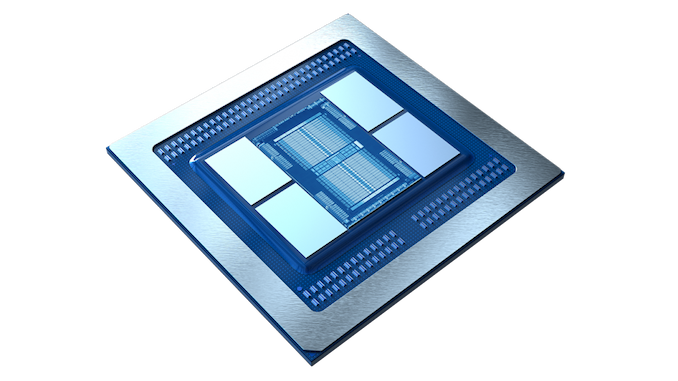

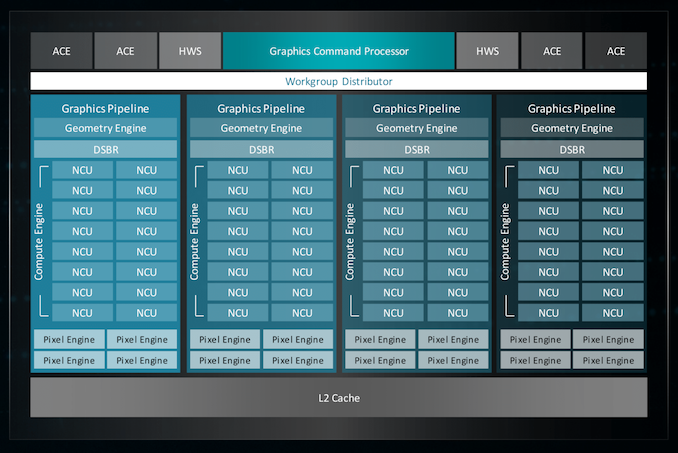

On the surface, the Radeon VII would seem to be straightforward. The silicon underpinning the card is AMD's Vega 20 GPU, a derivative of the original Vega 10 that has been enhanced for scientific compute and machine learning, and built on TSMC's cutting-edge 7nm process for improved performance. An important milestone for AMD's server GPU efforts – it's essentially their first high-end server-class GPU since Hawaii all the way back in 2013 – AMD has been eager to show off Vega 20 throughout the later part of its bring-up, as this is the GPU at the heart of AMD’s relatively new Radeon Instinct MI50 and MI60 server accelerators.

First and foremost designed for servers then, Vega 20 is not the class of GPU that could cheaply make its way to consumers. Or at least, that would seem to have been AMD's original thought. But across the aisle, something unexpected has happened: NVIDIA hasn't moved the meter very much in terms of performance-per-dollar. The new Turing-based GeForce RTX cards instead are all about features, looking to usher in a new paradigm of rendering games with real-time raytracing effects, and in the process allocating large parts of the already-large Turing GPUs to this purpose. The end result has been relatively high prices for the GeForce RTX 20 series cards, all the while their performance gains in conventional games are much less than the usual generational uplift.

Faced with a less hostile pricing environment than many were first expecting, AMD has decided to bring Vega 20 to consumers after all, dueling with NVIDIA at one of these higher price points. Hitting the streets at $699, the Radeon VII squares up with the GeForce RTX 2080 as the new flagship Radeon gaming card.

AMD Radeon Series Specification Comparison

| | AMD Radeon VII | AMD Radeon RX Vega 64 | AMD Radeon RX 590 | AMD Radeon R9 Fury X |
|---|---|---|---|---|
| Stream Processors | 3840 (60 CUs) | 4096 (64 CUs) | 2304 (36 CUs) | 4096 (64 CUs) |
| ROPs | 64 | 64 | 32 | 64 |
| Base Clock | 1400MHz | 1247MHz | 1469MHz | N/A |
| Boost Clock | 1750MHz | 1546MHz | 1545MHz | 1050MHz |
| Memory Clock | 2.0Gbps HBM2 | 1.89Gbps HBM2 | 8Gbps GDDR5 | 1Gbps HBM |
| Memory Bus Width | 4096-bit | 2048-bit | 256-bit | 4096-bit |
| VRAM | 16GB | 8GB | 8GB | 4GB |
| Single Precision | 13.8 TFLOPS | 12.7 TFLOPS | 7.1 TFLOPS | 8.6 TFLOPS |
| Double Precision | 3.5 TFLOPS (1/4 rate) | 794 GFLOPS (1/16 rate) | 445 GFLOPS (1/16 rate) | 538 GFLOPS (1/16 rate) |
| Board Power | 300W | 295W | 225W | 275W |
| Reference Cooling | Open-air triple-fan | Blower | N/A | AIO CLC |
| Manufacturing Process | TSMC 7nm | GloFo 14nm | GloFo/Samsung 12nm | TSMC 28nm |
| GPU | Vega 20 (331 mm²) | Vega 10 (495 mm²) | Polaris 30 (232 mm²) | Fiji (596 mm²) |
| Architecture | Vega (GCN 5) | Vega (GCN 5) | GCN 4 | GCN 3 |
| Transistor Count | 13.2B | 12.5B | 5.7B | 8.9B |
| Launch Date | 02/07/2019 | 08/14/2017 | 11/15/2018 | 06/24/2015 |
| Launch Price | $699 | $499 | $279 | $649 |

Looking at our specification table, Radeon VII ships with a "peak engine clock" of 1800MHz, while the official boost clock is 1750MHz. This compares favorably to RX Vega 64's peak engine clock, which was just 1630MHz, so AMD has another 10% or so in peak clockspeed to play with. And thanks to an open air cooler and a revised SMU, Radeon VII should be able to boost to and sustain its higher clockspeeds a little more often still. So while AMD's latest card doesn't add more ROPs or CUs (it's actually a small drop from the RX Vega 64), it gains throughput across the board.
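To make the clockspeed and throughput relationship concrete, here is a rough back-of-the-envelope sketch using only figures from the specification table and the paragraph above. It assumes the usual convention for peak GPU ratings, in which each stream processor retires one fused multiply-add (counted as 2 FLOPs) per clock; the function name is ours, purely for illustration.

```python
# Assumption: 1 FMA = 2 FLOPs per stream processor per clock, the common
# convention for theoretical peak ratings. Not a measured figure.
FLOPS_PER_SP_PER_CLOCK = 2


def peak_fp32_tflops(stream_processors: int, clock_mhz: float) -> float:
    """Theoretical peak single-precision throughput in TFLOPS."""
    return stream_processors * FLOPS_PER_SP_PER_CLOCK * clock_mhz * 1e6 / 1e12


# Radeon VII: 3840 SPs at the 1800MHz peak engine clock
radeon_vii_peak = peak_fp32_tflops(3840, 1800)
# Radeon VII at its official 1750MHz boost clock
radeon_vii_boost = peak_fp32_tflops(3840, 1750)
# RX Vega 64: 4096 SPs at its 1546MHz boost clock
vega64_boost = peak_fp32_tflops(4096, 1546)

# Peak engine clock advantage over RX Vega 64 (1800MHz vs. 1630MHz)
clock_gain = 1800 / 1630 - 1

print(f"Radeon VII @ 1800MHz: {radeon_vii_peak:.1f} TFLOPS")
print(f"Radeon VII @ 1750MHz: {radeon_vii_boost:.1f} TFLOPS")
print(f"RX Vega 64 @ 1546MHz: {vega64_boost:.1f} TFLOPS")
print(f"Peak clock gain: {clock_gain:.1%}")
```

Under these assumptions, the table's 13.8 TFLOPS figure lines up with the 1800MHz peak engine clock rather than the 1750MHz boost clock, and the roughly 10% clock advantage more than offsets the loss of four CUs.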

However, if anything, the biggest change compared to the RX Vega 64 is that AMD has doubled their memory size and more than doubled their memory bandwidth. This comes courtesy of the 7nm die shrink, which sees AMD's latest GPU come in with a relatively modest die size of 331mm². The extra space has given AMD room on their interposer for two more HBM2 stacks, allowing for more VRAM and a wider memory bus. AMD has also been able to turn the memory clockspeed up a bit as well, from 1.89 Gbps/pin on the RX Vega 64 to a flat 2 Gbps/pin for the Radeon VII.
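The "more than doubled" bandwidth claim follows directly from the bus width and per-pin data rate. As a quick sketch (the helper name is ours, and the inputs are the bus widths and data rates quoted in this article), peak bandwidth is simply bus width times per-pin rate, divided by 8 to convert bits to bytes:

```python
def memory_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak memory bandwidth in GB/s from bus width and per-pin data rate."""
    return bus_width_bits * data_rate_gbps / 8  # 8 bits per byte


# Radeon VII: 4096-bit bus (four HBM2 stacks) at 2.0 Gbps/pin
radeon_vii_bw = memory_bandwidth_gbs(4096, 2.0)
# RX Vega 64: 2048-bit bus (two HBM2 stacks) at 1.89 Gbps/pin
vega64_bw = memory_bandwidth_gbs(2048, 1.89)

ratio = radeon_vii_bw / vega64_bw

print(f"Radeon VII: {radeon_vii_bw:.0f} GB/s")
print(f"RX Vega 64: {vega64_bw:.0f} GB/s")
print(f"Ratio: {ratio:.2f}x")
```

Doubling the bus width accounts for a flat 2x; the small memory clock bump is what pushes the total to a little over 2.1x, or roughly 1 TB/s versus roughly 484 GB/s.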

Interestingly, going by its base specifications, the Radeon VII is essentially a Radeon Instinct MI50 at heart. So for AMD, there's potential to cannibalize Instinct sales if the Radeon VII's performance is too good for professional compute users. As a result, AMD has cut back on some of the chip's features just a bit to better differentiate the products. We'll go into more detail a bit later, but chief among these cutbacks are that the card operates at a less-than-native FP64 rate, loses its full-chip ECC support, and naturally for a consumer product, uses the Radeon Software gaming drivers instead of the professional Instinct driver stack.
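Even at a reduced rate, the FP64 figures in our specification table put the Radeon VII well ahead of AMD's previous consumer cards. The short sketch below only re-derives the table's double precision numbers from its single precision numbers and listed rate fractions; the function name is hypothetical and nothing here is measured.

```python
def fp64_tflops(fp32_tflops: float, rate_divisor: int) -> float:
    """Peak FP64 throughput given peak FP32 and a 1/N FP64 rate."""
    return fp32_tflops / rate_divisor


# Table values: 13.8 TFLOPS FP32 at a 1/4 FP64 rate
radeon_vii_fp64 = fp64_tflops(13.8, 4)
# Table values: 12.7 TFLOPS FP32 at a 1/16 FP64 rate
vega64_fp64 = fp64_tflops(12.7, 16)

advantage = radeon_vii_fp64 / vega64_fp64

print(f"Radeon VII FP64: {radeon_vii_fp64:.2f} TFLOPS")
print(f"RX Vega 64 FP64: {vega64_fp64 * 1000:.0f} GFLOPS")
print(f"Advantage: {advantage:.1f}x")
```

The results round to the table's 3.5 TFLOPS and 794 GFLOPS entries, a gap of a bit more than 4x on paper.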

Of course, any time you're talking about putting a server GPU into a consumer or prosumer card, you're talking about the potential for a powerful card, and this certainly applies to the Radeon VII. Ultimately, the angle that AMD is gunning for with their latest flagship card is on the merit of its competitive performance, further combined with its class-leading 16GB of HBM2 memory. As one of AMD's few clear-cut specification advantages over the NVIDIA competition, VRAM capacity is a big part of AMD's marketing angle; they are going to be heavily emphasizing content creation and VRAM-intensive gaming. Also new to this card and something AMD will be keen to call out is their triple-fan cooler, replacing the warmly received blower on the Radeon RX Vega 64/56 cards.

Furthermore, as a neat change, AMD is throwing their hat into the retail ring as a board vendor and directly selling the new card at the same $699 MSRP. Given that AIBs are also launching their branded reference cards today, it's an option for avoiding inflated launch prices.

Meanwhile, looking at the competitive landscape, there are a few items to tackle today. A big part of the mix is (as has become common lately) a game bundle. The ongoing Raise the Game Fully Loaded pack sees Devil May Cry 5, The Division 2, and Resident Evil 2 included for free with the Radeon VII, RX Vega and RX 590 cards. Meanwhile the RX 580 and RX 570 cards qualify for two out of the three. Normally, a bundle would be a straightforward value-add against a direct competitor – in this case, the RTX 2080 – but NVIDIA has their own dueling Game On bundle with Anthem and Battlefield V. In a scenario where the Radeon VII is expected to trade blows with the RTX 2080 rather than win outright, these value-adds become more and more important.

The launch of the Radeon VII also marks the first product launch since the recent shift in the competitive landscape for variable refresh monitor technologies. Variable refresh rate monitors have turned into a must-have for gamers, and since the launch of variable refresh technology earlier this decade, there's been a clear split between AMD and NVIDIA cards. AMD cards have supported VESA Adaptive Sync – better known under AMD's FreeSync branding – while NVIDIA desktop cards have only supported their proprietary G-Sync. But last month, NVIDIA made the surprise announcement that their cards would support VESA Adaptive Sync on the desktop, under the label of 'G-Sync Compatibility.' Details are sparse on how this program is structured, but at the end of the day, adaptive sync is usable in NVIDIA drivers even if a FreeSync panel isn't 'G-Sync Compatible' certified.

The net result is that while NVIDIA's announcement doesn't hinder AMD as far as features go, it does undermine AMD's FreeSync advantage – all of the cheap VESA Adaptive Sync monitors that used to only be useful on AMD cards are now potentially useful on NVIDIA cards as well. AMD of course has been quite happy to emphasize the "free" part of FreeSync, so as a weapon to use against NVIDIA, it has been significantly blunted. AMD's official line is one of considering this a win for FreeSync, and for freedom of consumer choice, though the reality is often a little more unpredictable.

The launch of the Radeon VII and its competitive positioning against the GeForce RTX 2080 means that AMD also has to crystallize their stance on the current feature gap between their cards and NVIDIA's latest Turing machines. To this end, AMD's position has remained the same on DirectX Raytracing (DXR) and AI-based image quality/performance techniques such as DLSS. In short, AMD's argument is that the performance hit and price premium for these features aren't worth the overall image quality difference. In the meantime, AMD isn't standing still, and along with DXR fallback drivers, they are working on support for WinML and DirectML for their cards. The risk to AMD, of course, is that if DXR or NVIDIA's DLSS efforts end up taking off quickly, then the feature gap is going to become more than a theoretical annoyance.

All told, pushing out a large 7nm gaming GPU for consumers now is a very aggressive move so early in this process' lifecycle, especially as on a cyclical basis, Q1 is typically flat-to-down and Q2 is down. But in context, AMD doesn't have that much time to wait and see. The only major obstacle would be pricing it to be acceptable for consumers.

That brings us to today's launch. For $699, NVIDIA has done the price-bracket shifting already, on terms of dedicated hardware for accelerating raytracing and machine learning workloads. For the Radeon VII, the terms revolve around 16GB HBM2 and prosumer/content creator value. All that remains is their gaming performance.

2/2019 GPU Pricing Comparison

| AMD | Price | NVIDIA |
|---|---|---|
| | $1299 | GeForce RTX 2080 Ti (Game On Bundle) |
| Radeon VII (Raise the Game Bundle) | $699/$719 | GeForce RTX 2080 (Game On Bundle) |
| | $499 | GeForce RTX 2070 (Game On Bundle, 1 game) |
| Radeon RX Vega 64 OR Radeon RX Vega 56 (Raise the Game Bundle) | $399 | |
| | $349 | GeForce RTX 2060 (Game On Bundle, 1 game) |

289 Comments


schizoide - Thursday, February 7, 2019 - link

Sure it does, at the bottom-end. It basically IS an instinct mi50 on the cheap.

GreenReaper - Thursday, February 7, 2019 - link

Maybe they weren't selling so well so they decided to repurpose before Navi comes out and makes it largely redundant.

schizoide - Thursday, February 7, 2019 - link

IMO, what happened is pretty simple. Nvidia's extremely high prices allowed AMD to compete with a workstation-class card. So they took a swing.

eva02langley - Friday, February 8, 2019 - link

My take too. This card was never intended to be released. It just happened because the RTX 2080 is at 700+$.

In Canada, the RVII is 40$ less than the cheapest 2080 RTX, making it the better deal.

Manch - Thursday, February 7, 2019 - link

It is but it's slightly gimped perf wise to justify the price diff.

sing_electric - Thursday, February 7, 2019 - link

Anyone else think that the Mac Pro is lurking behind the Radeon VII release? Apple traditionally does a March 2019 event where they launch new products, so the timing fits (especially since there's little reason to think the Pro would need to be launched in time for the Q4 holiday season).

-If Navi is "gamer-focused" as Su has hinted, that may well mean GDDR6 (and rays?), so wouldn't be of much/any benefit to a "pro" workload

-This way Apple can release the Pro with the GPU as a known quantity (though it may well come in a "Pro" variant w/say, ECC and other features enabled)

-Maybe the timing was moved up, and separated from the Apple launch, in part to "strike back" at the 2080 and insert AMD into the GPU conversation more for 2019.

The timeline and available facts seem to fit pretty well here...

tipoo - Thursday, February 7, 2019 - link

I was thinking a better binned die like VII for the iMac Pro.

Tbh the Mac Pro really needs to support CUDA/Nvidia if it's going to be a serious contender for scientific compute.

sing_electric - Thursday, February 7, 2019 - link

I mean, sure? but I'm not sure WHAT market Apple is going after with the Mac Pro anyways... I mean, would YOU switch platforms (since anyone who seriously needs the performance necessary to justify the price tag in a compute-heavy workload has almost certainly moved on from their 2013 Mac Pro) with the risk that Apple might leave the Pro to languish again?

There's certainly A market for it, I'm just not sure what the market is.

repoman27 - Thursday, February 7, 2019 - link

The Radeon VII does seem to be one piece of the puzzle, as far as the new Mac Pro goes. On the CPU side Apple still needs to wait for Cascade Lake Xeon W if they want to do anything more than release a modular iMac Pro though. I can't imagine Apple will ever release another dual-socket Mac, and I'd be very surprised if they switched to AMD Threadripper at this point. But even still, they would need XCC based Xeon W chips to beat the iMac Pro in terms of core count. Intel did release just such a thing with the Xeon W 3175X, but I'm seriously hoping for Cascade Lake over Skylake Refresh for the new Mac Pro. That would push the release timeline out to Q3 or Q4 though.

The Radeon VII also appears to lack DisplayPort DSC, which means single cable 8K external displays would be a no-go. A new Mac Pro that could only support Thunderbolt 3 displays up to 5120 x 2880, 10 bits per color, at 60 Hz would almost seem like a bit of a letdown at this point. Apple is in a bit of an awkward position here anyway, as ICL-U will have integrated Thunderbolt 3 and an iGPU that supports DP 1.4a with HBR 3 and DSC when it arrives, also around the Q3 2019 timeframe. I'm not sure Intel even has any plans for discrete Thunderbolt controllers after Titan Ridge, but with no PCIe 4.0 on Cascade Lake, there's not much they can even do to improve on it anyway.

So maybe the new Mac Pro is a Q4 2019 product and will have Cascade Lake Xeon W and a more pro-oriented yet Navi-based GPU?

sing_electric - Thursday, February 7, 2019 - link

Possibly, but I'm not 100% sure that they need to beat the iMac Pro on core count to have a product. More RAM (with a lot of slots that a user can get to) and a socketed CPU with better thermals than you can get on the back of a display might do it. I'd tend to think that moving to Threadripper (or EPYC) is a pipe dream, partly because of Thunderbolt support (which I guess, now that it's open, Apple could THEORETICALLY add, but it just seems unlikely at this point, particularly since there'd be things where an Intel-based iMac Pro might beat a TR-based Mac Pro, and Apple doesn't generally like complexities like that).

Also, I'd assumed that stuff like DSC support would be one of the changes between the consumer and Pro versions (and AMD's Radeon Pro WX 7100 already does DSC, so it's not like they don't have the ability to add it to pro GPUs).