The Intel Core i9-9990XE Review: All 14 Cores at 5.0 GHz

by Dr. Ian Cutress on October 28, 2019 10:00 AM EST

Chromium Compile: Windows VC++ Compile of Chrome

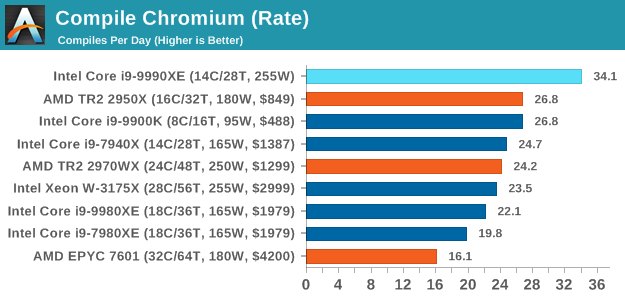

A large number of AnandTech readers are software engineers, looking at how the hardware they use performs. While compiling a Linux kernel is ‘standard’ for the reviewers who often compile, our test is a little more varied: we are using the Windows instructions to compile Chrome, specifically a Chrome 56 build from March 2017, as that was when we built the test. Google quite handily gives instructions on how to compile on Windows, along with a 400k-file download for the repo. This is by far one of our most popular benchmarks, and is a good measure of core performance, multithreading performance, and also memory accesses.

In our test, using Google’s instructions, we use the MSVC compiler and ninja developer tools to manage the compile. As you may expect, the benchmark is variably threaded, with a mix of DRAM requirements that benefit from faster caches. Data procured in our test is the time taken for the compile, which we convert into compiles per day. The benchmark takes anywhere from an hour on a fast single high-end desktop processor to several hours on the slowest offerings.

Prior to this test, the two CPUs battling it out for supremacy were the 16-core Ryzen Threadripper 2950X and the 8-core i9-9900K. By adding six more cores, a lot more frequency, and two more memory channels, the Core i9-9990XE plows through this test very easily, performing the compile in 42 minutes and 10 seconds, and is the only processor to break the 50-minute mark, let alone the 45-minute mark.
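Converting a compile time into compiles per day is simple division: the number of seconds in a day over the compile time in seconds. Here is a minimal sketch, assuming a full 24 hours of back-to-back compiles with no overhead between runs. The helper name `compiles_per_day` is hypothetical.

```python
def compiles_per_day(minutes: int, seconds: int) -> float:
    """Convert a single compile time (minutes, seconds) into compiles per day.

    Hypothetical helper: assumes compiles run back to back for a full 24 hours.
    """
    compile_seconds = minutes * 60 + seconds
    seconds_per_day = 24 * 60 * 60  # 86,400 s
    return seconds_per_day / compile_seconds


# Core i9-9990XE result quoted in the text: 42 minutes 10 seconds
print(round(compiles_per_day(42, 10), 2))  # 34.15
```

By the same arithmetic, the 50-minute and 45-minute marks correspond to 28.8 and 32.0 compiles per day.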

145 Comments


Batmeat - Monday, October 28, 2019

Agreed. This chip is auction-only. Expect to pay HUGE DOLLARS for this, assuming you even have access to the auctions.

mrvco - Monday, October 28, 2019

But the chip isn't selling for all that much at auction, apparently... I was expecting much more than 2849 euros if this thing really is the golden ticket for HFT. From a financial perspective, this isn't worth Intel's time or effort relative to letting top-tier partners and resellers buy up the whole supply of 9990XEs to do the binning on their own. Regardless, it's less expensive than a Super Bowl ad, I suppose, and probably more effective considering their target audience.

Cygni - Monday, October 28, 2019

It is a niche product for a niche market... one that the article talked about at length. "Bragging rights" doesn't come into play when it is a tool for making money.

willis936 - Monday, October 28, 2019

It just doesn't make sense. If single-threaded performance is king, then why do you need 14 cores running at 5 GHz? If multithreaded performance is king, then why not go wider? The low-latency case doesn't make sense. You could assemble multiple systems focused on single-threaded performance for less money than this 14-core auction-only chip costs. When people say it's only for bragging rights, they are not wrong.

edzieba - Monday, October 28, 2019

To GREATLY oversimplify HFT loads: you want as many cores as you can get, but those cores must respond as quickly as possible. You're effectively packet-watching: as soon as you see a packet you need to read it, determine if it is to be acted upon, determine how it is to be acted upon, and then respond, and you need to do it faster than everyone else. Everyone else has as close as possible to the same network latency (e.g. stock exchanges employ huge fibre loops to ensure every endpoint has the same light-speed lag), so if you can run at 5 GHz versus your competitors' 4 GHz, you can respond to any given packet before they can. It's only a hair faster, but if you're just barely first, you're still first, so your transaction request is the one the stock exchange acts upon and not everyone else's.

You want more cores because each core can run its own worker with its own algorithm (or more workers on the same algorithm to offset by packet arrival). You never want to be operating at a lower frequency than everyone else because it means NOTHING that you have 64 cores if every one of your cores is consistently too slow to beat out everyone else.

You want all those cores on one die because you need to remain consistent in response (i.e. not have one worker working at cross-purposes against another), and inter-socket or, worse, inter-machine latency will kill you dead in the race to respond.
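Here is the 5 GHz versus 4 GHz argument in numbers. If the per-packet decision path is purely compute-bound and takes a fixed number of clock cycles, its latency is that cycle count divided by the clock frequency. This is a back-of-the-envelope sketch. The 10,000-cycle figure is a hypothetical placeholder, not a measured HFT workload. Real response times also include network, NIC, and memory latency that do not scale with core clock.

```python
def response_time_ns(cycles: int, freq_ghz: float) -> float:
    """Time in nanoseconds to execute `cycles` clock cycles at `freq_ghz`.

    At f GHz one cycle lasts 1/f ns, so the total time is cycles / f.
    Assumes the work is purely compute-bound (an idealization).
    """
    return cycles / freq_ghz


CYCLES = 10_000  # hypothetical per-packet decision cost, not a real measurement

t_5ghz = response_time_ns(CYCLES, 5.0)
t_4ghz = response_time_ns(CYCLES, 4.0)
print(f"5 GHz: {t_5ghz:.0f} ns, 4 GHz: {t_4ghz:.0f} ns, lead: {t_4ghz - t_5ghz:.0f} ns")
# prints "5 GHz: 2000 ns, 4 GHz: 2500 ns, lead: 500 ns"
```

Under these assumptions, the 5 GHz core always finishes in 80% of the 4 GHz core's time (4/5), whatever the cycle count. Only the absolute lead in nanoseconds changes.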

Processwindow - Monday, October 28, 2019

If high frequency is the ultimate goal and money is not an issue, why stay with water cooling and not go straight to LN2? LN2 is cheap by Wall Street standards, and it would surely allow going higher than 6 GHz. By the way, in the great explanation you give regarding the need for high-frequency CPUs in HFT, I see no mention of optimizing the network card, whatever it is, or its firmware.

JoeyJoJo123 - Monday, October 28, 2019

>If high frequency is the ultimate goal and money is not an issue, why staying water.cooling.and not going straight to LN2. LN2 is cheap by wall street standards...

These kinds of machines are used by automated systems doing stock trading thousands of times per second. (It's why being a day-trader is absolutely worthless, because automated software can do your task thousands of times faster and more accurately given historical trends.) LN2 isn't "expensive", but this is a machine that needs to have the highest single-threaded performance possible, with as many cores as possible, yet still run 24/7 to keep up with the market. The system can never sleep, as we're talking potentially hundreds of thousands of dollars lost per few seconds of downtime.

And that's why LN2 isn't used: it evaporates, and while LN2-cooled systems can overclock higher than non-LN2 systems, they run at such a bleeding edge of instability that the setup in and of itself will cost the stock trader money whenever it inevitably hits a cold bug and needs to reboot, or if the thermal interface between the pot and the IHS cracks at ultra-low temperatures and the temps start to rise, or if there's condensation around ICs, or if the power delivery system starts to fail because it's been run over-spec for the last 50 days, etc.

Processwindow - Monday, October 28, 2019

What are you talking about? MRI tools in every big hospital in the world are working 24/7 with LN2. Every semiconductor fab in the world uses LN2 in its manufacturing environment. LN2 is not an exotic material. So you tell me you can spend tens of millions of dollars to gain a few ns in HFT, but you don't want to go beyond water cooling to gain a few GHz on your CPU? Something is wrong here.

Opencg - Monday, October 28, 2019

You clearly don't understand the difference between those machines and these. You just don't have a mind for it. Do not try to argue. Don't even try to think about it, man. You are useless.

The difference is that those machines were designed with LN2 cooling in mind for sustained operation. Computers were not. Having a skilled overclocker precisely control a benchmark for a (relatively) short time is nothing like having a machine designed to do it automatically and consistently over long periods. Developing a computer that could do this would not only be VERY expensive, but there is also a risk of it simply not working consistently enough in the end anyway, and you are back to square one.

Processwindow - Monday, October 28, 2019

You are the one clearly not understanding the situation. It seems financial companies involved in HFT can spend tens of millions of US$ to gain a few ns. But they wouldn't want to spend those same dollars to get 1 or 2 more GHz with a special custom LN2 or some other ultra-low-temperature cooling system? Something doesn't add up here.