The 64 Core Threadripper 3990X CPU Review: In The Midst Of Chaos, AMD Seeks Opportunity

by Dr. Ian Cutress & Gavin Bonshor on February 7, 2020 9:00 AM EST

AMD 3990X Against Prosumer CPUs

The first set of consumers that will be interested in this processor will be those looking to upgrade into the best consumer/prosumer HEDT package available on the market. The $3990 price is a high barrier to entry, but these users and individuals can likely amortize the cost of the processor over its lifetime. To that end, we’ve selected a number of standard HEDT processors that are near in terms of price/core count, as well as putting in the 8-core 5.0 GHz Core i9-9900KS and the 28-core unlocked Xeon W-3175X.

AMD 3990X Consumer Competition

| AnandTech | AMD 3990X | AMD 3970X | Intel 3175X | Intel i9-10980XE | AMD 3950X | Intel 9900KS |
|---|---|---|---|---|---|---|
| SEP | $3990 | $1999 | $2999 | $979 | $749 | $513 |
| Cores/T | 64/128 | 32/64 | 28/56 | 18/36 | 16/32 | 8/16 |
| Base Freq (MHz) | 2900 | 3700 | 3100 | 3000 | 3500 | 5000 |
| Turbo Freq (MHz) | 4300 | 4500 | 4300 | 4800 | 4700 | 5000 |
| PCIe | 4.0 x64 | 4.0 x64 | 3.0 x48 | 3.0 x48 | 4.0 x24 | 3.0 x16 |
| DDR | 4x 3200 | 4x 3200 | 6x 2666 | 4x 2933 | 2x 3200 | 2x 2666 |
| Max DDR | 512 GB | 512 GB | 512 GB | 256 GB | 128 GB | 128 GB |
| TDP | 280 W | 280 W | 255 W | 165 W | 105 W | 127 W |

The 3990X costs more than anything else at this level, and even in the most expensive consumer systems, $1000 could be the difference between fitting two GPUs or three. There have to be big upsides in moving from the 32-core to the 64-core.
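One rough way to frame the value question is price per core, using only the SEP and core counts from the table above. The following is a minimal sketch: the dictionary layout and the `price_per_core` helper are illustrative names, and the metric deliberately ignores frequency, memory channels, and platform cost.

```python
# SEP (USD) and core counts copied from the comparison table above.
# The structure and names here are illustrative, not from the review.
CPUS = {
    "AMD 3990X": (3990, 64),
    "AMD 3970X": (1999, 32),
    "Intel 3175X": (2999, 28),
    "Intel i9-10980XE": (979, 18),
    "AMD 3950X": (749, 16),
    "Intel 9900KS": (513, 8),
}


def price_per_core(sep_usd, cores):
    """Return the list price divided by the physical core count."""
    return sep_usd / cores


if __name__ == "__main__":
    # Print the CPUs from cheapest to most expensive per core.
    ranked = sorted(CPUS.items(), key=lambda kv: price_per_core(*kv[1]))
    for name, (sep, cores) in ranked:
        print(f"{name:18s} ${price_per_core(sep, cores):7.2f} per core")
```

By this measure the 3990X and the 3970X both come in at roughly $62 per core, so the extra spend only pays off if the software can actually use the additional 32 cores.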

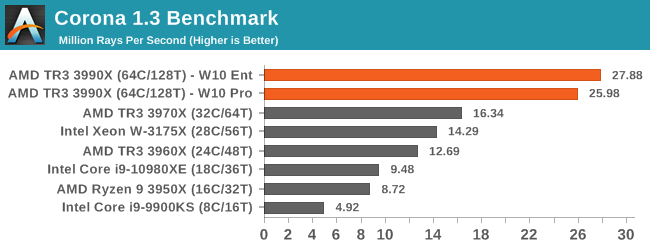

Corona is a classic 'more threads means more performance' benchmark, and while the 3990X doesn't quite get perfect scaling over the 32 core, it is almost there.

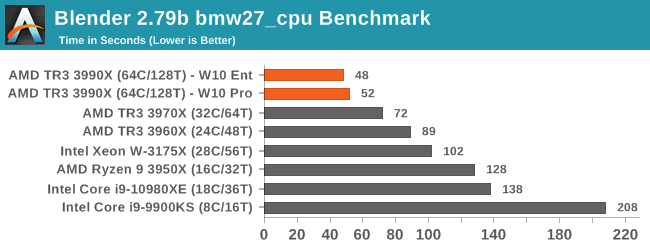

The 3990X scores new records in our Blender test, with sizeable speed-ups against the other TR3 hardware.

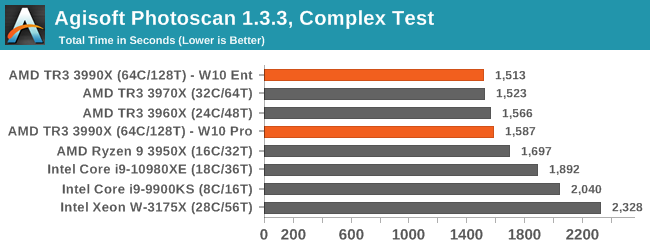

Photoscan is a variable threaded test, and the AMD CPUs still win here, although 24 core up to 64 core all perform within about a minute of each other in this 20 minute test. Intel's best consumer hardware is a few minutes behind.

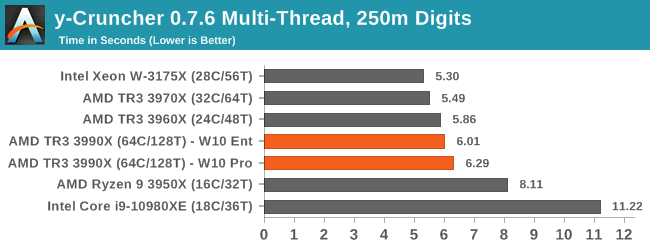

y-cruncher is an AVX-512 accelerated test, and so Intel's 28-core with AVX-512 wins here. Interestingly, the 128 threads of the 3990X get in the way; the spawn time of so many threads is likely adding to the overall time.

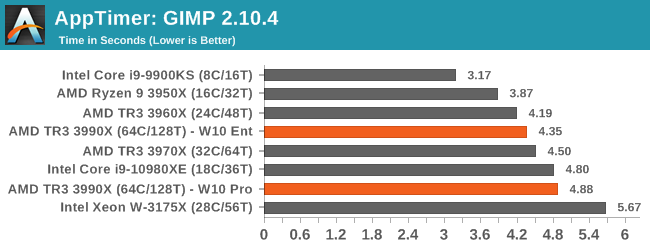

GIMP is a single-threaded test designed around opening the program, and Intel's 5.0 GHz chip is the best here. The 64-core hardware isn't that bad, although the W10 Enterprise data has the better result.

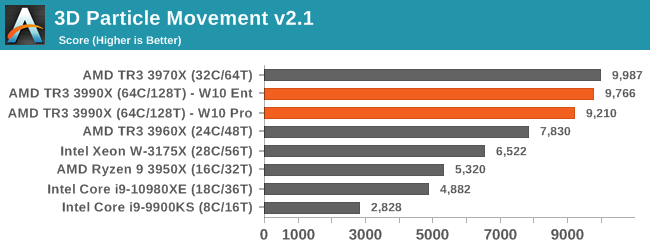

Without any hand-tuned code, the 64-core actually shows a slight deficit against the 32-core in 3DPM.

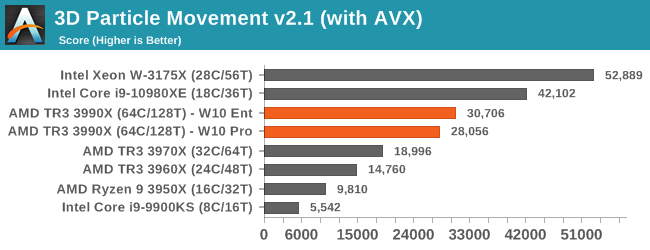

But when we crank in the hand tuned code, the AVX-512 CPUs storm ahead by a considerable margin.

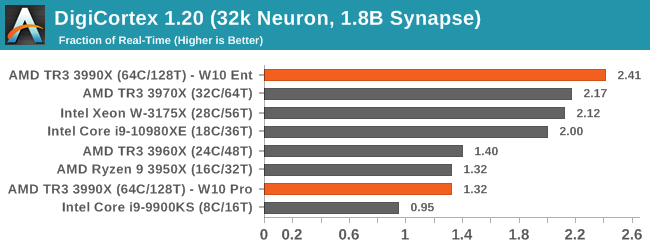

We covered Digicortex on the last page, but it seems that the different thread groups on W10 Pro are holding the 3990X back a lot. With SMT disabled, we score nearer 3x here.

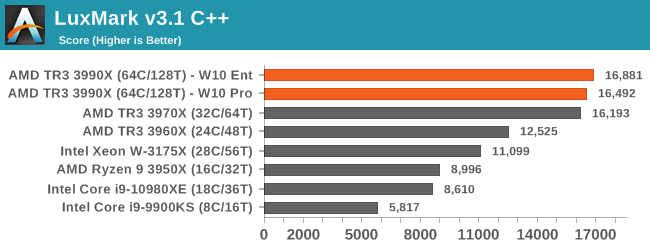

Luxmark is an AVX2 accelerated program, and having more cores here helps. But we see little gain from 32C to 64C.

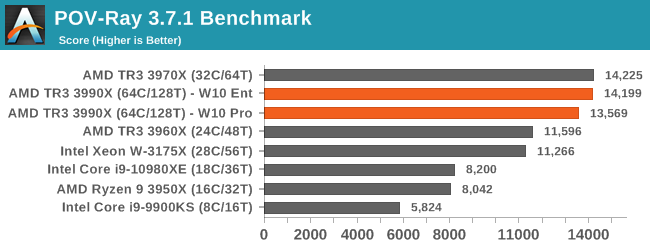

As we saw on the last page, POV-Ray preferred having SMT off for the 3990X, otherwise there's no benefit over the 32-core CPU.

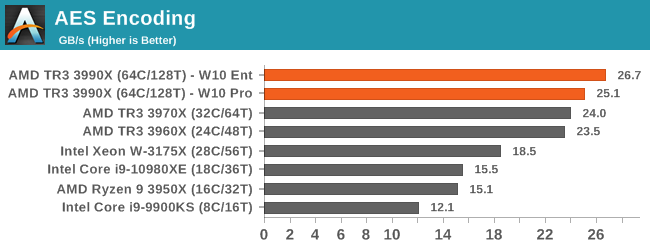

AES gets a slight bump over the 32 core, however not as much as the 2x price difference would have you believe.

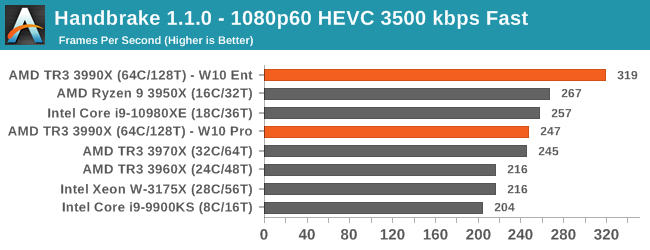

As we saw on the previous page, W10 Enterprise causes our Handbrake result to go way up, but on W10 Pro the 3990X loses ground to the 3950X.

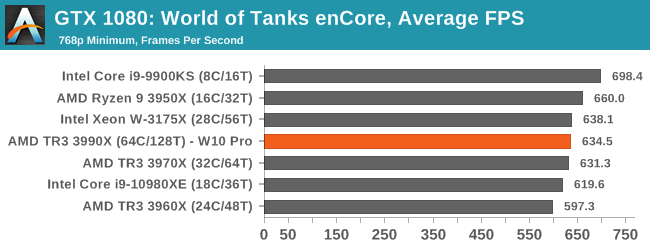

And how about a simple game test? We know 64 cores is overkill for games, so here's a CPU-bound test. There's not a lot in it between the 3990X and the 3970X, but Intel's high-frequency CPUs are the best here.

Verdict

There are a lot of situations where the jump from AMD's 32-core $1999 CPU, the 3970X, up to the 64-core $3990 CPU only gives the smallest tangible gain. That doesn't bode well. The benchmarks that do get the biggest gains however can get near perfect scaling, making the 3990X a fantastic upgrade. However those tests are few and far between. If these were the options, the smart money is on the 3970X, unless you can be absolutely clear that the software you run can benefit from the extra cores.
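To see why the gain from 32 to 64 cores swings between "near perfect" and "smallest tangible", it can help to run the numbers through Amdahl's law. This is a textbook model brought in for illustration, not an analysis from the review; the parallel fractions below are hypothetical rather than measured, and the model ignores clock speed differences, SMT, Windows thread groups, and memory bandwidth.

```python
def amdahl_speedup(parallel_fraction, cores):
    """Speedup over a single core under Amdahl's law."""
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


def gain_32_to_64(parallel_fraction):
    """Ratio of the modelled 64-core speedup to the 32-core speedup."""
    return amdahl_speedup(parallel_fraction, 64) / amdahl_speedup(parallel_fraction, 32)


if __name__ == "__main__":
    # Hypothetical parallel fractions, chosen only to show the trend.
    for p in (0.90, 0.95, 0.99, 0.999, 1.0):
        print(f"parallel fraction {p:.3f}: 64C is {gain_32_to_64(p):.2f}x the 32C")
```

In this model only a workload that is almost entirely parallel gets close to the 2x that the core count suggests, which is consistent with the advice to check that your software scales before paying for 64 cores.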

279 Comments

HStewart - Friday, February 7, 2020 - link

One note on render farms, in the past I created my own render farms and it was better to use multiple machines than cores because of dependency of disk io speed can be distributed. Yes it is a more expensive option but disk io serious more time consuming than processor time.

Not content creation workstation is a different case - and more cores would be nice.

MattZN - Friday, February 7, 2020 - link

SSDs and NVMe drives have pretty much removed the write bottleneck for tasks such as rendering or video production. Memory has removed read bottleneck. There are very few of these sorts of workloads that are dependent on I/O any more. rendering, video conversion, bulk compiles.... on modern systems there is very little I/O involved relative to the cpu load.

Areas which are still sensitive to I/O bandwidth would include interactive video editing, media distribution farms, and very large databases. Almost nothing else.

-Matt

HStewart - Saturday, February 8, 2020 - link

I think we need to see a benchmark specifically on render frame with single 64 core computer and also with dual 32 core machines in network and quad core machines in network. All machines have same cpu designed, same storage and possibly same memory. Memory is a question able part because of the core load.

I have a feeling with correctly designed render farm the single 64 core will likely lose the battle but the of course the render job must be a large one to merit this test.

For video editing and workstation designed single cpu should be fine.

HStewart - Saturday, February 8, 2020 - link

One more thing these render tests need to be using real Render software - not PovRay, Corona and Blender.e

I personal using Lightwave 3D from NewTek, but 3DMax, Maya and Cimema 3d are good choice - Also custom render man software

Reflex - Saturday, February 8, 2020 - link

It wouldn't change the results.

HStewart - Sunday, February 9, 2020 - link

Yes it would - this a real 3d render projects - for example one of reason I got in Lightwave is Star Trek movies, also used in older serious called Babylon 5 and Sea quest DSV. But you think about Pixar movies instead scenes in games and such.

Reflex - Sunday, February 9, 2020 - link

It would not change the relative rankings of CPU's vs each other by appreciable amounts. Which is what people read a comparative review for.

Reflex - Saturday, February 8, 2020 - link

Network latencies and transfer are significantly below PCIe. Below you challenged my points by discussing I/O and storage, but here you go the other direction suggesting a networked cluster could somehow be faster. That is not only unlikely, it would be ahistorical as clusters built for performance have always been a workaround to limited local resources.

I used to mess around with Beowulf clusters back in the day, it was never, ever faster than simply having a better local node.

Reflex - Friday, February 7, 2020 - link

You may wish to read the article, which answers your 'honest generic CPU question' nicely. Short version is: It depends on your workload. If you just browse the web with a dozen tabs and play games, no this isn't worth the money. If you do large amounts of video processing and get paid for it, this is probably worth every penny. Basically your mileage may vary.

HStewart - Saturday, February 8, 2020 - link

Video processing and rendering likely depends on disk io - also video as far as I know is also signal thready unless the video card allows multiple connections at same time.

I just think adding more core is trying to get away from actually tackling the problem. The designed of the computer needs to change.