Intel NUC10i7FNH Frost Canyon Review: Hexa-Core NUC Delivers a Mixed Bag

by Ganesh T S on March 2, 2020 9:00 AM EST

HTPC Credentials - Display Outputs Capabilities

The

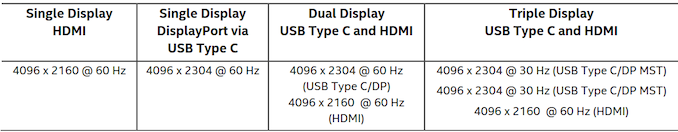

Supporting the display of high-resolution protected video content is a requirement for even a casual HTPC user. In addition, HTPC enthusiasts want their systems to support refresh rates that either match or are an integral multiple of the frame rate of the video being displayed. Most displays / AVRs are able to transmit their supported refresh rates to the PC using EDID metadata. In some cases, the desired refresh rate might be missing from the list of supported modes, and custom resolutions may need to be added.
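The "match or integral multiple" requirement can be illustrated with a short Python sketch. This is only an illustration of the arithmetic, not how the graphics driver or any playback software actually selects a mode. The function names, the list of display modes, and the tolerance value are all assumptions made for the example.

```python
from fractions import Fraction

def is_integral_multiple(refresh_hz, frame_rate, tolerance=0.0005):
    """Return True if refresh_hz is close to an integer multiple of frame_rate.

    `tolerance` is a hypothetical relative margin chosen for this sketch;
    it is loose enough to accept rounded values such as 23.976 Hz, but
    tight enough to reject 24.000 Hz for 24000/1001 fps content.
    """
    ratio = refresh_hz / float(frame_rate)
    multiple = round(ratio)
    return multiple >= 1 and abs(ratio - multiple) <= tolerance * multiple

def matching_modes(supported_hz, frame_rate, tolerance=0.0005):
    """Filter a list of refresh rates down to those suited to frame_rate."""
    return [hz for hz in supported_hz
            if is_integral_multiple(hz, frame_rate, tolerance)]

# NTSC-derived film rate, expressed exactly as 24000/1001 (about 23.976 fps).
film_rate = Fraction(24000, 1001)

# Hypothetical list of refresh rates, standing in for what a display
# might report to the PC.
display_modes = [23.976, 24.0, 47.952, 50.0, 59.94, 60.0]

print(matching_modes(display_modes, film_rate))
```

If none of the reported modes pass a check like this for the content being played, that is the situation in which a custom resolution would need to be added.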

Custom Resolutions

Our evaluation of the

The gallery below presents screenshots from the other refresh rates that were tested. The system has no trouble maintaining a fairly accurate refresh rate throughout the duration of the video playback.

High Dynamic Range (HDR) Support

The ability of the system to support HDR output is brought out in the first line of the madVR OSD in the above pictures. The display / desktop was configured to be in HDR mode prior to the gathering of the above screenshots.

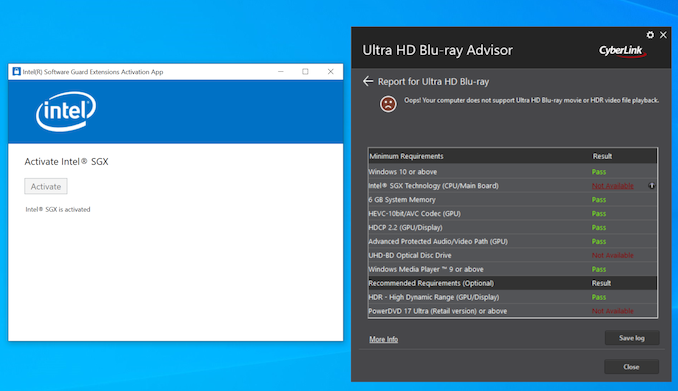

The CyberLink Ultra HD Blu-ray Advisor tool confirms that our setup (

[Update (3/17/2020): While I continue to have terrible luck in getting SGX to operate correctly with the CyberLink Ultra HD Blu-ray Advisor tool, Intel sent across proof that the Frost Canyon NUC is indeed capable of playing back Ultra HD Blu-rays.

It is likely that most consumers using the pre-installed Windows 10 Home x64 / pre-installed drivers will have a painless experience, unlike mine, which started with the barebones version of the system.]

85 Comments


HStewart - Tuesday, March 3, 2020 - link

I will say that the evolution of Windows has hurt the PC market: with more memory and such, Microsoft adds a lot of fat into the OS. As a point-of-sale developer through all these OSes, I wish Microsoft had a way to reduce the stuff one does not need.

Just for information, the original Doom was written totally differently from today's games. Back in the old days, Michael Abrash (a leader in original game graphics) worked with John Carmack of id Software on Doom and Quake. Back then we did not have GPU-driven graphics, and code was written in assembly language.

Over time, development got fat, and higher-level languages plus GPUs and drivers came into the picture. This also occurred in the OS area, where in 1992 I had to change companies because assembly language developers started becoming a dying breed.

I think part of this is that Microsoft started adding so many features to the OS, and there is a lot of bulk to drive the Windows interface, which was much simpler in older versions.

If I were with Microsoft, I would have options in Windows for a super-trim version of the OS, reducing overhead as much as possible. Maybe dual boot to it.

HStewart - Tuesday, March 3, 2020 - link

I have some of Abrash's original books - quite a collector's item nowadays: https://www.amazon.com/Zen-Graphics-Programming-2n...

HStewart - Tuesday, March 3, 2020 - link

And even more - with the Graphics Programming Black Book - almost $1000 now: https://www.amazon.com/Michael-Abrashs-Graphics-Pr...

Qasar - Tuesday, March 3, 2020 - link

you do know there are programs out there that can remove some of the useless bloat that windows auto installs, right? maybe not to the extent that you are referring to, but it is possible. on a fresh reinstall of win 10, i usually remove almost 500 megs of apps that i wont use.

erple2 - Saturday, March 14, 2020 - link

This is an age-old argument that ultimately falls flat in the face of history. "Bloated" software today is VASTLY more capable than the "efficient" code written decades ago. You could make the argument that we might not need all of the capabilities of software today, but I rather like having the incredibly stable OSes of today over what I had to deal with in the past. And yes, OSes today are much more stable than they were in 1992 (not to mention vastly more capable).

Lord of the Bored - Thursday, March 5, 2020 - link

My recollection is that was Windows Vista, not XP. XP was hitting 2D acceleration hardware that had stopped improving much around the time Intel shipped their first graphics adapter. Vista, however, had a newfangled "3D" compositor that took advantage of all the hardware progress that had happened since 1995... and a butt-ugly fallback plan for systems that couldn't use it (read as: Intel graphics).

And then two releases later, Windows 8 dialed things way back because those damnable Intel graphics chips were STILL a significant install base and they didn't want to keep maintaining multiple desktop renderers.

...

Unless the Vista compositor was originally intended for XP, in which case I eat my hat.

TheinsanegamerN - Monday, March 2, 2020 - link

you dont need a 6 core CPU for back office systems or report machines either. So they wouldnt buy this at all. Dell, HP, etc. make small systems with better CPU power for a lower price than this. The appeal of the NUCs was good CPUs with iris level GPUs instead of the UHD that everyone else used.

PeachNCream - Monday, March 2, 2020 - link

The intention of the NUC was to provide a fairly basic computing device in a small and power efficient package. Iris models were something of an aberration in more recent models. In fact, the first couple of NUC generations used some of Intel's slowest processors available at the time.

timniva - Tuesday, March 3, 2020 - link

The point is that if you're making a basic computing device why even go beyond 4 cores. I kind of want a NUC as a basic browsing computer that takes up little space. I can see these being used in the office too. Many use cases for a device like this with 6 or more cores in the office, especially for folks in engineering fields running Matlab or doing development/compiling. However, in almost all of these use cases having a stronger graphics package helps, never mind gaming. Taking a step back in the GPU side, especially given what AMD is doing right now and this being in response to the competition, doesn't make much sense. Perhaps this is just to hold them over until Intel fully transitions to using AMD GPUs in the future?

Lord of the Bored - Thursday, March 5, 2020 - link

Can I just say how much I love that four cores is now considered a "basic" computing device? It leaves me suffused with a warm glow of joy.