The AMD Ryzen 3 3300X and 3100 CPU Review: A Budget Gaming Bonanza

by Dr. Ian Cutress on May 7, 2020 9:00 AM EST

CPU Performance: New Tests!

As part of our ever on-going march towards a better rounded view of the performance of these processors, we have a few new tests for you that we’ve been cooking in the lab. Some of these new benchmarks provide obvious talking points, others are just a bit of fun. Most of them are so new we’ve only run them on a few processors so far. It will be interesting to hear your feedback!

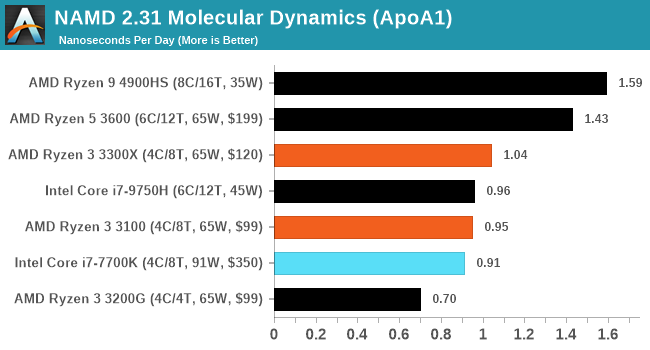

NAMD ApoA1

One frequent request over the years has been for some form of molecular dynamics simulation. Molecular dynamics forms the basis of a lot of computational biology and chemistry when modeling specific molecules, enabling researchers to find low energy configurations or potential active binding sites, especially when looking at larger proteins. We’re using the NAMD software here, or Nanoscale Molecular Dynamics, often cited for its parallel efficiency. Unfortunately the version we’re using is limited to 64 threads on Windows, but we can still use it to analyze our processors. We’re simulating the ApoA1 protein for 10 minutes, and reporting back the ‘nanoseconds per day’ that our processor can simulate. Molecular dynamics is so complex that yes, you can spend a day simply calculating a nanosecond of molecular movement.
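To make the 'nanoseconds per day' metric concrete, here is a minimal sketch of the arithmetic. The step count and the 2 fs timestep are hypothetical values chosen for illustration, not the settings or results from our run, and `ns_per_day` is an illustrative helper rather than anything provided by NAMD.

```python
SECONDS_PER_DAY = 86_400


def ns_per_day(steps_completed: int, timestep_fs: float, wall_seconds: float) -> float:
    """Simulated nanoseconds per day of wall-clock time."""
    if wall_seconds <= 0:
        raise ValueError("wall_seconds must be positive")
    # Each step advances the simulation by timestep_fs femtoseconds; 1 fs = 1e-6 ns.
    simulated_ns = steps_completed * timestep_fs * 1e-6
    # Scale the simulated time from the measured run up to a full day.
    return simulated_ns * SECONDS_PER_DAY / wall_seconds


# Hypothetical run: 6,000 steps of 2 fs each completed in a 10-minute (600 s) window.
example = ns_per_day(steps_completed=6_000, timestep_fs=2.0, wall_seconds=600.0)
print(f"{example:.3f} ns/day")
```

Under those assumed numbers the processor would only manage a little under two nanoseconds of simulated motion per day, which is the scale the metric is meant to convey.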

Crysis CPU Render

One of the most often used memes in computer gaming is ‘Can It Run Crysis?’. The original 2007 game, built on CryEngine 2 by Crytek, was heralded as a computationally complex title for the hardware of the time and for several years after, suggesting that a user needed graphics hardware from the future in order to run it. Fast forward over a decade, and the game runs fairly easily on modern GPUs, but we can also apply the same concept to pure CPU rendering: can the CPU render Crysis? Since 64 core processors entered the market, one can dream. We built a benchmark to see whether the hardware can.

Smooth #canitruncrysis pic.twitter.com/k7x31ULndF

— 𝐷𝑟. 𝐼𝑎𝑛 𝐶𝑢𝑡𝑟𝑒𝑠𝑠 (@IanCutress) May 4, 2020

For this test, we’re running Crysis’ own GPU benchmark, but in CPU render mode. This is a 2000 frame test, which we run over a series of resolutions from 800x600 up to 1920x1080.

Crysis CPU Render: Frames Per Second

| AnandTech | 800x600 | 1024x768 | 1280x800 | 1366x768 | 1600x900 | 1920x1080 |
|---|---|---|---|---|---|---|
| AMD | | | | | | |
| Ryzen 9 4900HS | 11.50 | 8.75 | 7.44 | 6.83 | 5.21 | 4.30 |
| Ryzen 5 3600 | 9.98 | 7.84 | 6.69 | 6.15 | 4.75 | 3.92 |
| Ryzen 3 3300X | 8.42 | 6.52 | 5.43 | 5.01 | 3.92 | 3.07 |
| Ryzen 3 3100 | 7.50 | 5.78 | 4.87 | 4.50 | 3.54 | 2.77 |
| Intel | | | | | | |
| Core i7-7700K | 7.63 | 5.87 | 4.95 | 4.55 | 3.57 | 2.79 |
| Core i7-9750H | 6.78 | 5.17 | 4.37 | 3.99 | 3.12 | 2.46 |
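For a sense of what these frame rates mean in practice, the sketch below converts two of the Ryzen 3 3300X results into an approximate run time for the 2000-frame test and a pixel throughput. It assumes the reported figure is the average frame rate over the whole run; the helper names are illustrative only and not part of the benchmark.

```python
FRAMES = 2000  # length of the benchmark run, as stated in the article

# Ryzen 3 3300X results taken from the table (frames per second).
fps_3300x = {
    (800, 600): 8.42,
    (1920, 1080): 3.07,
}


def run_seconds(fps: float, frames: int = FRAMES) -> float:
    """Wall-clock time for the run, assuming fps is the average over all frames."""
    return frames / fps


def megapixels_per_second(width: int, height: int, fps: float) -> float:
    """Rendered pixel throughput in millions of pixels per second."""
    return width * height * fps / 1e6


for (width, height), fps in fps_3300x.items():
    minutes = run_seconds(fps) / 60
    throughput = megapixels_per_second(width, height, fps)
    print(f"{width}x{height}: {minutes:.1f} min per run, {throughput:.2f} MP/s")
```

On those assumptions, a single pass takes roughly four minutes at 800x600 and close to eleven minutes at 1920x1080, while the pixel throughput is higher at the larger resolution, so the frame rate does not fall in direct proportion to the pixel count.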

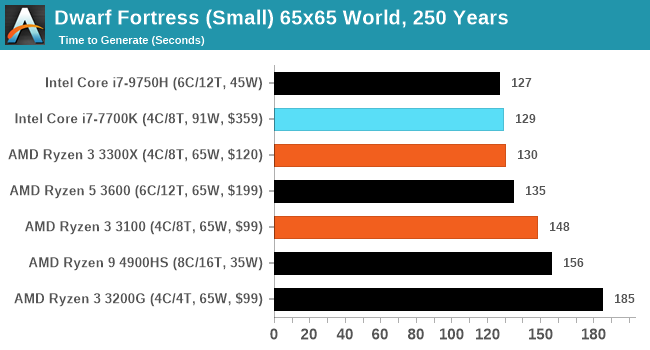

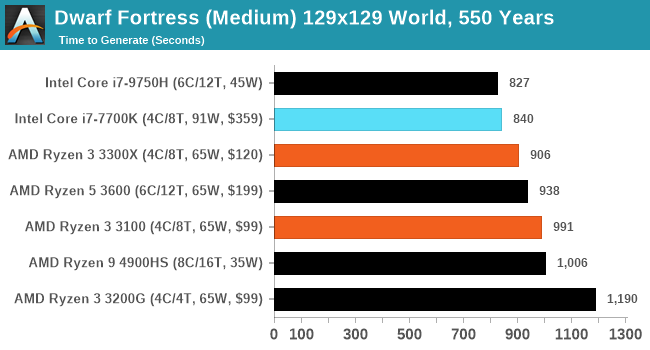

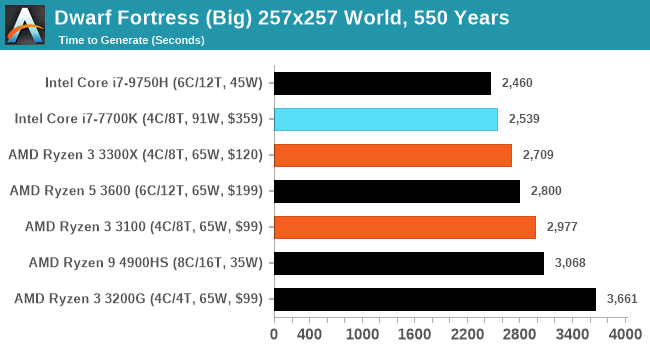

Dwarf Fortress

Another long standing request for our benchmark suite has been Dwarf Fortress, a popular management/roguelike indie video game, first launched in 2006. Emulating the ASCII interfaces of old, this title is a rather complex beast, which can generate environments subject to millennia of rule, famous faces, peasants, and key historical figures and events. The further you get into the game, depending on the size of the world, the slower it becomes.

DFMark is a benchmark built by vorsgren on the Bay12Forums that gives two different modes built on DFHack: world generation and embark. These tests can be configured, but range anywhere from 3 minutes to several hours. I’ve barely scratched the surface here, but after analyzing the test, we ended up going for three different world generation sizes.

Interestingly, Intel's hardware likes Dwarf Fortress.

We also have other benchmarks waiting in the wings, such as AI Benchmark (ETH), LINPACK, and V-Ray; however, they still require a bit of tweaking to get working.

249 Comments


Ian Cutress - Thursday, May 7, 2020

4790K 6700K 7700K

All Intel quad core showing generational differences as to where the 3300X and 3100 fit in.

1600X, 1700, 1700X, 1800X are all in our benchmark database, Bench.

It's practically listed on almost every page.

notb - Thursday, May 7, 2020

And that's obviously great. But with that approach you could just write "3100 and 3300X added to Bench", right? :)

I have nothing against the factual layer of this article. Results are as expected and they look consistent.

But it's essentially a story how an entry-level $120 CPU from company A beats a not-so-ancient flagship from company B.

So I'm merely wondering why you decided to write it like this, instead of comparing to wider choice of expensive CPUs from 2017. Because in many of your results 3300X beats 1st gen Ryzens that were even more expensive than the 7700K.

Or you could include older 4C/8T Ryzens (1500X) - showing how much faster Zen2 is.

Instead you've included the older 6-core Ryzens, which are neither similar in core count nor in MSRP.

Ian Cutress - Friday, May 8, 2020

2600/1600 AF is ~$85 at retail (where you can find it), and judging by the comments, VERY popular. That's why this was included.

Deicidium369 - Friday, May 8, 2020

Just say AMD GOOD! INTEL BAD! that's all they are looking for

eastcoast_pete - Thursday, May 7, 2020

Some other sites have, and yes, the 3300 gives most of the 1st generation Ryzens a run for their money.

Irata - Thursday, May 7, 2020

This is a highly impressive little CPU for the money.

I particularly liked the 3300X's good showing. If this is at least in part due to it using only one CCX, this should bode well for Ryzen 3 which should have an eight core CCX.

Look at some tests were Ryzen did not do so well wrt their Intel counterpart like Kraken and Octane - the 3300x now does very well. It even scores slightly better than the 3700x

wr3zzz - Thursday, May 7, 2020

Does the B550 MB need active cooling? I can't tell from the pic.

callmebob - Thursday, May 7, 2020

Look at the spec graphics. Note the only difference to the old B450 is pretty much that it provides PCIe 3.0 lanes instead of PCIe 2.0.

Now, when was the last time you saw a PCIe 3.0-based chipset hub needing active cooling?

As an aside, while i am kinda glad the B550 is finally coming, i am also a bit disappointed in seeing AMD (and their design/manufacturing partners) needing a better part of a year just for managing a bump from PCIe 2.0 to PCIe 3.0. PCIe 3.0 has been in the market for around eight years now; there is no excuse for AMD taking this long to figure out this s*it.

Fritzkier - Thursday, May 7, 2020

Because their PCIe 3 and 4 was provided by the CPU tho. Or maybe there's an advantage of PCIe lanes provided by the chipset?

callmebob - Thursday, May 7, 2020

Haha, do you even know _how many_ PCIe lanes the CPU provides? Wager a guess whether it is for more than a single x16 slot?