Intel’s Tiger Lake 11th Gen Core i7-1185G7 Review and Deep Dive: Baskin’ for the Exotic

by Dr. Ian Cutress & Andrei Frumusanu on September 17, 2020 9:35 AM EST

Posted in: CPUs, Intel, 10nm, Tiger Lake, Xe-LP, Willow Cove, SuperFin, 11th Gen, i7-1185G7, Tiger King

New Instructions and Updated Security

When a new generation of processors is launched, alongside the physical design and layout changes made, this is usually the opportunity to also optimize instruction flow, increase throughput, and enhance security.

Core Instructions

When Intel first stated to us in our briefings that by-and-large, aside from the caches, the new core was identical to the previous generation, we were somewhat confused. Normally we see something like a common math function get sped up in the ALUs, but no – the only additional changes made were for security.

As part of our normal benchmark tests, we do a full instruction sweep, covering throughput and latency for all (known) supported instructions inside each of the major x86 extensions. We did find some minor enhancements within Willow Cove.

- CLD/STD - Clearing and setting the data direction flag - Latency is reduced from 5 to 4 clocks

- REP STOS* - Repeated String Stores - Increased throughput from 53 to 62 bytes per clock

- CMPXCHG16B - compare and exchange bytes - latency reduced from 17 clocks to 16 clocks

- LFENCE - serializes load instructions - throughput up from 5/cycle to 8/cycle

There were two regressions:

- REP MOVS* - Repeated Data String Moves - Decreased throughput from 101 to 93 bytes per clock

- SHA256MSG1 - SHA256 message scheduling - throughput down from 5/cycle to 4/cycle
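To put the six measured changes on a common scale, the short sketch below converts each before/after pair from the lists above into a relative change. It takes the figures and their units at face value as quoted; the helper names (`percent_change`, `is_improvement`, `summarize`) are made up for this illustration.

```python
# Figures copied from the two lists above: (label, before, after, lower_is_better).
# Latency is better when lower; throughput is better when higher.
MEASUREMENTS = [
    ("CLD/STD latency (clocks)", 5, 4, True),
    ("REP STOS* throughput (bytes/clock)", 53, 62, False),
    ("CMPXCHG16B latency (clocks)", 17, 16, True),
    ("LFENCE throughput (per cycle)", 5, 8, False),
    ("REP MOVS* throughput (bytes/clock)", 101, 93, False),
    ("SHA256MSG1 throughput (per cycle)", 5, 4, False),
]


def percent_change(before, after):
    """Signed change relative to the previous-generation figure, in percent."""
    return (after - before) / before * 100.0


def is_improvement(before, after, lower_is_better):
    """Latency improves when it drops; throughput improves when it rises."""
    return after < before if lower_is_better else after > before


def summarize(measurements):
    """Return (label, percent change rounded to 0.1, 'better' or 'worse') rows."""
    rows = []
    for label, before, after, lower_is_better in measurements:
        verdict = "better" if is_improvement(before, after, lower_is_better) else "worse"
        rows.append((label, round(percent_change(before, after), 1), verdict))
    return rows


for label, change, verdict in summarize(MEASUREMENTS):
    print(f"{label:40s} {change:+6.1f}%  {verdict}")
```

On these numbers the REP STOS* gain is roughly 17% and the REP MOVS* regression roughly 8%, which supports the point that the changes are small in absolute terms.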

It is worth noting that Willow Cove, while supporting SHA instructions, does not have any form of hardware-based SHA acceleration. By comparison, Intel’s lower-power Tremont Atom core does have SHA acceleration, as do AMD’s Zen 2 cores, VIA’s cores, and the cores from VIA’s Zhaoxin joint venture. I’ve asked Intel exactly why the Cove cores don’t have hardware-based SHA acceleration (whether because current performance is sufficient, or because of timing, power, or die area), but have yet to receive an answer.

From a pure x86 instruction performance standpoint, Intel is correct in that there aren’t many changes here. By comparison, the jump from Skylake to Cannon Lake was bigger than this.

Security and CET

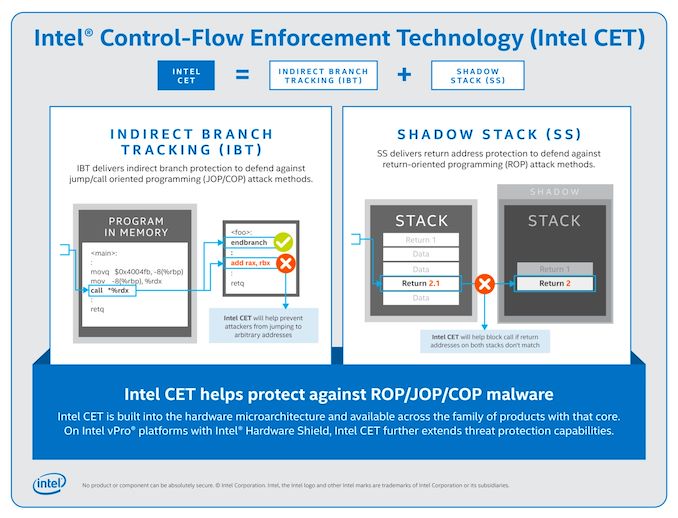

On the security side, Willow Cove now enables Control-Flow Enforcement Technology (CET) to protect against a new type of attack. This attack takes advantage of control transfer instructions, such as returns, calls and jumps, to divert the instruction stream to undesired code.

CET is the combination of two technologies: Shadow Stacks (SS) and Indirect Branch Tracking (IBT).

For returns, the Shadow Stack creates a second stack elsewhere in memory, addressed through a shadow stack pointer register, that holds a list of return addresses with page tracking. If the return address on the normal stack does not match the return address expected in the shadow stack when a return executes, the attack will be caught. Shadow stacks are implemented without code changes; however, additional management in the event of an attack will need to be programmed for.
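The return-address check described above can be illustrated with a toy software model. This is only a sketch of the idea, not how the hardware is implemented: the `ToyCpu` class, its method names, and the `ControlProtectionFault` exception are all hypothetical, and real shadow stacks live in specially protected memory pages rather than in a Python list.

```python
class ControlProtectionFault(Exception):
    """Raised in this toy model when the two stacks disagree on a return address."""


class ToyCpu:
    def __init__(self):
        # Ordinary stack: the program (and therefore an attacker) can overwrite it.
        self.data_stack = []
        # Shadow stack: in this model, only call() and ret() ever touch it.
        self.shadow_stack = []

    def call(self, return_address):
        """A call pushes the return address onto both stacks."""
        self.data_stack.append(return_address)
        self.shadow_stack.append(return_address)

    def ret(self):
        """A return pops both stacks and faults if the addresses differ."""
        address = self.data_stack.pop()
        expected = self.shadow_stack.pop()
        if address != expected:
            raise ControlProtectionFault(
                f"return to {address:#x}, shadow stack expected {expected:#x}"
            )
        return address


# Normal call/return: both copies match, so the return proceeds.
cpu = ToyCpu()
cpu.call(0x401000)
print(hex(cpu.ret()))

# Simulated attack: the return address on the ordinary stack is overwritten.
cpu.call(0x401000)
cpu.data_stack[-1] = 0xDEADBEEF
try:
    cpu.ret()
except ControlProtectionFault as fault:
    print("caught:", fault)
```

The model also shows why handling still has to be programmed for: the mismatch only raises a fault, and what happens next is up to the software that receives it.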

New instructions are added for shadow stack page management:

- INCSSP: increment shadow stack pointer (i.e. to unwind shadow stack)

- RDSSP: read shadow stack pointer into general purpose register

- SAVEPREVSSP/RSTORSSP: save/restore shadow stack (i.e. thread switching)

- WRSS: Write to Shadow Stack

- WRUSS: Write to User Shadow Stack

- SETSSBSY: Set Shadow Stack Busy Flag to 1

- CLRSSBSY: Clear Shadow Stack Busy Flag to 0

Indirect Branch Tracking is added to defend against equivalent misdirected jump/call targets, but requires software to be built with new instructions:

- ENDBR32/ENDBR64: Terminate an indirect branch in 32-bit/64-bit mode
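A rough way to picture Indirect Branch Tracking is as a two-state check: after an indirect jump or call, the very next instruction executed must be an ENDBR marker, otherwise a fault is raised. The sketch below models that rule on a made-up instruction list; the tuple-based program encoding, the `run` function, and the `ControlProtectionFault` exception are illustrative assumptions, not Intel's specification.

```python
class ControlProtectionFault(Exception):
    """Raised in this toy model when an indirect branch lands on a non-ENDBR instruction."""


def run(program, entry=0, max_steps=100):
    """Execute a toy program and return the list of visited instruction indices.

    Each instruction is a tuple: ("endbr",), ("nop",), ("halt",),
    or ("jmp_indirect", target_index).
    """
    pc = entry
    expecting_endbr = False  # set right after an indirect branch
    visited = []
    for _ in range(max_steps):
        op = program[pc]
        if expecting_endbr:
            if op[0] != "endbr":
                raise ControlProtectionFault(
                    f"indirect branch landed on {op[0]!r} at index {pc}"
                )
            expecting_endbr = False
        visited.append(pc)
        if op[0] == "halt":
            return visited
        if op[0] == "jmp_indirect":
            pc = op[1]
            expecting_endbr = True
        else:
            pc += 1  # endbr and nop simply fall through
    raise RuntimeError("step limit reached")


# A build with the marker in place: the jump lands on ENDBR and execution continues.
good = [("jmp_indirect", 2), ("nop",), ("endbr",), ("halt",)]
print(run(good))

# A diverted jump: the target is not marked, so the branch is rejected.
bad = [("jmp_indirect", 1), ("nop",), ("endbr",), ("halt",)]
try:
    run(bad)
except ControlProtectionFault as fault:
    print("caught:", fault)
```

This is why the software has to be rebuilt: every legitimate indirect branch target needs the marker, so that unmarked locations can be rejected.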

Full details about Intel’s CET can be found in Intel’s CET Specification.

At the time of presentation, we were under the impression that CET would be available for all of Intel’s processors. However, we have since learned that Intel’s CET will require a vPro-enabled processor as well as operating system support for Hardware-Enforced Stack Protection. This is currently available in Windows 10’s Insider Previews. I am unsure about Linux support at this time.

Update: Intel has reached out to say that their text implying that CET was vPro only was badly worded. What it was meant to say was 'All CPUs support CET, however vPro also provides additional security such as Intel Hardware Shield'.

AI Acceleration: AVX-512, Xe-LP, and GNA2.0

One of the big changes for Ice Lake last time around was the inclusion of an AVX-512 unit on every core, which enabled vector acceleration for a variety of code paths. Tiger Lake retains Intel’s AVX-512 instruction unit, with support for the VNNI instructions introduced with Ice Lake.

It is easy to argue that although AVX-512 has been around for a number of years, particularly in the server space, we haven’t yet seen it propagate into the consumer ecosphere in any large way. Most efforts for AVX-512 have been made by software companies in close collaboration with Intel, taking advantage of Intel’s own vector gurus and ninja programmers. Out of the 19-20 or so software tools that Intel likes to promote as being AI accelerated, only a handful focus on the AVX-512 unit, and some of those tools are within the same software title (e.g. Adobe CC).

There has been a famous ruckus recently with the Linux creator Linus Torvalds suggesting that ‘AVX-512 should die a painful death’, citing that AVX-512, due to the compute density it provides, reduces the frequency of the core as well as removes die area and power budget from the rest of the processor that could be spent on better things. Intel stands by its decision to migrate AVX-512 across to its mobile processors, stating that its key customers are accustomed to seeing instructions supported across its processor portfolio from Server to Mobile. Intel implied that AVX-512 has been a win in its HPC business, but it will take time for the consumer platform to leverage the benefits. Some of the biggest uses so far for consumer AVX-512 acceleration have been for specific functions in Adobe Creative Cloud, or AI image upscaling with Topaz.

Intel has enabled new AI instruction functionality in Tiger Lake, such as DP4a, which is an Xe-LP addition. Tiger Lake also sports an updated Gaussian & Neural Accelerator (GNA) 2.0, which Intel states can offer 1 Giga-OP of inference within one milliwatt of power, scaling up to 38 Giga-OPs at 38 mW. The GNA is mostly used for natural language processing, or wake words. In order to enable AI acceleration through the AVX-512 units, the Xe-LP graphics, and the GNA, Tiger Lake supports Intel’s latest DL Boost package and the upcoming oneAPI toolkit.
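For readers unfamiliar with DP4a, the operation is a four-element 8-bit integer dot product accumulated into a 32-bit integer, which is the same basic building block the AVX-512 VNNI instructions provide on the CPU side. The sketch below models it in plain Python. The function names are hypothetical, and treating both inputs as signed 8-bit with wraparound (non-saturating) accumulation is an assumption made for illustration; hardware variants differ in signedness and saturation.

```python
def to_int32(value):
    """Wrap an integer to signed 32-bit two's complement."""
    value &= 0xFFFFFFFF
    return value - 0x100000000 if value >= 0x80000000 else value


def dp4a(a, b, acc):
    """Return acc + a[0]*b[0] + a[1]*b[1] + a[2]*b[2] + a[3]*b[3] as an int32.

    a and b are sequences of four signed 8-bit values (assumed signedness).
    """
    if len(a) != 4 or len(b) != 4:
        raise ValueError("dp4a operates on exactly four elements per input")
    for x in (*a, *b):
        if not -128 <= x <= 127:
            raise ValueError(f"{x} does not fit in a signed 8-bit value")
    return to_int32(acc + sum(x * y for x, y in zip(a, b)))


def dot_int8(a, b):
    """Dot product of two int8 vectors, four elements per dp4a step."""
    if len(a) != len(b) or len(a) % 4 != 0:
        raise ValueError("inputs must have equal length, a multiple of four")
    acc = 0
    for i in range(0, len(a), 4):
        acc = dp4a(a[i:i + 4], b[i:i + 4], acc)
    return acc


print(dp4a([1, 2, 3, 4], [5, 6, 7, 8], 10))
print(dot_int8([1, 2, 3, 4, 5, 6, 7, 8], [1] * 8))
```

Quantized neural network inference is dominated by dot products of this kind, which is why a single instruction that does four multiplies and an accumulate is promoted as AI acceleration.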

253 Comments

ikjadoon - Thursday, September 17, 2020

You wrote this twice without any references, but I'll just write this once:

AMD is literally moving to custom Wi-Fi 6 modems w/ Mediatek (e.g., like ASMedia and AMD chipsets): https://www.tomshardware.com/news/report-amd-taps-...

PCIe4: it doesn't need to 'max out' a protocol to be beneficial and likewise allows fewer lanes for the same bandwidth (i.e., PCIe Gen4 also powers the DMI interface now, no?).

Thunderbolt 4 is genuinely an improvement over USB4. Anandtech wrote an entire article about TB4: https://www.anandtech.com/show/15902/intel-thunder... (mandates unlike USB4, 40 Gbps, DMA protection, wake-up by dock, charging, daisychaining, etc). Anybody who's bought a laptop in the past two years know that "USB type-C" is about as informative as "My computer runs an operating system."

AVX512 / DLboost: fair, nobody cares on a thin-and-light laptop.

LPDDR5 is likely coming in 2021 to a Tiger Lake refresh around CES. Open game how many OEMs will wait; noting very few of the 100s of laptop design wins have been released, I suspect many top-tier notebooks will wait.

Billy Tallis - Thursday, September 17, 2020

I'd be surprised if the chipset is using gen4 speeds for the DMI or whatever they call it in mobile configurations. The PCIe lanes downstream of the chipset are all still gen3 speed, so there's not much demand for increased IO bandwidth. And last time, Intel took a very long time to upgrade their chipsets and DMI after their CPUs started offering faster PCIe on the direct attached lanes.

JayNor - Saturday, September 19, 2020

4 lanes of pcie4 are on the cpu chiplet, as are the thunderbolt io. They can be used for GPU or SSD.

Billy Tallis - Saturday, September 19, 2020

Did you mean to reply to a different comment?

RedOnlyFan - Friday, September 18, 2020

Lol this is so uneducated comment. Telling wrong stuff twice doesn't make it correct.

Pcie4 implemented properly should consume less power than pcie3.

Thunderbolt 4 is not USB 4. Only tb3 was open sourced to USB 4 so USB 4 will be a subset for tb3 thank Intel for that.

There are more AI/ML used in the background than you realize. If you expect people to do highly multi threaded rendering stuff.. Why not expect AI/ML stuff?

And 2022 is still 1.5 year away. So amd is entering the party after its over.

JayNor - Saturday, September 19, 2020

Thunderbolt 4 doubles the pcie speed vs Thunderbolt 3 that was donated for USB. Intel has also now donated the Thunderbolt 4 spec.

Spunjji - Friday, September 18, 2020

They have 4 (four) lanes of PCIe 4.0 - that provides the same bandwidth as Renoir's 8 lanes of 3.0

I get that you're one of those posters who just repeats a list of features that Intel has and AMD doesn't in order to declare a "win", but seriously, at least pick one that provides a benefit.

JayNor - Saturday, September 19, 2020

The m.2 pcie4 chips use 4 lanes. Seems like a good combo with Tiger Lake. AMD would need to use up 8 lanes to match it with their current laptop chips.

Rudde - Saturday, September 19, 2020

Problem is that there isn't any reasonable mobile pcie4 SSDs yet. Same problem with lpddr5. Tiger Lake will get them when they become available. Renoir was released half a year ago; all AMD based laptops will wait for next gen before adopting these technologies anyway.

If you want to argue that AMD is behind, highlight what Ice Lake has, but Renoir doesn't have.

Spunjji - Saturday, September 19, 2020

Why would they bother? There are no performance benefits to using a PCIe 4 SSD in the kinds of systems TGL will go into. You can't get data off it fast enough for the read speed to matter, and it has no effect on any of the applications anyone is likely to use on a laptop that has no GPU. This is aside from Rudde's point about there currently being no products that suit this use case.