Investigating Performance of Multi-Threading on Zen 3 and AMD Ryzen 5000

by Dr. Ian Cutress on December 3, 2020 10:00 AM EST. Posted in:

- CPUs

- AMD

- Zen 3

- X570

- Ryzen 5000

- Ryzen 9 5950X

- SMT

- Multi-Threading

One of the stories around AMD’s initial generations of Zen processors was the effect of Simultaneous Multi-Threading (SMT) on performance. With this mode enabled, as is the default in most situations, users saw significant performance gains in workloads that could take advantage of it. The reasons for this increase come down to two competing explanations: either the core is designed in a way that leaves it underutilized by one thread, or the SMT strategy is efficient enough to extract extra performance. In this review, we take a look at AMD’s latest Zen 3 architecture to observe the benefits of SMT.

What is Simultaneous Multi-Threading (SMT)?

We often consider each CPU core as being able to process one stream of serial instructions for whatever program is being run. Simultaneous Multi-Threading, or SMT, enables a processor to run two concurrent streams of instructions on the same processor core, sharing resources and filling potential downtime on one set of instructions by having a secondary set come in and take advantage of the underutilization. Two of the main limiting factors in most computing models are compute and memory latency, and SMT is designed to interleave sets of instructions to optimize compute throughput while hiding memory latency.

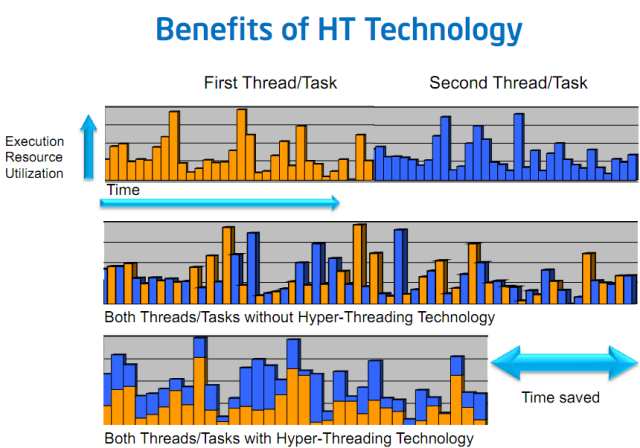

An old slide from Intel, which has its own marketing term for SMT: Hyper-Threading

When SMT is enabled, depending on the processor, it will allow two, four, or eight threads to run on that core (we have seen some esoteric compute-in-memory solutions with 24 threads per core). Instructions from any thread are rearranged to be processed in the same cycle and keep utilization of the core resources high. Because multiple threads are used, this is known as extracting thread-level parallelism (TLP) from a workload, whereas a single thread with instructions that can run concurrently is instruction-level parallelism (ILP).

Is SMT A Good Thing?

It depends on who you ask.

SMT2 (two threads per core) involves creating core structures sufficient to hold and manage two instruction streams, as well as managing how those core structures share resources. For example, if one particular buffer in your core design is meant to handle up to 64 instructions in a queue, and the average occupancy is lower than that (such as 40), then the buffer is underutilized, and an SMT design will keep the buffer fed, on average, closer to the top. That buffer might be increased to 96 instructions in the design to account for this, ensuring that if both instruction streams are running at an ‘average’, then both will have sufficient headroom. This means two threads’ worth of use for only 1.5 times the buffer size. If all else works out, then it is double the performance for less than double the core design area. But in ST mode, where that 96-entry buffer is on average only around 40% filled, the whole buffer still has to be powered on all the time, so it might be wasting power.
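The buffer arithmetic above can be checked with a few lines of Python. This is only a sketch of the illustrative numbers in the text (64 entries, 96 entries, 40 instructions on average); the function and variable names are made up for the example and do not describe any real core.

```python
def utilization(occupied_entries, capacity):
    """Fraction of a queue's entries that are in use."""
    return occupied_entries / capacity


AVG_PER_THREAD = 40  # average occupancy of one instruction stream (from the text)
ST_CAPACITY = 64     # buffer sized for a single thread
SMT_CAPACITY = 96    # enlarged buffer shared by two threads

st_design = utilization(AVG_PER_THREAD, ST_CAPACITY)             # one thread, 64 entries
smt_two_threads = utilization(2 * AVG_PER_THREAD, SMT_CAPACITY)  # two threads, 96 entries
smt_one_thread = utilization(AVG_PER_THREAD, SMT_CAPACITY)       # one thread, 96 entries
growth = SMT_CAPACITY / ST_CAPACITY                              # relative buffer size

print(f"{st_design:.1%} {smt_two_threads:.1%} {smt_one_thread:.1%} {growth:.2f}x")
# prints: 62.5% 83.3% 41.7% 1.50x
```

Two average threads fill the larger buffer better than one thread filled the original, while a lone thread leaves more than half of the enlarged buffer idle.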

But, if a core design benefits from SMT, then perhaps the core hasn’t been designed optimally for single-thread performance in the first place. If enabling SMT gives a user exactly double the performance and perfect scaling across the board, as if there were two cores, then perhaps there is a direct issue with how the core is designed, from execution units to buffers to cache hierarchy. It has been known for users to complain that they only get a 5-10% gain in performance with SMT enabled, stating it doesn't work properly - this could just be because the core is designed better for ST. Similarly, stating that a +70% performance gain means that SMT is working well could be more of a signal of an unbalanced core design that wastes power.

This is the dichotomy of Simultaneous Multi-Threading. If it works well, then a user gets extra performance. But if it works too well, perhaps this is indicative of a core not suited to a particular workload. The answer to the question ‘Is SMT a good thing?’ is more complicated than it appears at first glance.

We can split up the systems that use SMT:

- High-performance x86 from Intel

- High-performance x86 from AMD

- High-performance POWER/z from IBM

- Some High-Performance Arm-based designs

- High-Performance Compute-In-Memory Designs

- High-Performance AI Hardware

Comparing to those that do not:

- High-efficiency x86 from Intel

- All smartphone-class Arm processors

- Successful High-Performance Arm-based designs

- Highly focused HPC workloads on x86 with compute bottlenecks

(Note that Intel calls its SMT implementation ‘Hyper-Threading’, which is a marketing term specifically for Intel.)

At this point, we've only been discussing SMT where we have two threads per core, known as SMT2. Some of the more esoteric hardware designs go beyond two threads-per-core based SMT, and use up to eight. You will see this stylized in documentation as SMT8, compared to SMT2 or SMT4. This is how IBM approaches some of its designs. Some compute-in-memory applications go as far as SMT24!!

There is a clear dividing line between SMT-enabled systems and no-SMT systems, and it seems to be whether the design targets high performance. The one exception to that is the recent Apple M1 processor and the Firestorm cores.

It should be noted that for systems that do support SMT, it can be disabled to force it down to one thread per core, to run in SMT1 mode. This has a few major benefits:

It enables each thread to have access to a full core's worth of resources. In some workload situations, having two threads on the same core means sharing resources, which can cause additional unintended latency. That matters for latency-critical workloads where deterministic (the same) performance is required. It also reduces the number of threads competing for L3 capacity, should that be a limiting factor. Also, should any software be required to probe every other thread for data, for a 16-core processor like the 5950X that means only reaching out to 15 other threads rather than 31 other threads, reducing potential crosstalk limited by core-to-core connectivity.
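As a rough illustration of the crosstalk point, the sketch below counts how many peers each thread has to probe, and how many probes occur in total if every thread probes every other thread once. The all-to-all pattern is an assumption made for the example; real software may communicate far less.

```python
def probe_counts(cores, threads_per_core):
    """Return (peers per thread, total directed probes) for all-to-all probing."""
    threads = cores * threads_per_core
    peers_per_thread = threads - 1
    total_probes = threads * peers_per_thread
    return peers_per_thread, total_probes


smt_off = probe_counts(cores=16, threads_per_core=1)
smt_on = probe_counts(cores=16, threads_per_core=2)

print(smt_off)  # (15, 240)
print(smt_on)   # (31, 992)
```

Doubling the thread count roughly quadruples the total traffic in this worst case, which is why the per-thread figure of 15 versus 31 understates the difference.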

The other aspect is power. With a single thread on a core and no other thread to jump in when resources are underutilized, a delay caused by pulling something from main memory means the core draws less power, providing budget for other cores to ramp up in frequency. This is a bit of a double-edged sword if the core is still at a high voltage while waiting for data in an SMT-disabled mode. SMT in this way can help improve performance per watt, assuming that enabling SMT doesn’t cause competition for resources and arguably longer stalls waiting for data.

Mission-critical enterprise workloads that require deterministic performance, and some HPC codes that require large amounts of memory per thread, often disable SMT on their deployed systems. Consumer workloads are often not as critical (at least in terms of scale and $$$), and so the topic isn’t often covered in detail.

Most modern processors, when in SMT-enabled mode, will operate as if in SMT-off mode when running a single instruction stream, giving that stream full access to resources. Some software takes advantage of this, spawning only one thread for each physical core on the system. Because core structures can be dynamically partitioned (adjusts resources for each thread while threads are in progress) or statically shared (adjusts before a workload starts), situations where the two threads on a core are creating their own bottleneck would benefit from having only a single thread per core active. Knowing how a workload uses a core can help when designing software to make use of multiple cores.
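A minimal sketch of the one-thread-per-physical-core approach is shown below. It assumes a Linux system that exposes the sysfs `thread_siblings_list` topology files, and falls back to the logical CPU count elsewhere; the helper names are hypothetical, and the sketch ignores affinity masks and container limits.

```python
import os
from pathlib import Path


def physical_core_count(sibling_lists):
    """Count physical cores from per-CPU sibling strings.

    Every logical CPU on the same core reports the same sibling string
    (for example "0,16"), so the number of distinct strings is the core count.
    """
    return len({s.strip() for s in sibling_lists if s.strip()})


def read_sibling_lists(root="/sys/devices/system/cpu"):
    """Read Linux sysfs topology files; returns [] if they are unavailable."""
    try:
        paths = sorted(Path(root).glob("cpu[0-9]*/topology/thread_siblings_list"))
        return [p.read_text() for p in paths]
    except OSError:
        return []


def worker_count():
    """Pick one worker per physical core when the topology is known."""
    siblings = read_sibling_lists()
    if siblings:
        return physical_core_count(siblings)
    # Fallback: logical CPU count, which overcounts when SMT is enabled.
    return os.cpu_count() or 1


print(worker_count())
```

The result could then be passed as the size of a worker pool, for example as `max_workers` in `concurrent.futures.ThreadPoolExecutor`.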

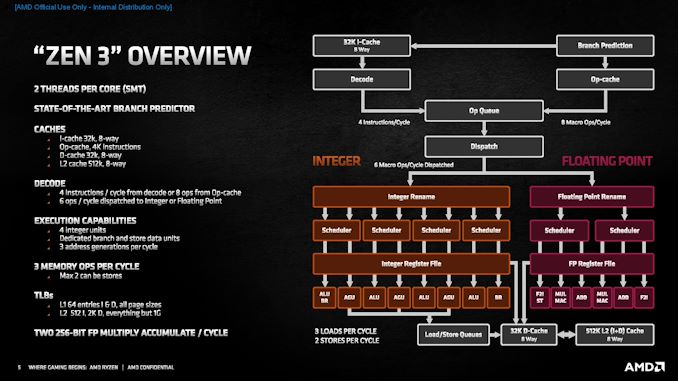

Here is an example of a Zen3 core, showing all the structures. One of the progress points with every new generation of hardware is to reduce the number of statically allocated structures within a core, as dynamic structures often give the best flexibility and peak performance. In the case of Zen3, only three structures are still statically partitioned: the store queue, the retire queue, and the micro-op queue. This is the same as Zen2.
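To make the difference between static and dynamic partitioning concrete, here is a toy model of a shared queue. The 64-entry capacity and the per-thread demands are invented for illustration, and the round-robin policy is an assumption; it is not how Zen3 or any real core arbitrates its structures.

```python
def static_partition(capacity, demands):
    """Each active thread gets a fixed, equal slice, used or not."""
    share = capacity // len(demands)
    return [min(demand, share) for demand in demands]


def dynamic_partition(capacity, demands):
    """Hand out entries one at a time, round-robin, to threads that want more."""
    granted = [0] * len(demands)
    remaining = capacity
    while remaining > 0:
        progressed = False
        for i, demand in enumerate(demands):
            if granted[i] < demand and remaining > 0:
                granted[i] += 1
                remaining -= 1
                progressed = True
        if not progressed:  # every thread is satisfied
            break
    return granted


# One busy thread (wants 50 entries) next to a light thread (wants 10).
print(static_partition(64, [50, 10]))   # [32, 10]
print(dynamic_partition(64, [50, 10]))  # [50, 10]
# Two busy threads end up with the same split either way.
print(dynamic_partition(64, [50, 50]))  # [32, 32]
```

In this model the static split leaves 22 entries idle while the busy thread is capped at 32, which is the flexibility argument for moving structures to dynamic allocation.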

SMT on AMD Zen3 and Ryzen 5000

Much like AMD’s previous Zen-based processors, the Ryzen 5000 series, which uses Zen3 cores, also has an SMT2 design. By default this is enabled in every consumer BIOS; however, users can choose to disable it through the firmware options.

For this article, we have run our AMD Ryzen 9 5950X processor, a 16-core high-performance Zen3 processor, in both SMT Off and SMT On modes through our test suite and through some industry standard benchmarks. The goals of these tests are to ascertain the answers to the following questions:

- Is there a single-thread benefit to disabling SMT?

- How much performance increase does enabling SMT provide?

- Is there a change in performance per watt in enabling SMT?

- Does having SMT enabled result in a higher workload latency?*

*more important for enterprise/database/AI workloads

The best argument for enabling SMT would be a No-Lots-Yes-No result. Conversely the best argument against SMT would be a Yes-None-No-Yes. But because the core structures were built with having SMT enabled in mind, the answers are rarely that clear.
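The second and third questions reduce to two simple ratios. The sketch below shows how they could be computed from a benchmark score and a package power reading taken in each mode; the input values are placeholders for illustration only and are not measurements from this review.

```python
def smt_uplift(score_smt_off, score_smt_on):
    """Relative performance gain from enabling SMT (0.25 means +25%)."""
    return score_smt_on / score_smt_off - 1.0


def perf_per_watt(score, package_watts):
    """Benchmark score per watt of package power."""
    return score / package_watts


# Placeholder inputs for illustration only; these are NOT measurements.
score_off, watts_off = 100.0, 120.0
score_on, watts_on = 125.0, 130.0

uplift = smt_uplift(score_off, score_on)
ppw_change = perf_per_watt(score_on, watts_on) / perf_per_watt(score_off, watts_off) - 1.0

print(f"uplift {uplift:+.1%}, perf/W change {ppw_change:+.1%}")
# prints: uplift +25.0%, perf/W change +15.4%
```

With these placeholder inputs the answers would be ‘Lots’ and ‘Yes’: power rises with SMT on, but the score rises faster.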

Test System

For our test suite, due to obtaining new 32 GB DDR4-3200 memory modules for Ryzen testing, we re-ran our standard test suite on the Ryzen 9 5950X with SMT On and SMT Off. As per our usual testing methodology, we test memory at official rated JEDEC specifications for each processor at hand.

| Test Setup | |||||

| AMD AM4 | Ryzen 9 5950X | MSI X570 Godlike |

1.B3T13 AGESA 1100 |

Noctua NH-U12S |

ADATA 4x32 GB DDR4-3200 |

| GPU | Sapphire RX 460 2GB (CPU Tests) NVIDIA RTX 2080 Ti |

||||

| PSU | OCZ 1250W Gold | ||||

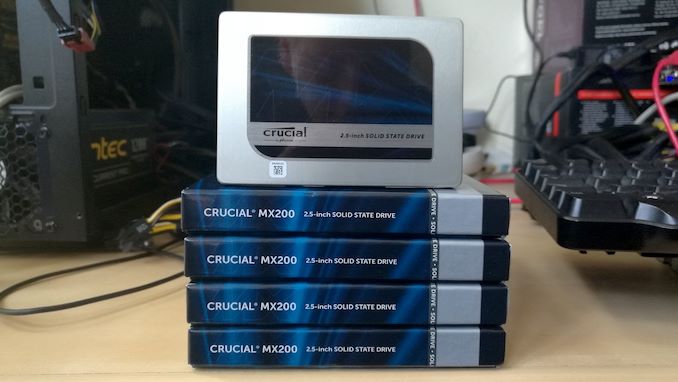

| SSD | Crucial MX500 2TB | ||||

| OS | Windows 10 x64 1909 Spectre and Meltdown Patched |

||||

| VRM Supplimented with Silversone SST-FHP141-VF 173 CFM fans | |||||

Also many thanks to the companies that have donated hardware for our test systems, including the following:

| Hardware Providers for CPU and Motherboard Reviews | |||

| Sapphire RX 460 Nitro |

NVIDIA RTX 2080 Ti |

Crucial SSDs | Corsair PSUs |

|

|

|

|

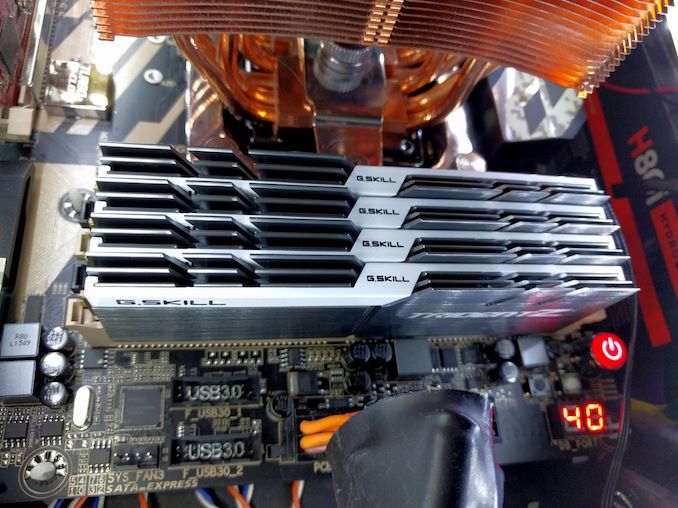

| G.Skill DDR4 | ADATA DDR4-3200 32GB |

Silverstone Ancillaries |

Noctua Coolers |

|

|

|

|

126 Comments

GeoffreyA - Tuesday, December 8, 2020 - link

There's a single set of 4 decoders. In SMT mode, I believe some sharing is going in. This is from the original Zen design: https://images.anandtech.com/doci/10591/HC28.AMD.M...

GeoffreyA - Tuesday, December 8, 2020 - link

* going on

naive dev - Wednesday, December 9, 2020 - link

Right, I found that article as well and from that slide it looks like the decoder would be shared. But then that slide was from 2017, so that might have changed.

It looks though as if the decoder could decode those 4 instructions from a single program counter only, right? It's not like the decoder could decode e.g. 2 instructions from program counter 1 and another 2 instructions from program counter 2?

GeoffreyA - Thursday, December 10, 2020 - link

I'm not too sure how the implementation works, but I expect they're shuffling both threads through the decoder at roughly the same time. The decoder has four units (I think 1 complex and 3 simple). As far as I'm aware, that has stayed the same in both Zen 2 and 3.

mapesdhs - Thursday, December 10, 2020 - link

Ian, a question about Handbrake, though it may not apply to the type of test you used. I've read that Handbrake doing an h264 encode can only use 16 threads max. Does this mean that in theory one could run two separate h264 encodes on a 5950X and thus obtain a good overall throughput speedup? Have you tried such a thing? Or might this only work if it were possible to force one encode to only use the 16 threads of one 8c block (CCX?), and the other encode to use the rest? ie. so that the separate encodes are not fighting over the same cores or indeed the same CCX-shared L3? Is it possible to force this somehow? Also, if the claimed 16 thread limit for h264 is true, is there a performance difference for a single h264 encode between SMT on vs. off just in general? ie. with it on, is the OS smart enough to ensure that the 16 threads are spread across all the cores evenly rather than being scrunched onto fewer cores because reasons? If not, then turning SMT off might speed it up. Note that I'm using Windows for all this.

I don't know if any of this applies to h265, but atm the encoding I do is still 1080p. I did an analysis of all available Ryzen CPUs based on performance, power consumption and cost (I ruled out Intel partly due to the latter two factors but also because of a poor platform upgrade path) and found that although the 5900X scored well, overall it was beaten by the 2700X, mainly because the latter is so much cheaper. However, the 5950X would look a lot better if one could run two encodes on it at the same time without clashing, but review articles naturally never try this. I wish I could test it, but the only 16c system I have is a dual-socket S2011 setup with two 2560 v2s, so the separate CPUs introduce all sorts of other issues (NUMA and suchlike).

I found something similar a long time ago when I noticed one could run six separate Maya frame renders on a 24-CPU SGI rack Onyx (essentially one render per CPU board), compared to running a single render on a quad-CPU (single board) deskside Onyx, giving a good overall throughput increase (the renderer being limited to 4 CPUs per job). See:

http://www.sgidepot.co.uk/perfcomp_RENDER4_maya1.h...

Funny actually, re what you say about an overly good speedup perhaps implying a less than optimal core design. Something odd about SGIs is how many times on a multi CPU system one can obtain better results by using more threads than there are CPUs, bearing in mind MIPS CPUs from that era did not have SMT, ie. the CPUs kinda behave as if they do have SMT even though they don't. I found this behaviour occurred most for Blender and C-Ray.

So anyway, it would be great if it were possible to run two h264 encodes on a 5950X at the same time, but there's probably no point if the OS doesn't spread out the loads in a sensible manner, or if in that circumstance there isn't a way to force each encode to use a separate CCX.

All very specific to my use case of course, but I have hundreds of hours of material to convert, so the ability to get twice the throughput from a 5950X would make that CPU a lot more interesting; so far reviews I've read show it to be about 2x faster than the 2700X for h264 Handbrake (just one encode of course), but it costs 4.4x more, rather ruining the price/performance angle. And if it does work then I guess one could ask the same question of TR - could one run eight separate h264 encodes on a future Zen3 TR without the thread management being a total mess? :D I'm assuming it probably wouldn't be so good with the older Zen2 design given the split L3.

GeoffreyA - Sunday, December 13, 2020 - link

Interesting question. Would be nice if someone could give this a test on 16-core Ryzen or TR, and see what happens. Yesterday, I was able to take both FFmpeg and Handbrake up to 128 threads, and it does work; but, having only a 4-core, 4-thread CPU, can't comment.*

As for x264's performance limit, I'm not sure at what number of threads it begins to flag; but, quality wise, using too many (say, over 16 at 1080p) is not advisable. According to the x264 developers, vertical resolution / threads shouldn't fall below 40-50 and certainly not below 30.

https://forum.doom9.org/showthread.php?p=1213185#p...

forum.doom9.org/showthread.php?p=1646307#post1646307

More posts on high core counts:

forum.doom9.org/showthread.php?t=173277

forum.doom9.org/showthread.php?t=175766

* As far as I know, Windows schedules threads all right. From 1903, on Zen 2, one CCX is supposed to be filled up, then another. I imagine 16 threads will be spread across two CCXs in the 5950X. FFmpeg's --threads switch could prove useful too.

GeoffreyA - Sunday, December 13, 2020 - link

-threads, not --threads

Here are links set out better (thought they'd link in the comment):

https://forum.doom9.org/showthread.php?p=1213185#p...

https://forum.doom9.org/showthread.php?p=1646307#p...

https://forum.doom9.org/showthread.php?t=173277

https://forum.doom9.org/showthread.php?t=175766

deil - Sunday, December 13, 2020 - link

I wonder when SMT4 will hit the market: a model with 3 copies of most things on the die, in a ring configuration fp/int/fp/int, with cache inside the ring. ST would have a chance to use 2 FP modules for a single int processor part (when others don't use it, ofc).

This kind of setup would have very interesting performance numbers at least. I am not saying it's a good idea, but an interesting one for sure.

Machinus - Sunday, December 13, 2020 - link

This article omits one of the basic considerations in any manually-configured and custom-cooled desktop system: achieving uniform, predictable thermal behavior. Unless you are building servers to perform only one or two specific types of mathematical operations, and can build, configure, and stress test on those instruction types alone, you need high confidence that the chip will never exceed the thermal flux densities of the cooling system you built. Fixed-clock systems with a static number of available cores have much more consistent thermal performance than chips whose clocks, and number of threads, are free-floating. This reduces your peak flops, but it significantly extends system lifetime. HEDT and HPC systems have double or triple-digit core counts per socket in 2020; SMT is not worth paying the price of reduced hardware lifetime unless you are building extremely specialized calculation servers.