Supermicro's Twin: Two nodes and 16 cores in 1U

by Johan De Gelas on May 28, 2007 12:01 AM EST, posted in IT Computing

Twin Server: Concept

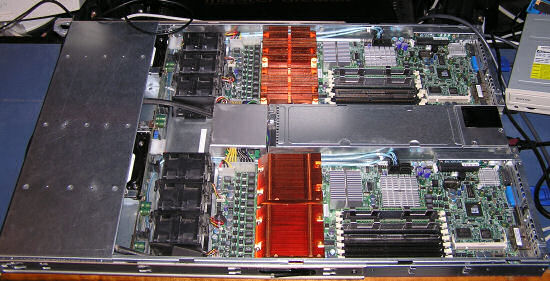

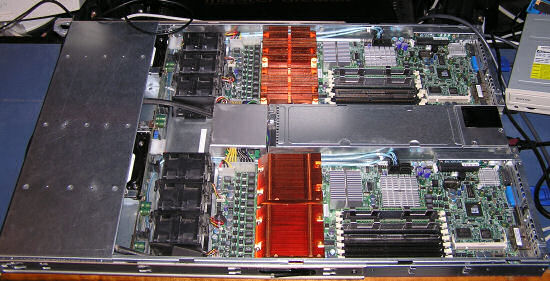

The big idea behind the Twin Server is that Supermicro puts two nodes in a single 1U pizza box. The first advantage is that processing power density is at least twice that of a typical 1U server, and even higher than that of many (much more expensive) blade systems.
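To put "at least twice as high" in rack-level terms, the sketch below counts cores in a full rack. It assumes a standard 42U rack filled entirely with servers (no switches or PDUs taking up space), two sockets per node, and quad-core Xeon 5320 CPUs, which gives the 16 cores per 1U from the title. The function name `cores_per_rack` is purely illustrative.

```python
# Rough core-density comparison for a fully populated rack.
# Assumptions (illustrative, not vendor specs): 42U rack, every unit
# holds servers, 2 sockets per node, a quad-core Xeon 5320 in each socket.
RACK_UNITS = 42
SOCKETS_PER_NODE = 2
CORES_PER_SOCKET = 4


def cores_per_rack(nodes_per_u: int,
                   rack_units: int = RACK_UNITS,
                   sockets_per_node: int = SOCKETS_PER_NODE,
                   cores_per_socket: int = CORES_PER_SOCKET) -> int:
    """Total cores when every rack unit holds `nodes_per_u` nodes."""
    return rack_units * nodes_per_u * sockets_per_node * cores_per_socket


typical_1u = cores_per_rack(nodes_per_u=1)  # one node per 1U box
twin_1u = cores_per_rack(nodes_per_u=2)     # two nodes per 1U box (Twin)

print(f"Typical 1U: {typical_1u} cores per rack")
print(f"Twin 1U:    {twin_1u} cores per rack ({twin_1u / typical_1u:.1f}x)")
```

Blade systems would need their own enclosure-level numbers, so they are left out of this simple model.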

TCO should be lowered further by powering both nodes from the same 900W power supply, which is claimed to be "more than 90% efficient".

Because it powers two nodes, the PSU should run at a load close to its point of maximum efficiency. The disadvantage is that the PSU is a single point of failure for both nodes. Nevertheless, the concept behind the Supermicro Twin should result in superior processing power density and performance/Watt, making it very attractive as an HPC cluster node, web server, or rendering node.
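The efficiency argument comes down to simple arithmetic: wall power equals the DC load divided by the PSU's efficiency, and everything above the DC load is lost as heat. The sketch below applies that formula to the 900W supply. The 600W combined DC load and the 0.80 comparison efficiency are hypothetical values chosen only for illustration, not measurements from this review.

```python
# Wall power drawn by a PSU: input = output / efficiency.
# The DC load and the 0.80 comparison efficiency are hypothetical values.
PSU_RATING_W = 900.0  # rated output of the shared Twin power supply


def wall_power(dc_load_w: float, efficiency: float) -> float:
    """AC power drawn from the wall to deliver dc_load_w at the given efficiency."""
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in (0, 1]")
    return dc_load_w / efficiency


dc_load = 600.0  # hypothetical combined DC load of both nodes, in watts
print(f"Load: {dc_load / PSU_RATING_W:.0%} of the PSU rating")

for eff in (0.90, 0.80):
    ac = wall_power(dc_load, eff)
    print(f"{eff:.0%} efficient: {ac:.0f} W from the wall, "
          f"{ac - dc_load:.0f} W lost as heat")
```

Real PSUs have an efficiency that varies with load, which is why keeping one supply near its sweet spot matters; this sketch simply treats efficiency as a fixed input.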

That is not all: the chassis is also priced very competitively. We did a quick comparison with the Dell PowerEdge 1950. Since Supermicro's big selling point is the flexibility it offers customers in configuring their servers, Dell, with its extremely optimized supply chain, direct sales model, and relatively customizable systems, is probably its most dangerous Tier-1 opponent.

Server Price Comparison

| Components | Supermicro Twin (May 2007) | Components | Dell PowerEdge 1950 (May 2007) |
|---|---|---|---|
| 6015-T chassis | $1,550 | Chassis (base configuration) | $3,610 |
| 2x Xeon 5320 | $1,000 | 1x Xeon 5320 | (included) |
| 4x 2GB FB-DIMM | $1,480 | 4x 1GB FB-DIMM | (included) |
| 4x Seagate ST3250820NS 250GB SATA NL35 | $340 | 2x 250GB SATA NL35 | (included) |
| Base subtotal | $4,370 | Base subtotal | $3,610 |
| 2x Xeon 5320 | $1,000 | 1x Xeon 5320 | $850 |
| Subtotal | $5,370 | Subtotal | $4,460 |
| | | Dell discount | ($1,400) |
| | | Subtotal after discount | $3,060 |
| Total, 2 systems | $5,370 | Total, 2 systems | $6,120 |
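The price comparison's subtotals can be checked with a few lines of arithmetic. The sketch below simply re-adds the listed prices. Treating the Dell base price as already including the first CPU, the memory, and the disks is an interpretation of the table, where those items carry no separate price; one Twin chassis counts as two systems because it holds two nodes.

```python
# Recompute the May 2007 price comparison from the table's line items.
# One Supermicro Twin chassis holds two nodes; the Dell column is per server.
supermicro_twin = {
    "6015-T chassis": 1550,
    "2x Xeon 5320": 1000,
    "4x 2GB FB-DIMM": 1480,
    "4x Seagate ST3250820NS 250GB": 340,
    "2x Xeon 5320 (extra)": 1000,
}

dell_1950 = {
    "Base configuration": 3610,  # assumed to include 1x Xeon 5320, 4x 1GB, 2x 250GB
    "1x Xeon 5320 (extra)": 850,
    "Dell discount": -1400,
}

twin_total = sum(supermicro_twin.values())  # one Twin = two nodes
dell_total = 2 * sum(dell_1950.values())    # two 1950s = two nodes
savings = dell_total - twin_total

print(f"Supermicro Twin (2 nodes): ${twin_total:,}")
print(f"2x Dell PowerEdge 1950:    ${dell_total:,}")
print(f"Difference: ${savings:,} ({savings / dell_total:.1%} of the Dell total)")
```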

While the difference ($750 for two nodes) isn't enormous, it is important to note that the Dell PowerEdge 1950 is one of the most aggressively priced servers out there, thanks to the huge discount. The idea behind the server is very attractive and the price is extremely competitive; these are reasons enough to see whether the Supermicro Twin 1U can deliver in practice too. But first, we'll take a look at the technical features of this server.

28 Comments

JohanAnandtech - Monday, May 28, 2007

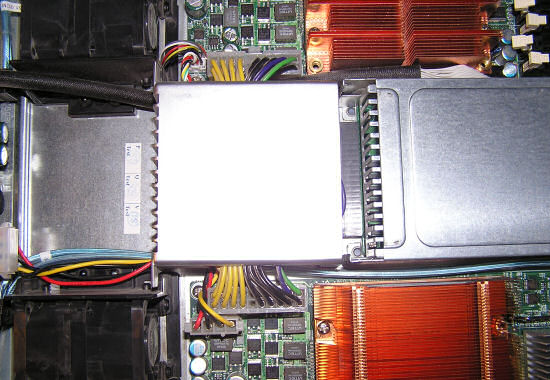

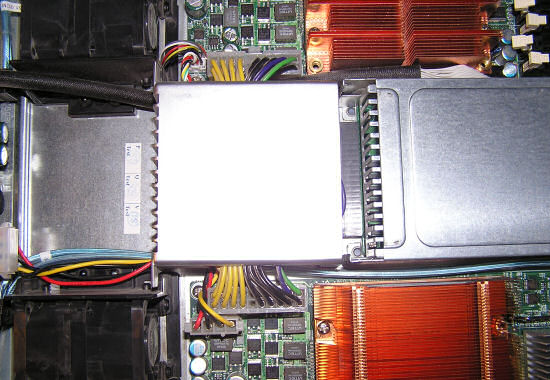

Those DIMM slots are empty :-)

yacoub - Monday, May 28, 2007

ohhh hahah thought they were filled with black DIMMs :D

yacoub - Monday, May 28, 2007

Also on page 8: You should remove that first comma. It was throwing me off because, the way it reads, it sounds like the 2U servers save about 130W, but then you get to the end of the sentence and realize you mean "in comparison with 2U servers, we save about 130W or about 30% thanks to Twin 1U". You could also say "Compared with 2U servers, we save..." to make the sentence even clearer.

Thanks for an awesome article, btw. It's nice to see these server articles from time to time, especially when they cover a product that appears to offer a solid TCO and compares strongly with the competition from big names like Dell.

JohanAnandtech - Monday, May 28, 2007

Fixed! Good point

gouyou - Monday, May 28, 2007

The part about InfiniBand's performance being much better as you increase the number of cores is really misleading.

The graph mixes cores and nodes, so you cannot tell anything from it. We are in an era where a server has 8 cores: the scaling is completely different, as it will depend less on the network. BTW, is the graph for single-core servers? Dual-core?

MrSpadge - Monday, May 28, 2007

Gouyou, there's a link called "this article" in the part on InfiniBand which answers your question. In the original article you can read that they used dual 3 GHz Woodcrests.

What's interesting is that the difference between InfiniBand and GigE is actually more pronounced for the dual-core Woodcrests than for the single-core 3.4 GHz P4s (at 16 nodes). The explanation given is that the faster dual-core CPUs need more communication to sustain performance. So it seems their algorithm uses no locality optimizations to exploit the much faster communication within a node.

@BitJunkie: I second your comment, very nice article!

MrS

BitJunkie - Monday, May 28, 2007

Nice article. I'm most impressed by the breadth and the detail you drilled into, and also by the clarity with which you presented your thinking and results. It's always good to be stretched, and this is a great example of how to approach things in a structured, logical way.

Don't mind the "it's an enthusiast site" comments. Some people will be stepping outside their comfort zone with this and won't thank you for it ;)

JohanAnandtech - Monday, May 28, 2007

Thanks, very encouraging comment.

And I guess it doesn't hurt if the "enthusiast" is reminded that "PCs" can also be fascinating in roles other than "hardcore gaming machine" :-). Many of my students need the same reminder: being an ITer is more than booting Windows and your favorite game. My 2-year-old daughter can do that ;-)

yyrkoon - Monday, May 28, 2007

It is, however, nice to learn about InfiniBand. This is a technology I have been interested in for a while now, and I was under the impression it was not going to be implemented until PCIe v2.0 (maybe I missed something here).

I would still rather see this technology in desktop-class PCs, and if this is yet another enterprise-driven technology, then people such as myself, who were hoping to use it for decent home networking (remote storage), are once again left out in the cold.

yyrkoon - Monday, May 28, 2007

And I am sure every gamer out there knows what iSCSI *is* . . .

Even in 'IT', a 16-core 1U rack server is a specialty system, and while such systems may be semi-common in load balancing/failover scenarios (or maybe even used extensively in parallel processing, yes, and even more possible uses . . .), they are still not all that common compared to the 'standard' server. Recently, a person I know deployed 40k desktops/30k servers for a large company, and wouldn't you know it, not one had more than 4 cores . . . and I have personally contracted work from TV/radio stations (and even the odd small ISP), and outside of the odd 'Toaster', most machines in these places barely use 1 core.

I also find technologies such as 802.3ad link aggregation, iSCSI, AoE, etc. interesting, and sometimes like playing around with things like openMosix or the latest/hottest Linux distro, but at the end of the day, other than experimentation, these things typically do not entertain me. For me, most of the above, and many other technologies, are just a means to an end, not entertainment.

Maybe it is enjoyable staring at a machine of this type without being able to use it to its full potential outside of the workplace? Personally I would not know, and honestly I really do not care, but if this is the case, perhaps you need to take notice of your 2-year-old daughter and relax once in a while.

The point here? The point being: perhaps *this* 'gamer' you speak of knows a good bit more about 'IT' than you give him credit for, and maybe even makes a fair amount of cash at the end of the day while doing so. Or maybe I am a *real* hardware enthusiast, who would rather be reading about technology than yet another 'product review'. Especially since any person worth their pay grade in IT should already know beforehand how this system (or anything like it) is going to perform.