OCZ Vertex 3 (240GB) Review

by Anand Lal Shimpi on May 6, 2011 1:50 AM EST

Three months ago we previewed the first client-focused SF-2200 SSD: OCZ's Vertex 3. The 240GB sample OCZ sent for the preview was four firmware revisions older than what ended up shipping to retail last month, but we hoped that the preview numbers were indicative of final performance.

The first drives off the line when OCZ went to production were 120GB capacity models. These drives have 128GiB of NAND on board and 111GiB of user accessible space. The remaining 12.7% is used for redundancy in the event of NAND failure and as spare area for bad block allocation and block recycling.
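The 12.7% figure follows from the two capacities quoted above. As a quick sanity check, here is a minimal Python sketch. It assumes the advertised 120GB is counted in decimal gigabytes (10^9 bytes) while the NAND is counted in binary gibibytes (2^30 bytes); the function name is made up for illustration.

```python
GB = 10**9    # decimal gigabyte, assumed for the advertised capacity
GIB = 2**30   # binary gibibyte, assumed for the raw NAND capacity

def spare_area(advertised_gb, raw_nand_gib):
    """Return (user capacity in GiB, fraction of raw NAND held back)."""
    user_gib = advertised_gb * GB / GIB
    return user_gib, 1 - user_gib / raw_nand_gib

user_gib, spare = spare_area(120, 128)
print(f"{user_gib:.1f} GiB user space, {spare:.1%} spare area")
```

Under those assumptions the 120GB drive works out to roughly 111.8GiB of user space and about 12.7% held in reserve.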

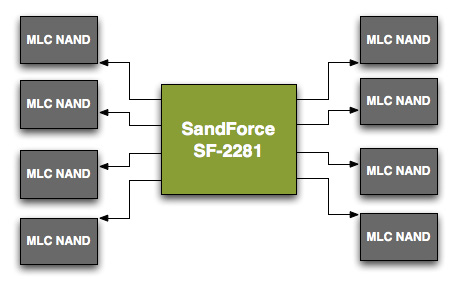

Unfortunately the 120GB models didn't perform as well as the 240GB sample we previewed. To understand why, we need to understand a bit about basic SSD architecture. SandForce's SF-2200 controller has 8 channels that it can access concurrently. It looks sort of like this:

Each arrowed line represents a single 8-bit channel. In reality, SF's NAND channels are routed from one side of the chip, so on shipping hardware you'll see all NAND devices to the right of the controller.

Even though there are 8 NAND channels on the controller, you can put multiple NAND devices on a single channel. Two NAND devices on the same channel can't be actively transferring data at the same time. Instead, one chip is accessed while the other is either idle or busy with internal operations.

When you read from or write to NAND you don't access the pages directly. Instead you deal with an intermediate register that holds the data as it comes from or goes to a page in NAND. Reading or programming is a multi-step process that doesn't complete in a single cycle. Thus you can hand off a read request to one NAND device and then, while it's fetching the data from an internal page, go off and program a separate NAND device on the same channel.

Because of this parallelism that's akin to pipelining, with the right workload and a controller that's smart enough to interleave operations across NAND devices, an 8-channel drive with 16 NAND devices can outperform the same drive with 8 NAND devices. Note that the advantage can't be double since ultimately you can only transfer data to/from one device at a time, but there's room for a not insignificant improvement. Confused?

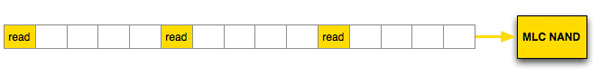

Let's look at a hypothetical SSD where a read operation takes 5 cycles. With a single die per channel, an 8-byte wide data bus and no interleaving, that gives us a peak bandwidth of 8 bytes every 5 clocks. With a large workload, after 15 clock cycles we could get at most 24 bytes of data from this NAND device.

Hypothetical single channel SSD, 1 read can be issued every 5 clocks, data is received on the 5th clock

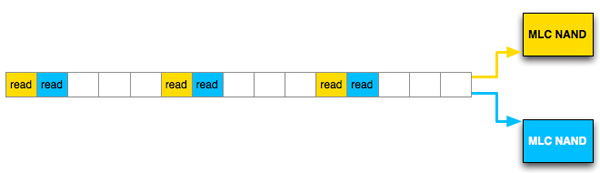

Let's take the same SSD, with the same latency, but double the number of NAND devices per channel and enable interleaving. Assuming we have the same large workload, after 15 clock cycles we would've read 40 bytes, an increase of roughly 67%.

Hypothetical single channel SSD, 1 read can be issued every 5 clocks, data is received on the 5th clock, interleaved operation

This example is overly simplified and it makes a lot of assumptions, but it shows you how you can make better use of a single channel through interleaving requests across multiple NAND die.
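The arithmetic behind the 24 byte and 40 byte figures can be reproduced with a small Python sketch. This is a toy model of the hypothetical channel above, not of real SandForce hardware: it assumes each device completes one read every 5 clocks and that each additional device starts one clock later than the previous one so transfers never collide on the bus. The function name and that one-clock stagger are assumptions made for the example.

```python
def bytes_read(total_clocks, read_latency=5, devices=1, bus_bytes=8):
    """Bytes delivered over one channel within total_clocks.

    Toy model: every device finishes one read per read_latency clocks,
    and device d issues its first read d clocks after device 0 so that
    no two devices use the bus on the same clock.
    """
    assert devices <= read_latency, "more devices than free bus slots"
    total = 0
    for d in range(devices):
        usable = total_clocks - d  # clocks left after the staggered start
        if usable > 0:
            total += (usable // read_latency) * bus_bytes
    return total

single = bytes_read(15, devices=1)
interleaved = bytes_read(15, devices=2)
print(single, interleaved, f"{interleaved / single - 1:.0%}")
```

With one device the model returns 24 bytes after 15 clocks, and with two interleaved devices it returns 40 bytes. In this model the gain stops once there are as many devices as clocks in a read, since the bus is then busy on every cycle.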

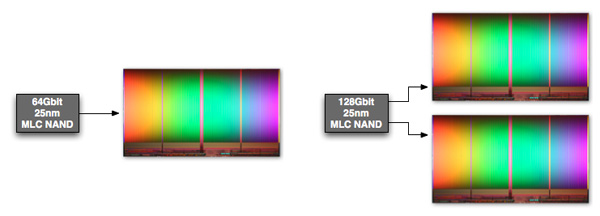

The same sort of parallelism applies within a single NAND device. The whole point of the move to 25nm was to increase NAND density, so you can now get a 64Gbit NAND device with only a single 64Gbit die inside. If you need more than 64Gbit per device, however, you have to bundle multiple die in a single package. Just as we saw at the 34nm node, it's possible to offer configurations with 1, 2 and 4 die in a single NAND package. With multiple die in a package, it's possible to interleave read/program requests within the individual package as well. Again you don't get 2 or 4x performance improvements since only one die can be transferring data at a time, but interleaving requests across multiple die does help fill any bubbles in the pipeline, resulting in higher overall throughput.

Intel's 128Gbit 25nm MLC NAND features two 64Gbit die in a single package

Now that we understand the basics of interleaving, let's look at the configurations of a couple of Vertex 3s.

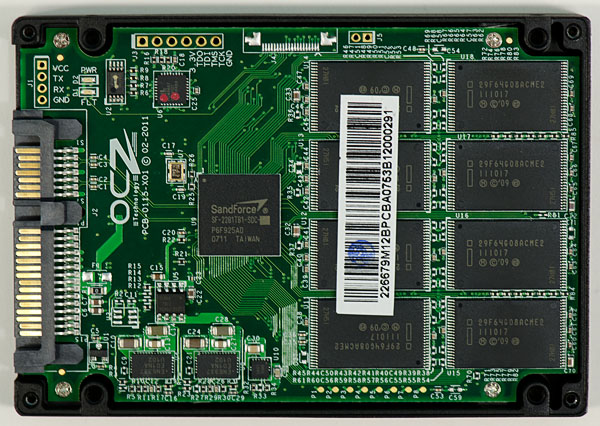

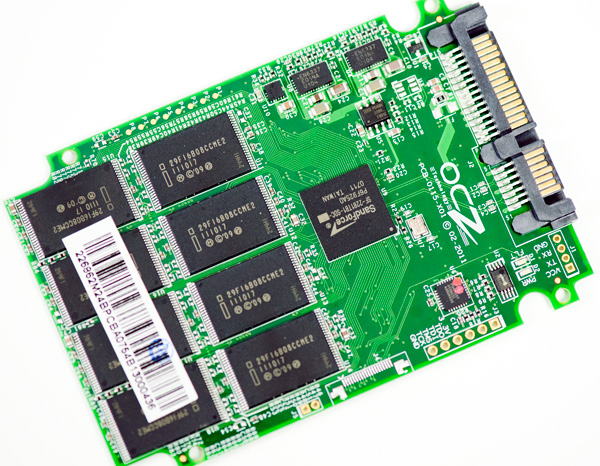

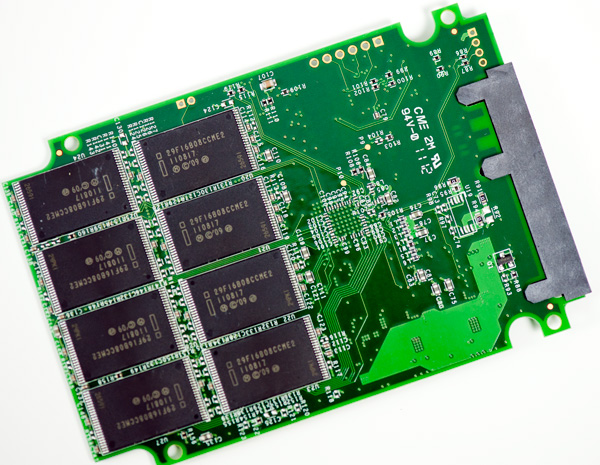

The 120GB Vertex 3 we reviewed a while back has sixteen NAND devices, eight on each side of the PCB:

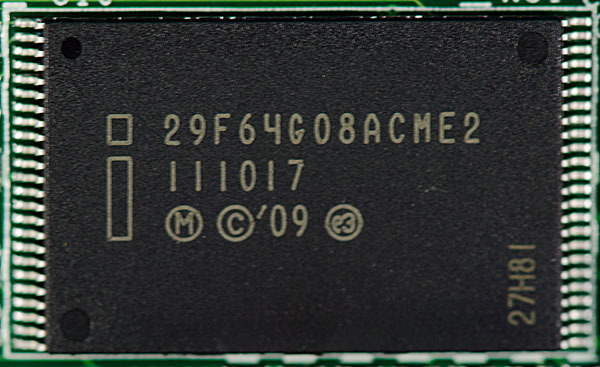

These are Intel 25nm NAND devices. Looking at the part number tells us a little bit about them.

You can ignore the first three characters in the part number; they tell you that you're looking at Intel NAND. Characters 4 - 6 (if you sin and count at 1) indicate the density of the package. In this case 64G means 64Gbits or 8GB. The next two characters indicate the device bus width (8 bits). Now the ninth character is the important one: it tells you the number of die inside the package. These parts are marked A, which corresponds to one die per device. The second to last character is also important; here E stands for 25nm.

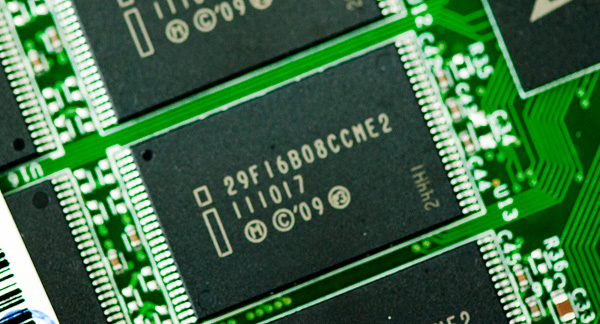

Now let's look at the 240GB model:

Once again we have sixteen NAND devices, eight on each side. OCZ standardized on Intel 25nm NAND for both capacities initially. The density string on the 240GB drive is 16B for 16Gbytes (128 Gbit), which makes sense given the drive has twice the capacity.

Look at the ninth character on these chips and you see the letter C, which in Intel NAND nomenclature stands for 2 die per package (J is for 4 die per package if you were wondering).
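The part number layout described above can be turned into a small decoder. The Python sketch below only covers the fields and letters mentioned in this article (density in characters 4 - 6, bus width in characters 7 - 8, die count in the ninth character, process in the second to last character). The two example strings are illustrative, assembled to match that layout rather than read off the chips, so check them against the markings on real hardware.

```python
# Die-count letters named in the article; other letters are not covered here.
DIE_PER_PACKAGE = {"A": 1, "C": 2, "J": 4}

def decode_part_number(part):
    """Split an Intel NAND part number using the layout described above."""
    density = part[3:6]                    # characters 4-6, e.g. "64G" or "16B"
    value, unit = int(density[:2]), density[2]
    if unit == "G":                        # "G" suffix: value is in Gbit
        gbit = value
    elif unit == "B":                      # "B" suffix: value is in GBytes
        gbit = value * 8
    else:
        raise ValueError(f"unknown density unit {unit!r}")
    return {
        "density_gbit": gbit,
        "bus_width": part[6:8],            # characters 7-8
        "die_per_package": DIE_PER_PACKAGE[part[8]],  # ninth character
        "is_25nm": part[-2] == "E",        # second to last character
    }

# Hypothetical example strings that follow the described layout.
print(decode_part_number("29F64G08AAME1"))
print(decode_part_number("29F16B08CAME1"))
```

Dividing the decoded density by the die count gives 64Gbit per die for both strings, which matches the single-die and dual-die packages described for the two drives.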

While OCZ's 120GB drive can interleave read/program operations across two NAND die per channel, the 240GB drive can interleave across a total of four NAND die per channel. The end result is a significant improvement in performance as we noticed in our review of the 120GB drive.

OCZ Vertex 3 Lineup

| Specs (6Gbps) | 120GB | 240GB | 480GB |
|---|---|---|---|
| Raw NAND Capacity | 128GB | 256GB | 512GB |
| Spare Area | ~12.7% | ~12.7% | ~12.7% |
| User Capacity | 111.8GB | 223.5GB | 447.0GB |
| Number of NAND Devices | 16 | 16 | 16 |
| Number of die per Device | 1 | 2 | 4 |
| Max Read | Up to 550MB/s | Up to 550MB/s | Up to 530MB/s |
| Max Write | Up to 500MB/s | Up to 520MB/s | Up to 450MB/s |
| 4KB Random Read | 20K IOPS | 40K IOPS | 50K IOPS |
| 4KB Random Write | 60K IOPS | 60K IOPS | 40K IOPS |
| MSRP | $249.99 | $499.99 | $1799.99 |
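The raw capacity and die counts in the lineup are consistent with each other, which a few lines of Python can confirm. The sketch assumes 64Gbit per die (as with the 25nm parts discussed earlier) and an even spread of die across the controller's 8 channels; the even spread is an assumption, and the function name is made up for the example.

```python
CHANNELS = 8    # SF-2200 channel count
DEVICES = 16    # NAND packages on every Vertex 3 capacity
DIE_GBIT = 64   # assumed density of one 25nm die

def lineup(die_per_device):
    """Return (raw NAND capacity in GB, die per channel) for one model."""
    total_die = DEVICES * die_per_device
    raw_gb = total_die * DIE_GBIT // 8        # Gbit -> GB
    die_per_channel = total_die // CHANNELS   # assumes an even spread
    return raw_gb, die_per_channel

for label, die_per_device in [("120GB", 1), ("240GB", 2), ("480GB", 4)]:
    raw_gb, per_channel = lineup(die_per_device)
    print(f"{label}: {raw_gb}GB raw NAND, {per_channel} die per channel")
```

This gives 2, 4 and 8 die per channel for the three capacities, matching the two and four die per channel quoted for the 120GB and 240GB drives.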

The big question we had back then was how much of the 120/240GB performance delta came from slower final firmware vs. a lack of physical die. With a final, shipping 240GB Vertex 3 in hand I can say that the performance is identical to our preview sample - in other words the performance advantage is purely due to the benefits of intra-device die interleaving.

If you want to skip ahead to the conclusion, feel free to. The results on the following pages are nearly identical to what we saw in our preview of the 240GB drive. I won't be offended :)

The Test

| Component | Configuration |
|---|---|
| CPU | Intel Core i7 965 running at 3.2GHz (Turbo & EIST Disabled); Intel Core i7 2600K running at 3.4GHz (Turbo & EIST Disabled) - for AT SB 2011, AS SSD & ATTO |
| Motherboard | Intel DX58SO (Intel X58); Intel H67 Motherboard |
| Chipset | Intel X58 + Marvell SATA 6Gbps PCIe; Intel H67 |
| Chipset Drivers | Intel 9.1.1.1015 + Intel IMSM 8.9; Intel 9.1.1.1015 + Intel RST 10.2 |
| Memory | Qimonda DDR3-1333 4 x 1GB (7-7-7-20) |
| Video Card | eVGA GeForce GTX 285 |
| Video Drivers | NVIDIA ForceWare 190.38 64-bit |
| Desktop Resolution | 1920 x 1200 |
| OS | Windows 7 x64 |

90 Comments

tech6 - Friday, May 6, 2011

Thanks for another thorough review. I noticed that the Samsung 470 (and its OEM equivalent) are getting very popular. Any chance of a review?

Anand Lal Shimpi - Friday, May 6, 2011

I've been meaning to do a roundup focusing on 3Gbps drives and diving deeper on the 470, it's just a matter of finding the time. It's definitely on the list though.

Take care,

Anand

darwinosx - Friday, May 6, 2011

With Samsung's drive division having been sold to Seagate you have to wonder what happens to service and support of Samsung branded drives.

TotalLamer - Friday, May 6, 2011

...any chance the Vertex 3s won't spontaneously brick themselves whenever they damned well choose like the Vertex 2s did? Such horridly unreliable drives.

No Intel, no care.

jmunjr - Friday, May 6, 2011

A lot of ultraportable laptops use 7mm height drives. From what I have read the Vertex series are 9.5mm and cannot be easily modded to fit. The Crucial however have a spacer that can be easily removed (though it voids the warranty). It really would be nice to have more SSD choices in the 7mm height option.

jcompagner - Friday, May 6, 2011

I think also a warning must be given, because they really don't work quite right out of the box with everything default.

I have a Dell XPS17 (L702x) and the Vertex 3 240GB, and installing windows 7 (sp1) is quite hard. The default AHCI driver of windows really doesn't work with the Vertex 3. So yes you can install it in sata mode that kind of works but you want AHCI, And setting that after install is not that easy (you really have to make sure that the latest intel drivers are there and then tweak some registry setting)

Best thing to go around this is to use the Intel F6 driver right from the installer of Win7. That will help and then you can install it at once.

The thing is that OCZ sees this as a problem with drivers or the system, i completely don't agree with this, there are many complaining constantly on the forum because of this. And the intel drive that i also have never have these problems they install just fine. So it is really OCZ which should look into why they are not compatible.

Besides that after you have taken this hurdle you have of course the LPM registry tweak you have to do to kill LPM mode. But this is not only a OCZ/Vertex problem also Crucial (C300) has this same problem. But again with the Intel SSD i haven't seen this problem also.

I just think that the Vertex doesn't behave completely correct on all the SATA commands that are out there. I really hope for them that they can fix that (they get a bit of bad name i know enough forums that really don't recommend OCZ because of all this)

But after all these install troubles i must say it is fast and works quite well.

I don't really like that now a MAXIOPS version is coming also for the 240GB! I am curious of how much faster that will be

One question: If the number of die's tells everything about the speeds, why is the 480GB then slower? (at least on paper)

Ammaross - Friday, May 6, 2011

"One question: If the number of die's tells everything about the speeds, why is the 480GB then slower? (at least on paper)"

You didn't read the interleaving example then. If 2 die per chip, and 2 chips per channel fill up 4 of the 5 theoretical "slots" in the 5-clock example, imagine what 4 dies per chip and 2 chips per channel does trying to cram/schedule 8 dies into 5 slots? Then think what happens if all requests are going to one or two die on the same package? It's just a matter of clogging the pipes or burning slots due to a package already processing a request. You can think of it like the 8x/8x/4x SLI/CF situation with P67 where that 3rd gfx card just doesn't help much at all due to being data-starved, or the overhead of SLI/CF in itself.

jcompagner - Saturday, May 7, 2011

but still, why is it even slower and not the same speed as the 240GB then?

darwinosx - Friday, May 6, 2011

It seems from public comments on New Egg and elsewhere that there are a lot of unhappy owners of this drive. High failure rate and many people continue to comment on how poor OCZ's tech support is which is the opposite of what this review says.

Lingyis - Friday, May 6, 2011

is there something anandtech can test about reliability? i had 3 OCZ vertex from a few years ago and 2 of them had bad sectors after about 6 months of use. whatever time i saved on the SSD was more than wiped out by my time having to reinstall software, and possibly each time it has to run chkdsk related commands. i have been quite reluctant to use SSD since--i went with good ol' HDD in my new laptop and chkdsk has yet to reveal any errors.

some time ago, i read on this site that officially, from intel, failure rates are something like 1.2% for non-Intel drives and 0.5% for intel drives? obviously, massive data is required to get these kind of statistics, but if you can figure out some way of testing reliability on these SSD, that'll be much more important to people like me as SSD is fast enough for most practical purposes. perhaps you can run these drives intensely over a period of 30 days (probably more) and see if any data corruption sets in. if there's a way to limit read/write to a certain region of the SSD then better obviously, but the controller i suppose might have a say in that.