The SandForce Roundup: Corsair, Kingston, Patriot, OCZ, OWC & MemoRight SSDs Compared

by Anand Lal Shimpi on August 11, 2011 12:01 AM EST

It's a depressing time to be covering the consumer SSD market. Although performance is higher than it has ever been, we're still seeing far too many compatibility and reliability issues from all of the major players. Intel used to be our safe haven, but even the extra reliable Intel SSD 320 is plagued by a firmware bug that may crop up unexpectedly, limiting your drive's capacity to only 8MB. Then there are the infamous BSOD issues that affect SandForce SF-2281 drives like the OCZ Vertex 3 or the Corsair Force 3. Despite OCZ and SandForce believing they were on to the root cause of the problem several weeks ago, there are still reports of issues. I've even been able to duplicate the issue internally.

It's been three years since the introduction of the X25-M and SSD reliability is still an issue, but why?

For the consumer market it ultimately boils down to margins. If you're a regular SSD maker then you don't make the NAND and you don't make the controller.

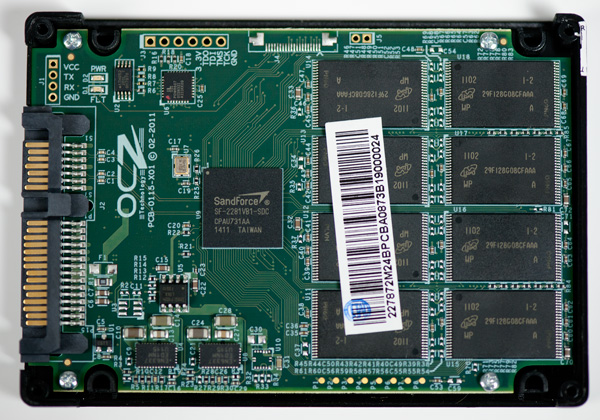

A 120GB SF-2281 SSD uses 128GB of 25nm MLC NAND. The NAND market is volatile but a 64Gb (8GB) 25nm NAND die will set you back somewhere from $10 - $20, and you need sixteen of them. If we assume the best case scenario that's $160 for the NAND alone. Add another $25 for the controller and you're up to $185 without the cost of the other components, the PCB, the chassis, packaging and vendor overhead. Let's figure another 20% for everything else needed for the drive, bringing us up to $222. You can buy a 120GB SF-2281 drive in e-tail for $250, putting the gross profit on a single SF-2281 drive at $28 or 11%.

Even if we assume I'm off in my calculations and the profit margin is 20%, that's still not a lot to work with.
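As a rough sketch of that arithmetic, the short Python snippet below recomputes the per-drive cost and gross margin. The function name and parameter names are illustrative only, and the inputs are the estimates quoted above rather than real vendor pricing. An uplift of 20% on the $185 silicon cost gives the $222 total used above; at 15% the total would be closer to $213 and the margin closer to 15%.

```python
def bom_estimate(nand_dies=16, die_cost=10.0, controller_cost=25.0,
                 overhead_fraction=0.20, street_price=250.0):
    """Rough per-drive cost and gross margin from the article's estimates.

    All defaults are assumptions taken from the text, not vendor data.
    """
    silicon = nand_dies * die_cost + controller_cost  # NAND plus controller
    total = silicon * (1 + overhead_fraction)         # PCB, chassis, packaging, overhead
    profit = street_price - total
    return total, profit, profit / street_price


total, profit, margin = bom_estimate()
print(f"cost ${total:.0f}, profit ${profit:.0f}, margin {margin:.0%}")
```

Changing `die_cost` to the upper end of the $10 - $20 range pushes the estimated cost well past the $250 street price, which is why the best-case figure is used here.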

Things aren't that much easier for the bigger companies either. Intel has the luxury of (sometimes) making both the controller and the NAND. But the amount of NAND you need for a single 120GB drive is huge. Let's do the math.

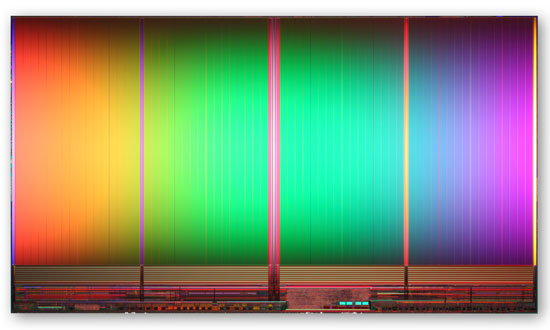

8GB IMFT 25nm MLC NAND die - 167mm²

The largest 25nm MLC NAND die you can get is an 8GB capacity. A single 8GB 25nm IMFT die measures 167mm². That's bigger than a dual-core Sandy Bridge die and 77% the size of a quad-core SNB. And that's just for 8GB.

A 120GB drive needs sixteen of these dies for a total area of 2672mm². Now we're at over 12 times the wafer area of a single quad-core Sandy Bridge CPU. And that's just for a single 120GB drive.

This 25nm NAND is built on 300mm wafers, just like modern microprocessors, giving us 70685mm² of area per wafer. Assuming you can use every single square mm of the wafer (which you can't), that works out to be 26 120GB SSDs per 300mm wafer. Wafer costs are somewhere in the four-digit range; let's assume $3000. That's $115 worth of NAND for a drive that will sell for $230, and we're not including controller costs, the other components on the PCB, the PCB itself, the drive enclosure, shipping and profit margins. Intel, as an example, likes to maintain gross margins north of 60%. For its consumer SSD business to not be a drain on the bottom line, sacrifices have to be made. While Intel's SSD validation is believed to be the best in the industry, it's likely not as good as it could be as a result of pure economics. So mistakes are made and bugs slip through.
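The wafer math can be sketched the same way. The snippet below is a minimal, idealized calculation using only the figures from the text: a 167mm² die, sixteen dies per drive, a 300mm wafer and an assumed $3000 wafer cost. The names are hypothetical, and the model deliberately ignores edge loss, scribe lines and defective dies, so the real cost per drive would be higher.

```python
import math

DIE_AREA_MM2 = 167.0       # 8GB IMFT 25nm MLC die, per the article
DIES_PER_DRIVE = 16        # 128GB of raw NAND for a 120GB drive
WAFER_DIAMETER_MM = 300.0
WAFER_COST_USD = 3000.0    # assumed wafer cost from the article, not a quoted price


def nand_cost_per_drive(wafer_cost=WAFER_COST_USD):
    """Idealized NAND cost per drive: every square mm of the wafer is usable."""
    wafer_area = math.pi * (WAFER_DIAMETER_MM / 2) ** 2
    drive_area = DIE_AREA_MM2 * DIES_PER_DRIVE
    drives_per_wafer = int(wafer_area // drive_area)  # whole drives only
    return wafer_area, drive_area, drives_per_wafer, wafer_cost / drives_per_wafer


wafer_area, drive_area, drives, cost = nand_cost_per_drive()
print(f"{wafer_area:.0f} mm2 per wafer, {drive_area:.0f} mm2 per drive")
print(f"{drives} drives per wafer, ${cost:.0f} of NAND per drive")
```

Passing a different `wafer_cost` shows how sensitive the per-drive figure is to that single assumption.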

I hate to say it but it's just not that attractive to be in the consumer SSD business. When these drives were selling for $600+ things were different, but it's not too surprising to see that we're still having issues today. What makes it even worse is that these issues are usually caught by end users. Intel's microprocessor division would never stand for the sort of track record its consumer SSD group has delivered in terms of show-stopping bugs in the field, and Intel has one of the best track records in the industry!

It's not all about money though. Experience plays a role here as well. If you look at the performance leaders in the SSD space, none of them had any prior experience in the HDD market. Three years ago I would've predicted that Intel, Seagate and Western Digital would be duking it out for control of the SSD market. That obviously didn't happen and as a result you have a lot of players that are still fairly new to this game. It wasn't too long ago that we were hearing about premature HDD failures due to firmware problems, and I suspect it'll be a few more years before the current players get to where they need to be. Samsung may be one to watch here going forward as it has done very well in the OEM space. Apple had no issues adopting Samsung controllers, while it won't go anywhere near Marvell or SandForce at this point.

90 Comments

Ipatinga - Thursday, August 11, 2011

So, the Corsair Force GT is really going against OCZ Vertex 3? I thought it was against Vertex 3 Max IOPS. In this case, the Corsair Force 3 is going after Agility 3?

And Corsair Performance 3 is going after Solid 3?

Thanks :)

Would like to hear more about NAND Flash that is Async and Sync and Toggle.

bob102938 - Thursday, August 11, 2011

There are some factors that were not considered on the first page of the article. The number of dies per wafer is important, but you are forgetting the cost of producing a flash memory wafer vs a VLSI wafer. Flash memory is a ~20 layer process that has margins for error which can be worked around. VLSI is a 60+ layer process that has 0 margin for error. Producing flash memory wafers is more than an order of magnitude cheaper than producing the same-size VLSI wafer. Additionally, turnaround time on a flash wafer can be achieved in ~20 days, whereas a VLSI wafer can require 3 months. Also, the internal cost of a 300mm flash memory wafer is more like $1000. A VLSI wafer is around $8000.

philosofool - Thursday, August 11, 2011

I don't want to blame the victims, end users. Obviously, manufacturers have a responsibility to QA. Still, when you look at the market forces here, it seems obvious that market forces are driving the problem.

Manufacturer makes the COOL drive that gets the best performance marks of any drive out there. One year later, the COOLER drive is released. No one wants a COOL drive anymore. Plus, the margin making COOL drives is so small, you can't drop your price on a COOL drive to make it an attractive "midrange" option. So you have to start developing a new controller to make something downright freezing.

Because there's such an emphasis on performance, controllers and the drives they run become obsolete before a water-tight reliable version of the controller can be made. Of course, they're not really obsolete--there's nothing wrong with the X25-M controller--but they can't compete in a market with drives that show twice the random read performance of an unreliable competitor.

Constant R&D on new controllers and the demand for performance mean that reliability takes a backseat. You can't sell COOL drives as long as someone makes a COOLER drive, even if cooler drives have reliability problems. Think about yourself: would you buy an X25-M knowing that you could get a Vertex 3 instead?

Bannon - Thursday, August 11, 2011

I built a system on an Asus P8Z68 Deluxe motherboard and used two Intel 510 250GB drives with it. One is the system drive and the other is the data drive, with firmwares PWG2 and PWG4 respectively. To date I have not experienced a BSOD BUT my system drive will drop from 6Gbps to 3Gbps for no apparent reason and stay there until I power the system off. My data drive is rock solid at 6Gbps and stays there. I've just started working with Intel so I don't know where that is going to lead. Hopefully it ends up with a new drive with the latest firmware and 6Gbps performance. Given my druthers I'd rather have this problem than the SandForce BSODs but I wanted to point out that everything isn't perfect in Intel-land.

Coup27 - Thursday, August 11, 2011

Anand, can we ever expect a 470 review?

nish0323 - Thursday, August 11, 2011

or am I the only one about the fact that the OWC drive is the ONLY one with a 5 year warranty on it!! That's nuts... they actually back up the claim of their SSD drive longevity by giving you such a long warranty. I love SSDs.

OWC Grant - Friday, August 12, 2011

Glad you noticed that warranty term because it's somewhat related to topic of this article. I've been in direct contact with Anand on this as the tone of article is all-encompassing and I wanted to shed some light on that from our perspective.

While many SF-based SSDs share firmware, not all hardware is the same. Our SSDs have subtle design and/or component differences which is what we feel reduces or eliminates our products' susceptibility to the BSOD issue.

The honest truth is we have not been able to create a BSOD issue here with our SSDs using the same procedures that caused other brands' SSDs to experience BSOD. Nor have we received or read one direct report of such an occurrence using our drives.

And while we cut our teeth, so to speak, in the Mac industry, PLENTY of PC users have our SSDs in their systems, and we do extensive testing on a variety of motherboards/system configs to ensure long term reliable operation.

More supportive perhaps is the fact that we've had other brand users who experienced BSOD, but after buying our SSD, they reported back that it eliminated any issues they were experiencing.

ckryan - Thursday, August 11, 2011

should be getting more reliable, not less. As profit margins get slimmer and slimmer, shouldn't manufacturers be producing more reliable drives? Also, Intel might be making less money per drive, but surely their enterprise sales require the same levels of validation (required previously).

Conscript - Thursday, August 11, 2011

am I nuts after reading multiple reviews from Anand as well as elsewhere, that I keep thinking I'm best off with a 256GB Crucial M4? I've had my 160GB X25-M for a while now, and think I'm going to hand it down to the wifey.

Bannon - Thursday, August 11, 2011

I had a 256GB M4 which worked fine except it would BSOD if I let my system sleep.