The SandForce Roundup: Corsair, Kingston, Patriot, OCZ, OWC & MemoRight SSDs Compared

by Anand Lal Shimpi on August 11, 2011 12:01 AM EST

It's a depressing time to be covering the consumer SSD market. Although performance is higher than it has ever been, we're still seeing far too many compatibility and reliability issues from all of the major players. Intel used to be our safe haven, but even the extra reliable Intel SSD 320 is plagued by a firmware bug that may crop up unexpectedly, limiting your drive's capacity to only 8MB. Then there are the infamous BSOD issues that affect SandForce SF-2281 drives like the OCZ Vertex 3 or the Corsair Force 3. Despite OCZ and SandForce believing they were on to the root cause of the problem several weeks ago, there are still reports of issues. I've even been able to duplicate the issue internally.

It's been three years since the introduction of the X25-M and SSD reliability is still an issue, but why?

For the consumer market it ultimately boils down to margins. If you're a regular SSD maker then you don't make the NAND and you don't make the controller.

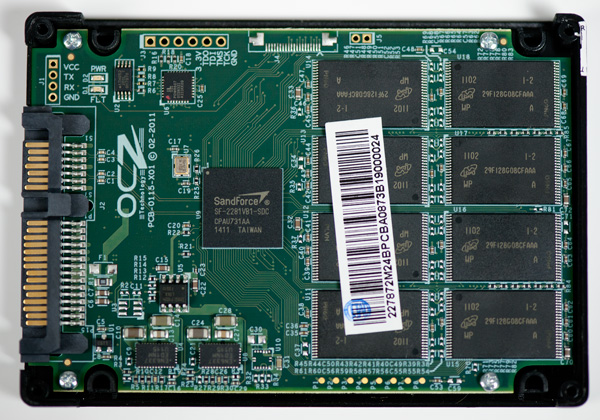

A 120GB SF-2281 SSD uses 128GB of 25nm MLC NAND, which means sixteen 64Gb (8GB) dies. The NAND market is volatile, but a 64Gb 25nm NAND die will set you back somewhere between $10 and $20. If we assume the best-case scenario, that's $160 for the NAND alone. Add another $25 for the controller and you're up to $185 without the cost of the other components, the PCB, the chassis, packaging and vendor overhead. Let's figure roughly another 20% for everything else needed for the drive, bringing us up to $222. You can buy a 120GB SF-2281 drive in e-tail for $250, putting the gross profit on a single SF-2281 drive at $28, or 11%.

Even if we assume I'm off in my calculations and the profit margin is 20%, that's still not a lot to work with.
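The back-of-the-envelope math for the SF-2281 drive can be written out as a small calculation. This is only a sketch: the function name and its parameters are made up for illustration, and the dollar figures are the rough estimates quoted above rather than real bill-of-materials data. The overhead fraction is left as a parameter; 0.20 is the value that reproduces the $222 total from the $185 subtotal.

```python
def estimate_gross_margin(
    nand_gb=128,             # raw NAND on a 120GB SF-2281 drive
    die_gbit=64,             # capacity of one 25nm MLC die, in gigabits
    cost_per_die=10.0,       # best case of the $10 - $20 range
    controller_cost=25.0,
    overhead_fraction=0.20,  # assumption: everything else, as a share of NAND + controller
    retail_price=250.0,
):
    """Rough per-drive cost and gross margin (hypothetical helper)."""
    dies = (nand_gb * 8) // die_gbit      # gigabytes -> gigabits, then dies
    nand_cost = dies * cost_per_die
    subtotal = nand_cost + controller_cost
    build_cost = subtotal * (1 + overhead_fraction)
    gross_profit = retail_price - build_cost
    return {
        "dies": dies,
        "nand_cost": nand_cost,
        "build_cost": build_cost,
        "gross_profit": gross_profit,
        "margin": gross_profit / retail_price,
    }


best_case = estimate_gross_margin()
print(f"dies: {best_case['dies']}")
print(f"build cost: ${best_case['build_cost']:.2f}")
print(f"gross profit: ${best_case['gross_profit']:.2f}")
print(f"gross margin: {best_case['margin']:.1%}")
```

Calling `estimate_gross_margin(cost_per_die=20.0)` shows how sensitive the result is to NAND pricing: at the top of the quoted range, the NAND alone costs more than the $250 retail price.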

Things aren't that much easier for the bigger companies either. Intel has the luxury of (sometimes) making both the controller and the NAND. But the amount of NAND you need for a single 120GB drive is huge. Let's do the math.

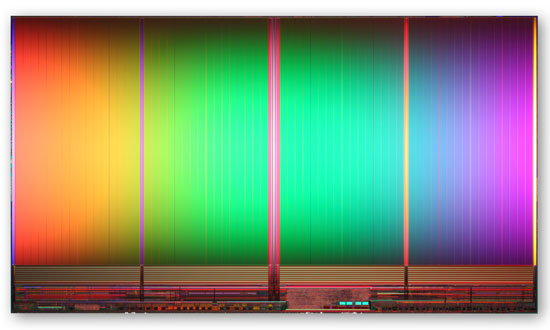

8GB IMFT 25nm MLC NAND die - 167mm²

The largest 25nm MLC NAND die you can get is an 8GB capacity. A single 8GB 25nm IMFT die measures 167mm². That's bigger than a dual-core Sandy Bridge die and 77% the size of a quad-core SNB. And that's just for 8GB.

A 120GB drive needs sixteen of these dies for a total area of 2672mm². Now we're at over 12 times the wafer area of a single quad-core Sandy Bridge CPU. And that's just for a single 120GB drive.

This 25nm NAND is built on 300mm wafers just like modern microprocessors, giving us 70685mm² of area per wafer. Assuming you can use every single square mm of the wafer (which you can't), that works out to enough NAND for 26 120GB SSDs per 300mm wafer. Wafer costs are somewhere in the four-digit range - let's assume $3000. That's $115 worth of NAND for a drive that will sell for $230, and we're not including controller costs, the other components on the PCB, the PCB itself, the drive enclosure, shipping and profit margins. Intel, as an example, likes to maintain gross margins north of 60%. For its consumer SSD business to not be a drain on the bottom line, sacrifices have to be made. While Intel's SSD validation is believed to be the best in the industry, it's likely not as good as it could be as a result of pure economics. So mistakes are made and bugs slip through.
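The wafer math above is easy to reproduce. The sketch below uses the figures from the text (167mm² per die, sixteen dies per drive, a 300mm wafer, an assumed $3000 wafer cost) and, like the text, pretends every square millimeter of the wafer is usable. The 216mm² quad-core Sandy Bridge die area is an outside figure not stated in the article, included only to check the "over 12 times" comparison; all variable names are illustrative.

```python
import math

DIE_AREA_MM2 = 167          # one 8GB 25nm IMFT MLC die
DIES_PER_DRIVE = 16         # 128GB of NAND for a 120GB drive
WAFER_DIAMETER_MM = 300
WAFER_COST_USD = 3000       # assumed in the text
QUAD_SNB_AREA_MM2 = 216     # assumption: quad-core Sandy Bridge die area, not from the article

# Silicon area consumed by the NAND of a single 120GB drive
drive_area = DIE_AREA_MM2 * DIES_PER_DRIVE

# Area of a circular wafer: pi * r^2
wafer_area = math.pi * (WAFER_DIAMETER_MM / 2) ** 2

# Idealized upper bound: ignores edge loss, scribe lines and yield
drives_per_wafer = int(wafer_area // drive_area)

nand_cost_per_drive = WAFER_COST_USD / drives_per_wafer
area_vs_quad_snb = drive_area / QUAD_SNB_AREA_MM2

print(f"NAND area per drive: {drive_area} mm^2")
print(f"wafer area: {wafer_area:.0f} mm^2")
print(f"drives per wafer (ideal): {drives_per_wafer}")
print(f"NAND cost per drive: ${nand_cost_per_drive:.2f}")
print(f"area relative to quad-core SNB: {area_vs_quad_snb:.1f}x")
```

Because real wafers lose area at the edges and to defective dies, the true number of drives per wafer is lower and the NAND cost per drive correspondingly higher than this idealized estimate.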

I hate to say it but it's just not that attractive to be in the consumer SSD business. When these drives were selling for $600+ things were different, but it's not too surprising to see that we're still having issues today. What makes it even worse is that these issues are usually caught by end users. Intel's microprocessor division would never stand for the sort of track record its consumer SSD group has delivered in terms of show-stopping bugs in the field, and Intel has one of the best track records in the industry!

It's not all about money though. Experience plays a role here as well. If you look at the performance leaders in the SSD space, none of them had any prior experience in the HDD market. Three years ago I would've predicted that Intel, Seagate and Western Digital would be duking it out for control of the SSD market. That obviously didn't happen and as a result you have a lot of players that are still fairly new to this game. It wasn't too long ago that we were hearing about premature HDD failures due to firmware problems, and I suspect it'll be a few more years before the current players get to where they need to be. Samsung may be one to watch here going forward as it has done very well in the OEM space. Apple had no issues adopting Samsung controllers, while it won't go anywhere near Marvell or SandForce at this point.

90 Comments


arklab - Thursday, August 11, 2011 - link

A pity you didn't get the new ... err revised OWC 240GB Mercury EXTREME™ Pro 6G SSD. It now uses the SandForce 2282 controller.

While said to be similar to the troubled 2281, I'm wondering if it is different enough to side step the BSOD bug.

It may well also be faster - at least by a bit.

Only the 240GB has the new controller, not the 120GB - though the 480 will also be getting it "soon".

PLEASE get one, test, and add to this review!

cigar3tte - Thursday, August 11, 2011 - link

Anand mentioned that he didn't see any BSODs with the 240GB drives he passed out. AFAIK, only the 120GB drives have the problem. Also, the BSOD is only when you are running the OS on the drive. So if you have the drive as an add-on, you'd just lose the drive, but no BSOD, I believe.

I returned my 120GB Corsair Force 3 and got a 64GB Micro Center SSD (the first SandForce controller) instead.

jcompagner - Sunday, August 14, 2011 - link

Ehh, I have one of the first 240GB Vertex 3 drives in my Dell XPS17 Sandy Bridge laptop. With the first firmware, 2.02, I didn't get BSODs once I had Windows 7 64-bit installed right (using the Intel drivers, fixing the LPM settings in the registry).

Everything was working quite right.

Then we got 2 firmware versions that were horrible: a BSOD almost every other day. Then we got 2.09, which OCZ says is a bit of a debug/intermediate release, not really a final release. And what is the end result? ROCK STABLE!! No BSOD at all anymore.

But then came the 2.11 release. They stressed that everybody should upgrade, and also upgrade to the latest 10.6 Intel drivers. I thought, OK, let's do it then.

In 2 weeks: 3 BSODs, at least 2 of them were those F6 errors again.

Now I think it is possible to go back to 2.09 again, which I am planning to do if I get 1 more hang/BSOD ...

geek4life!! - Thursday, August 11, 2011 - link

I thought OCZ purchasing Indilinx was to have their own drives made "in house". To my knowledge they already have some drives out that use the Indilinx controller, with more to come in the future.

I would like your take on this, Anand.

zepi - Thursday, August 11, 2011 - link

How about digging deeper into SSD behavior in server usage? What kind of penalties can be expected if daring admins use a couple of SSDs in a RAID for database / Exchange storage? Or should one expect problems if you run a truckload of virtual machines from a reasonably priced RAID-5 of MLC SSDs?

Does the lack of TRIM support in RAID kill the performance, and which drivers are the best etc?

cactusdog - Thursday, August 11, 2011 - link

Great review, but why wouldn't you use the latest RST driver? Supposed to fix some issues.

Bill Thomas - Thursday, August 11, 2011 - link

What's your take on the new EdgeTech Boost SSDs?

ThomasHRB - Thursday, August 11, 2011 - link

Thanks for another great article Anand, I love reading all the articles on this site. I noticed that you have also managed to see the BSOD issues that others are having. I don't know if my situation is related, but from personal experience and a bit of trial and error I found that unstable power seems to be related to the frequency of these BSOD events. I recently built a new system while I was on holiday in Brisbane, Australia.

Basic Specs:

Mainboard - Gigabyte GA-Z68X-UD3R-B3

Graphics - Gigabyte GV-N580UD-15I

CPU - Intel Core i7-2600K (stock clock)

Cooler - Corsair H60 (great for computers running in countries where ambient temps regularly reach 35 degrees Celsius)

PSU - Corsair TX750

In Brisbane my machine ran stable for 2 solid weeks (no shutdowns, only restarts during software installations, OS updates etc).

However, when I got back to Fiji and powered up my machine, I had these BSODs every day or 2 (I shut down my machine during the day when I am at work and at night when I am asleep). CPU temp never exceeded 55 degrees C (measured with CoreTemp and RealTemp) and GPU temp never went above 60 degrees C (measured with the nvidia gadget from addgadget.com).

All my computers sit behind an APC Back-UPS RS (BR1500). I also have an Onkyo TX-NR609 hooked up to the HDMI-mini port, so I disconnected that for a few days, but I saw no difference.

However, last Friday a major power spike caused my broadband router (D-Link DIR-300) to crash, and I had to reset the unit to get it working. My machine also had a BSOD at that exact same moment, so I thought it was possible that I was getting a power spike transmitted through the Ethernet cable from my ISP (the only thing that I have not got an isolation unit for).

So the next day I bought and installed an APC ProtectNET (PNET1GB), and I have not had a single BSOD running for almost 1 full week (no shutdowns, and my Onkyo has been hooked back up).

Although this narrative is long and reflects nothing more than my personal experiences, I at least found it strange that my BSOD seems to have nothing to do with the Vertex3 and more to do with random power fluctuations in my living environment.

And it may be possible that other people are having the same problem I had, and attributing it to a particular piece of hardware simply because other people have done the same attribution.

Kind Regards.

Thomas Rodgers

etamin - Thursday, August 11, 2011 - link

Great article! The only thing that's holding me back from buying an SSD is that secure data erasing is difficult on an SSD and a full rewrite of the drive is neither time efficient nor helpful to the longevity of the drive...or so I have heard from a few other sources. What is your take on this secure deletion dilemma (if it actually exists)?

lyeoh - Friday, August 12, 2011 - link

AFAIK erasing a "conventional" 1 TB drive is not very practical either (takes about 3 hours).

Options:

a) Use encryption, refer to the "noncompressible" benchmarks, use the more reliable SSDs, and use hardware acceleration or fast CPUs e.g. http://www.truecrypt.org/docs/?s=hardware-accelera...

b) Use physical destruction - e.g. thermite, throwing it into lava, etc :).