SandForce TRIM Issue & Corsair Force Series GS (240GB) Review

by Kristian Vättö on November 22, 2012 1:00 PM EST

Introduction to the TRIM Issue

TRIM in SandForce-based SSDs has always been trickier than in other SSDs because SandForce's way of handling data is a lot more complicated. Instead of just writing the data to the NAND as other SSDs do, SandForce employs a real-time compression and de-duplication engine. When even your basic design is a lot more complex, there is a higher chance that something will go wrong. When other SSDs receive a TRIM command, they can simply clean the blocks with invalid data and that's it. SandForce, on the other hand, has to check whether the data is still used by something else (i.e. referenced elsewhere thanks to de-duplication). You don't want your SSD to erase data that can be crucial to your system's stability, do you?
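To make the extra check concrete, here is a minimal sketch of why TRIM gets harder once identical blocks share one physical copy. It is a toy model only: SandForce does not disclose how its engine works, and the `DedupStore` class, its reference counting, and the SHA-256 fingerprinting are all assumptions made for illustration.

```python
import hashlib


class DedupStore:
    """Toy content-addressed store: identical blocks share one physical copy.

    Hypothetical model for illustration, not SandForce's actual design.
    """

    def __init__(self):
        self.lba_to_hash = {}   # logical block address -> content fingerprint
        self.refcount = {}      # fingerprint -> number of LBAs pointing at it
        self.physical = {}      # fingerprint -> stored data (stand-in for NAND)

    def write(self, lba, data):
        if lba in self.lba_to_hash:
            self.trim(lba)      # overwriting invalidates the old mapping first
        digest = hashlib.sha256(data).hexdigest()
        if digest not in self.physical:
            self.physical[digest] = data
        self.refcount[digest] = self.refcount.get(digest, 0) + 1
        self.lba_to_hash[lba] = digest

    def trim(self, lba):
        """Drop the mapping; reclaim the data only when nothing else uses it."""
        digest = self.lba_to_hash.pop(lba, None)
        if digest is None:
            return False        # LBA was never written, nothing to do
        self.refcount[digest] -= 1
        if self.refcount[digest] == 0:
            del self.refcount[digest]
            del self.physical[digest]
            return True         # physical copy was reclaimed
        return False            # still referenced elsewhere, must be kept


store = DedupStore()
store.write(0, b"A" * 4096)
store.write(1, b"A" * 4096)           # duplicate of LBA 0, shares one copy
first_trim_reclaimed = store.trim(0)  # LBA 1 still needs the data
second_trim_reclaimed = store.trim(1) # last reference gone, safe to reclaim
print(first_trim_reclaimed, second_trim_reclaimed)
```

In this model the first TRIM cannot free anything because another address still points at the same data; only the second one can. A drive that writes every block separately never has to make that distinction.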

As we have shown in dozens of reviews, TRIM doesn't work properly when dealing with incompressible data. It never has. That means when the drive is filled and tortured with incompressible data, it's put into a state where even TRIM does not fully restore performance. Since even Intel's own proprietary firmware didn't fix this, I believe the problem is so deep in the design that there is just no way to completely fix it. However, the TRIM issue we are dealing with today has nothing to do with incompressible data: now TRIM doesn't work properly with compressible data either.

Testing TRIM: It's Broken

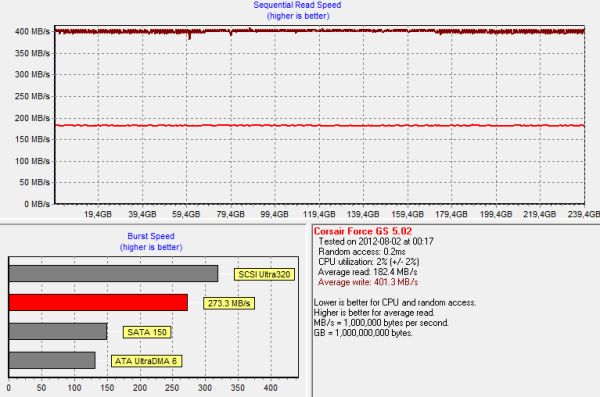

SandForce doesn't behave normally when we put it through our torture test with compressible data. While other SSDs experience a slowdown in write speed, SandForce's write speed remains the same but read speed degrades instead. Below is an HD Tach graph of the 240GB Corsair Force GS, which was first filled with compressible data and then peppered with compressible 4KB random writes (100% LBA space, QD=32):

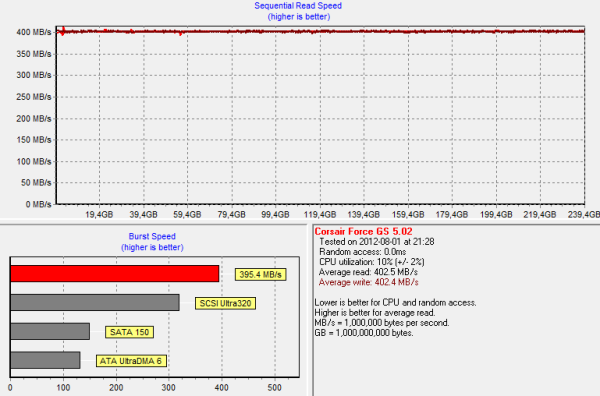

And for comparison, here is the same HD Tach graph run on a secure-erased Force GS:

As you can see, write speed wasn't affected at all by the torture. However, read performance degraded by more than 50%, from 402MB/s to 182MB/s. That is actually quite odd because reading from NAND is a far simpler process: you simply keep applying voltages until you get the desired outcome. There is no read-modify-write scheme, which is the reason why write speed degrades in the first place. We don't know the exact reason why read speed degrades in SandForce-based SSDs but once again, it seems to be the way it was designed. My guess is that the degradation has something to do with how the data is decompressed, but most likely there is something much more complicated going on here.

Read speed degradation is not the real problem, however. So far we haven't come across a consumer SSD that wouldn't experience any degradation after enough torture. Given that consumer SSDs typically have only 7-15% of over-provisioned NAND, sooner or later you will run into a situation where read-modify-write is triggered, which will result in a substantial decline in write performance. With SandForce your write speed won't change (at least not by much) but the read speed goes downhill instead. It's a trade-off, but neither is worse than the other as all workloads consist of both reads and writes.
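As a rough illustration of where a 7-15% range can come from, the sketch below computes spare area as the gap between raw NAND (counted in binary gibibytes) and advertised capacity (counted in decimal gigabytes). The 256GiB raw figure and both capacity points are assumptions chosen for the example, not numbers taken from this review, and the `over_provisioning` helper is hypothetical.

```python
GIB = 2**30   # bytes in a gibibyte (how NAND capacity is usually counted)
GB = 10**9    # bytes in a gigabyte (how drive capacity is advertised)


def over_provisioning(raw_nand_bytes, user_bytes):
    """Spare area as a fraction of user-visible capacity."""
    return (raw_nand_bytes - user_bytes) / user_bytes


# Assumed: 256GiB of raw NAND exposed as a 240GB drive
op_240 = over_provisioning(256 * GIB, 240 * GB)
# Assumed: the same 256GiB of raw NAND exposed as a 256GB drive
op_256 = over_provisioning(256 * GIB, 256 * GB)

print(f"240GB drive: {op_240:.1%} spare")
print(f"256GB drive: {op_256:.1%} spare")
```

Under these assumptions the two cases work out to roughly 14.5% and 7.4%, which brackets the range quoted above. Real drives may reserve part of that space for other purposes, so treat the result as an upper bound on usable spare area.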

To test TRIM, I TRIM'ed the drive after our 20 minute torture:

And here is the real issue. Normally TRIM would restore performance to a clean-level state, but this is not the case. Read speed is definitely up from the dirty state but it's not fully restored. Running TRIM again didn't yield any improvement either, so something is clearly broken here. Also, it didn't matter whether I filled and tortured the drive with compressible, pseudo-random, or incompressible data; the end result was always the same when I ran HD Tach.
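For readers unsure what separates compressible from incompressible test data, the following sketch compares two 4KB blocks. It uses zlib purely as a stand-in; the controller's actual compression engine is undisclosed, and the `compression_ratio` helper is a hypothetical name introduced for this example.

```python
import os
import zlib

BLOCK = 4096  # 4KB, matching the random-write size used in the torture test


def compression_ratio(data):
    """Compressed size divided by original size (lower = more compressible)."""
    return len(zlib.compress(data)) / len(data)


compressible = bytes(BLOCK)          # all zeros, the best case for a compressor
incompressible = os.urandom(BLOCK)   # random bytes, nothing to squeeze out

ratio_zeros = compression_ratio(compressible)
ratio_random = compression_ratio(incompressible)

print(f"zeros:  {ratio_zeros:.3f}")
print(f"random: {ratio_random:.3f}")
```

The zero-filled block shrinks to a tiny fraction of its size, while the random block does not shrink at all and even grows slightly from format overhead. A compressing controller has far less to write in the first case, which is why the two data types normally behave so differently on SandForce drives.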

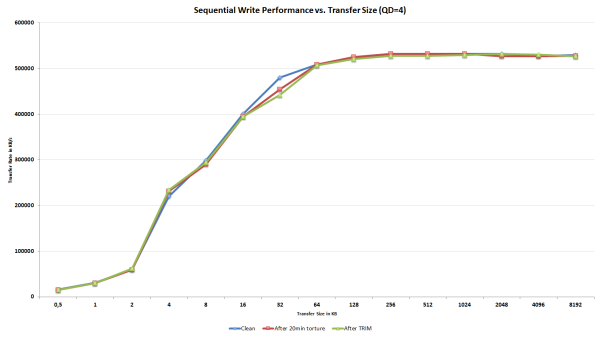

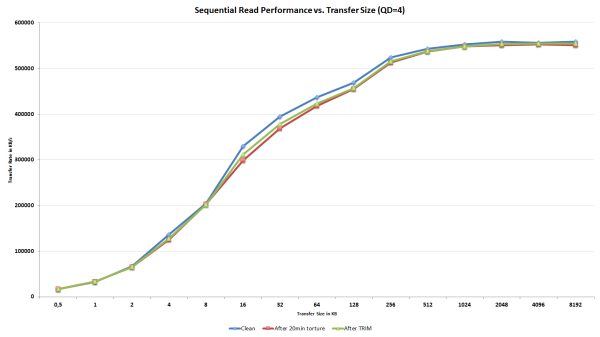

I didn't want to rely completely on HD Tach as it's always possible that one benchmark behaves differently from others, especially when it comes to SandForce. I turned to ATTO since it uses highly compressible data as well to see if it would report data similar to our HD Tach results. Once again, I first secure erased the drive, filled it with sequential data and proceeded to torture the drive with 4KB random writes (LBA space 100%, QD=32) for 20 minutes:

As expected, write speed is not affected except for an odd bump at transfer size of 32KB. Since we are only talking about one IO size and performance actually degraded after TRIM, it's completely possible that this is simply an error.

The read speed ATTO graph is telling the same story as our HD Tach graphs; read speed does indeed degrade and is not fully restored after TRIM. The decrease in read speed is a lot smaller compared to our HD Tach results, but it should be kept in mind that ATTO reads/writes a lot less data to the drive compared to HD Tach, which reads/writes across the whole drive.

What we can conclude from the results is that TRIM is definitely broken in SandForce SSDs with firmware 5.0.0, 5.0.1, or 5.0.2. If your SandForce SSD is running the older 3.x.x firmware, you have nothing to worry about because this TRIM issue is limited strictly to 5.x.x firmware. Luckily, this is not the end of the world because SandForce has been aware of this issue for a long time and a fix is already available for some drives. Let's have a look at how the fix works.


56 Comments


Sivar - Saturday, November 24, 2012

Do you understand how data deduplication works?

This is a rhetorical question. Those who have read your comments know the answer.

Please read the Wikipedia article on data deduplication, or some other source, before making further comments.

JellyRoll - Saturday, November 24, 2012

I am repeating the comments above for you. Since you referenced the Wiki, I would kindly suggest that you might have a look at it yourself before commenting further.

"the intent of storage-based data deduplication is to inspect large volumes of data and identify large sections – such as entire files or large sections of files – that are identical, in order to store only one copy of it."

This happens without any regard to whether data is compressible or not.

If you have two matching sets of data, be they incompressible or not, they would be subject to deduplication. It would merely require mapping to the same LBA addresses.

For instance, if you have two files that consist of largely incompressible data, but they are still carbon copies of each other, they are still subject to data deduplication.

'nar - Monday, November 26, 2012

You contradict yourself dude. You are regurgitating the words, but their meaning isn't sinking in. If you have two sets of incompressible data, then you have just made it compressible, i.e. 2=1

When the drive is hammered with incompressible data, there is only one set of data. If there were two or more sets of identical data then it would be compressible. De-duplication is a form of compression. If you have incompressible data, it cannot be de-duped.

Write amplification improvements come from compression, as in 2 files=1 file. Write less, lower amplification. Compressible data exhibits this, but incompressible data cannot because no two files are identical. Write amp is still high with incompressible data like everyone else. Your conclusion is backwards. De-duplication can only be applied on compressible data.

The previous article that Anand himself wrote suggested dedupe, it did not state that it was used, as that was not divulged. Either way, dedupe is similar to compression, hence the description. Although vague, it's the best we got from Sandforce to describe what they do.

What Sandforce uses is speculation anyhow, since it deals with trade secrets. If you really want to know you will have to ask Sandforce yourself. Good luck with that. :)

JellyRoll - Tuesday, November 27, 2012

If you were to write 100 exact copies of a file, with each file consisting of incompressible data and 100MB in size, deduplication would only write ONE file, and link back to it repeatedly. The other 99 instances of the same file would not be repeatedly written.

That is the very essence of deduplication.

SandForce processors do not exhibit this characteristic, be it 100 files or even only two similar files.

Of course SandForce doesn't disclose their methods, but full on terming it dedupe is misleading at best.

extide - Wednesday, November 28, 2012

DeDuplication IS a form of compression dude. Period!!

FunnyTrace - Wednesday, November 28, 2012

SandForce presumably uses some sort of differential information update. When a block is modified, you find the difference between the old data and the new data. If the difference is small, you can just encode it over a smaller number of bits in the flash page. If you do the difference encoding, you cannot gc the old data unless you reassemble and rewrite the new data to a different location.

Difference encoding requires more time (extra read, processing, etc). So, you must not do it when the write buffer is close to full. You can always choose whether or not you do differential encoding.

It is definitely not deduplication. You can think of it as compression.

A while back my prof and some of my labmates tried to guess their "DuraWrite" (*rolls eyes*) technology and this is the best guess we have come up with. We didn't have the resources to reverse engineer their drive. We only surveyed published literature (papers, patents, presentations).

Oh, and here's their patent: http://www.google.com/patents/US20120054415

JellyRoll - Friday, November 30, 2012

Hallelujah!

Thanks funnytrace, i had a strong suspicion that it was data differencing. In the linked patent document it lists this 44 times. Maybe that many repetitions will sink in for some who still believe it is deduplication?

Also, here is a link to data differencing for those that wish to learn..

http://en.wikipedia.org/wiki/Data_differencing

Radoslav Danilak is listed as the inventor, not surprising i believe he was SandForce employee #2. He is now running Skyera, he is an excellent speaker btw.

extide - Saturday, November 24, 2012

It's no different than SANs and ZFS and other enterprise level storage solutions doing block level de-duplication. It's not magic, and it's not complicated. Why is it so hard to believe? I mean, you are correct that the drive has no idea what bytes go to what file, but it doesn't have to. As long as the controller sends the same data back to the host for a given read on an lba as the host sent to write, it's all gravy. It doesn't matter what ends up on the flash.

JellyRoll - Saturday, November 24, 2012

Absolutely correct. However, they have much more powerful processors. You are talking about a very low wattage processor that cannot handle deduplication on this scale. SandForce also does not make the statement that they actually DO deduplication.

FunBunny2 - Saturday, November 24, 2012

here: http://thessdreview.com/daily-news/latest-buzz/ken...

"Speaking specifically on SF-powered drives, Kent is keen to illustrate that the SF approach to real time compression/deduplication gives several key advantages."

Kent being the LSI guy.