Intel Disables TSX Instructions: Erratum Found in Haswell, Haswell-E/EP, Broadwell-Y

by Ian Cutress on August 12, 2014 8:20 PM EST

One of the main features Intel was promoting at the launch of Haswell was TSX – Transactional Synchronization eXtensions. In our analysis, Johan explains that TSX enables the CPU to process a series of traditionally locked instructions on a dataset in a multithreaded environment without locks, allowing each core to potentially violate each other’s shared data. If the series of instructions is computed without this violation, the code passes through at a quicker rate – if an invalid overwrite happens, the code is aborted and takes the locked route instead. All a developer has to do is link in a TSX library and mark the start and end parts of the code.

News coming from Intel’s briefings in Portland last week boil down to an erratum found with the TSX instructions. Tech Report and David Kanter of Real World Technologies are stating that a software developer outside of Intel discovered the erratum through testing, and subsequently Intel has confirmed its existence. While errata are not new (Intel’s E3-1200 v3 Xeon CPUs already have 140 of them), what is interesting is Intel’s response: to push through new microcode to disable TSX entirely. Normally a microcode update would suggest a workaround, but it would seem that this a fundamental silicon issue that cannot be designed around, or intercepted at an OS or firmware/BIOS level.

Intel has had numerous issues similar to this in the past, such as the FDIV bug, the f00f bug and more recently, the P67 B2 SATA issues. In each case, the bug was resolved by a new silicon stepping, with certain issues (like FDIV) requiring a recall, similar to recent issues in the car industry. This time there are no recalls, the feature just gets disabled via a microcode update.

The main focus of TSX is in server applications rather than consumer systems. It was introduced primarily to aid database management and other tools more akin to a server environment, which is reflected in the fact that enthusiast-level consumer CPUs have it disabled (except Devil’s Canyon). Now it will come across as disabled for everyone, including the workstation and server platforms. Intel is indicating that programmers who are working on TSX enabled code can still develop in the environment as they are committed to the technology in the long run.

Overall, this issue affects all of the Haswell processors currently in the market, the upcoming Haswell-E processors and the early Broadwell-Y processors under the Core M branding, which are currently in production. This issue has been found too late in the day to be introduced to these platforms, although we might imagine that the next stepping all around will have a suitable fix. Intel states that its internal designs have already addressed the issue.

Intel is recommending that Xeon users that require TSX enabled code to improve performance should wait until the release of Haswell-EX. This tells us two things about the state of Haswell: for most of the upcoming LGA2011-3 Haswell CPUs, the launch stepping might be the last, and the Haswell-EX CPUs are still being worked on. That being said, if the Haswell-E/EP stepping at launch is not the last one, Intel might not promote the fact – having the fix for TSX could be a selling point for Broadwell-E/EP down the line.

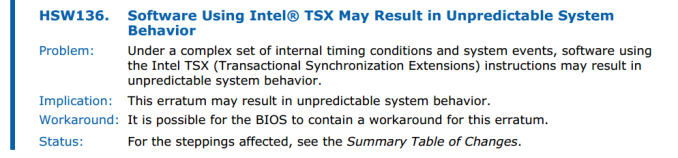

For those that absolutely need TSX, it is being said that TSX can be re-enabled through the BIOS/firmware menu should the motherboard manufacturer decide to expose it to the user. Reading though Intel’s official errata document, we can confirm this:

We are currently asking Intel what the required set of circumstances are to recreate the issue, but the erratum states ‘a complex set of internal timing conditions and system events … may result in unpredictable system behaviour’. There is no word if this means an unrecoverable system state or memory issue, but any issue would not be in the interests of the buyers of Intel’s CPUs who might need it: banks, server farms, governments and scientific institutions.

At the current time there is no road map for when the fix will be in place, and no public date for the Haswell-EX CPU launch. It might not make sense for Intel to re-release the desktop Haswell-E/EP CPUs, and in order to distinguish them it might be better to give them all new CPU names. However the issue should certainly be fixed with Haswell-EX and desktop Broadwell onwards, given that Intel confirms they have addressed the issue internally.

Source: Twitter, Tech Report

63 Comments

View All Comments

psyq321 - Wednesday, August 13, 2014 - link

Well, considering that Haswell EP is in mass production already, and final qualification-stage samples were out for months, the timing is bad.I doubt Intel is going to stop imminent release of HSW-EP, which is in some weeks anyway.

SNB-EP situation was lucky, because VT-d affected C0/C1 steppings only which was only mass produced for the HEDT (consumer) part, while most of the production-grade Xeons were of C2 stepping.

This time, the situation is different - if this bug affects the latest stepping of HSW-EP, first Xeons that will be sold in public are also going to be affected.

Not good, since TSX is exactly kind of a feature you'd see in heavy use in the market for EP SKUs.

The only good for some people might be that the ebay market might soon be loaded with diverted HSW-EP engineering samples since all OEMs will probably rapidly move to qualification of the next stepping (with TSX fixes).

lmcd - Wednesday, August 13, 2014 - link

SNB-E VT-d got fixed. I have C1 SNB-E, and an updated motherboard that fixed it.MrSpadge - Wednesday, August 13, 2014 - link

Don't think that others don't make such mistakes - they simply don't get as much attention due to less relevance when shipping smaller numbers. Or worse, they might not publish the errors at all.jrg - Thursday, September 4, 2014 - link

Or maybe the others don't see enough use that anyone ever discovers the bug....romrunning - Wednesday, August 13, 2014 - link

" they still can't do their testing right and in advance..."Well, I'm sure you can do it better, so please apply right away to Intel. </s>

All snark aside, c'mon, you have humans coming up with tests to try to confirm compatibility, and none of them are perfect. So if you think they can catch every esoteric situation, then I guess you'll have to adjust your viewpoint.

I'm amazed they catch as many as they do!

TiGr1982 - Wednesday, August 13, 2014 - link

Well, in the past I happened to work in testing (software testing, however) for 5 years full time and part time, and found more than 1500 bugs during that time myself personally, so I know what testing is, and that's why I'm so critical regarding bugs. OK, hardware testing is not the same thing, but nevertheless...nbtech - Thursday, August 14, 2014 - link

You make it seem like they don't know what they're doing, but your experience with software testing is much different. I have experience with hardware verification, and I can assure you that Intel does quite a bit of it. Its worth it for them to prevent these types of bugs. As you can imagine a widespread issue like this could end up costing billions. Luckily its just TSX, which probably doesn't affect too many people, and its possible that less verification effort was devoted to that piece and they launched it anyway and planned a fix for the next stepping. It sucks, but its not a showstopper.jhh - Friday, August 15, 2014 - link

The more interesting question is how many bugs did you miss which were found downstream from you. If you found 1500 bugs, assuming that you were 90% effective, which is an unreasonably high percentage, then there were 1666 bugs originally, and you missed 166 of them. Per Capers Jones, the average test efficiency is 30%, while 70% of the bugs are missed.Most hardware has some errata. I was part of a team which was fighting a bug for a couple of months, which turned out to be caused an errata (not Intel in this case). While the errata was known, the applicability to our software was not immediately apparent. The problem occurred when there was a new batch of hardware, and the problem was originally thought to be caused by the new batch. The real reason was that enough hardware had been produced that the high order bit in one byte of the (sequentially assigned) MAC address was set, and the way the MAC address was used exercised the errata. It's incredibly expensive to fix an IC after production, and even more so when the IC has been installed on a circuit board, and the worst case is when the circuit board has be shipped to many locations and is in use. Because of this, errata is usually addressed by a workaround, not by replacing the part, as long as most of the chip works.

TiGr1982 - Friday, August 15, 2014 - link

Yes, I agree with you and I understand this; so, hardware errors are better be found and fixed at the design and ES verification and testing stages. Of course, easier said than done.dylan522p - Monday, August 18, 2014 - link

Hardware is SOOOO much more complicated than software. You have no idea.