FMS 2014: SanDisk ULLtraDIMM to Ship in Supermicro's Servers

by Kristian Vättö on August 18, 2014 7:00 AM EST

We are running a bit late with our Flash Memory Summit coverage as I did not get back from the US until last Friday, but I still wanted to cover the most interesting tidbits of the show. ULLtraDIMM (Ultra Low Latency DIMM) was initially launched by SMART Storage a year ago but SanDisk acquired the company shortly after, which made ULLtraDIMM a part of SanDisk's product portfolio.

The ULLtraDIMM was developed in partnership with Diablo Technologies and it is an enterprise SSD that connects to the DDR3 interface instead of the traditional SATA/SAS or PCIe interfaces. IBM was the first to partner with the two to ship the ULLtraDIMM in servers, but at this year's show SanDisk announced that Supermicro will be joining as the second partner to use ULLtraDIMM SSDs. More specifically, Supermicro will be shipping ULLtraDIMM in its Green SuperServer and SuperStorage platforms, and availability is scheduled for Q4 this year.

| SanDisk ULLtraDIMM Specifications | |
| --- | --- |
| Capacities | 200GB & 400GB |
| Controller | 2x Marvell 88SS9187 |
| NAND | SanDisk 19nm MLC |
| Sequential Read | 1,000MB/s |
| Sequential Write | 760MB/s |
| 4KB Random Read | 150K IOPS |
| 4KB Random Write | 65K IOPS |
| Read Latency | 150 µsec |
| Write Latency | < 5 µsec |
| Endurance | 10/25 DWPD (random/sequential) |
| Warranty | Five years |
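
To put the endurance rating in perspective, DWPD (drive writes per day) can be converted into total data written. The sketch below assumes the rating applies across the full five-year warranty, which is the usual convention but is not something SanDisk states in the specifications above. The function name `total_writes_tb` is purely illustrative.

```python
def total_writes_tb(capacity_gb, dwpd, warranty_years=5):
    """Total data written over the warranty period, in decimal terabytes.

    Assumption: the DWPD rating holds for every day of the warranty.
    """
    days = 365 * warranty_years
    return capacity_gb * dwpd * days / 1000


# Capacities and DWPD ratings are taken from the specification table.
for capacity_gb in (200, 400):
    for workload, dwpd in (("random", 10), ("sequential", 25)):
        tb = total_writes_tb(capacity_gb, dwpd)
        print(f"{capacity_gb}GB, {workload}: {tb:,.0f} TB written")
```

Under that assumption, the 200GB model at 10 DWPD works out to 3,650TB of random writes, and the 400GB model at 25 DWPD to 18,250TB of sequential writes.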

We have not covered the ULLtraDIMM before, so I figured I would provide a quick overview of the product as well. Hardware-wise, the ULLtraDIMM consists of two Marvell 88SS9187 SATA 6Gbps controllers configured in an array by a custom chip with a Diablo Technologies label, which I presume is also the secret behind DDR3 compatibility. ULLtraDIMM supports F.R.A.M.E. (Flexible Redundant Array of Memory Elements), which uses parity to protect against page/block/die level failures and is SanDisk's answer to SandForce's RAISE and Micron's RAIN. Power loss protection is supported as well and is provided by an array of capacitors.
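
SanDisk has not published how F.R.A.M.E. is implemented, so the following is only a generic illustration of how parity can recover a lost page, not SanDisk's actual scheme. It uses plain XOR parity across a stripe of equally sized pages; the helper name `xor_pages` and the sample data are made up for the example.

```python
from functools import reduce


def xor_pages(pages):
    """Byte-wise XOR of equally sized pages."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*pages))


# Three hypothetical data pages that would live on different dies.
data = [b"page-A00", b"page-B11", b"page-C22"]

# The parity page is written alongside the data pages.
parity = xor_pages(data)

# Simulate losing the second page (e.g. a failed die) and rebuild it
# by XOR-ing the surviving pages with the parity page.
survivors = [data[0], data[2], parity]
recovered = xor_pages(survivors)

assert recovered == data[1]
```

A single XOR parity page like this can rebuild only one missing page per stripe; whether F.R.A.M.E. uses this or a stronger code is not known from the information available here.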

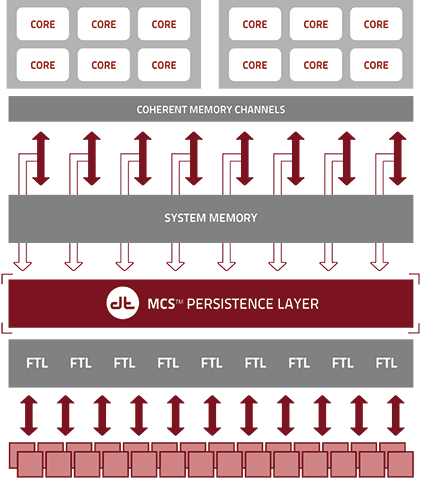

The benefit of using a DDR3 interface instead of SATA/SAS or PCIe is lower latency because the SSDs sit closer to the CPU. The memory interface has also been designed with parallelism in mind and can thus take greater advantage of multiple drives without sacrificing performance or latency. SanDisk claims write latency of less than five microseconds, which is lower than what even PCIe SSDs offer (e.g. Intel SSD DC P3700 is rated at 20µs).

Unfortunately, there are no third-party benchmarks for the ULLtraDIMM (update: there actually are benchmarks) so it is hard to say how it really stacks up against PCIe SSDs, but the concept is definitely intriguing. In the end, NAND flash is memory and putting it on the DDR3 interface is logical, even though NAND is not as fast as DRAM. NVMe is designed to make PCIe more flash-friendly, but there are still some intensive workloads that should benefit from the lower latency of the DDR3 interface. Hopefully we will be able to get a review sample soon, so we can put ULLtraDIMM through our own tests and see how it really compares with the competition.

30 Comments


mmrezaie - Monday, August 18, 2014 - link

yeah but I still think there can be some other good ideas left exploring or maybe there are some just as research projects! but thanks this is actually very interesting.

ozzuneoj86 - Monday, August 18, 2014 - link

I like how it looks... reminds me of expansion cards from the 80s and 90s with several different shapes and colors of components all crammed onto one PCB.

nathanddrews - Monday, August 18, 2014 - link

I love stuff like this... even if I'll never use it. XD

HollyDOL - Monday, August 18, 2014 - link

Is there any need to have motherboard/cpu (memory controller) support for this or does it work in any DDR3 slot?

MrSpadge - Monday, August 18, 2014 - link

To me it seems like better controllers on PCIe with NVMe are still far sufficient for NAND. They claim a write access time of 5 µs, yet in the benchmarks the latency achieved in the real world is still comparable to the Fusion IO. This tells me that both drives are still pretty much NAND-limited in their performance and the slight overhead reduction by moving from PCIe to DDR3 simply doesn't matter (yet).

And servers still need RAM, usually plenty of it. NAND can never replace it due to its limited write cycles. Putting sophisticated memory controllers into the CPUs and those sockets onto the PCB costs much more than comparably simple PCIe lanes. It seems like a waste not to use the memory sockets for DRAM.

One can argue that with 200 & 400 GB per ULLtraDIMM, impressive capacities are possible. But any machine with plenty of DIMM slots to spare will also have plenty of PCIe lanes available.

Cerb - Monday, August 18, 2014 - link

5 µs is much higher than the NAND itself, at least that they've given public specs for, so I don't get where that's coming from.

It's not so much a matter of whether PCIe is or isn't good enough, really, as it is that this allows 1U, and 2U proprietary FFs with little to no room for cards, to be much more capable, and practical. PCIe cards take up a lot of room themselves, and M.2 2280 takes up a lot of board space (compare it to DIMM slots).

Kristian Vättö - Monday, August 18, 2014 - link

IMFT's 64Gbit 20nm MLC has a typical page program latency of 1,300µs, so 5µs is definitely much lower than the average NAND latency. Of course, with efficient DRAM caching, write-combining and interleaving it's possible to overcome the limits of a single NAND die but ultimately performance is still limited by NAND.

p1esk - Monday, August 18, 2014 - link

This is a step in the right direction - towards eliminating DRAM. As more CPU cache becomes available on die (especially when technologies such as Micron's Memory Cube take off), and as flash becomes faster, it will be possible to load everything from SSDs straight into cache.

Hopefully the next step would be getting rid of the need for virtual memory.

Cerb - Monday, August 18, 2014 - link

Why on Earth would you want to get rid of virtual memory? It's the best thing since sliced cinnamon roll bread, and is a fundamental part of all non-embedded modern software.

p1esk - Monday, August 18, 2014 - link

Why on Earth would you want to have this ugly kludge if you had a choice? Maybe you enjoy all that extra complexity in your processor designs? How about all the overhead of TLBs and additional processing for every single instruction?

The virtual memory concept was introduced to look at a hard drive as an extension of main memory when a program could not fit in DRAM. This is not the case anymore.

It's true that it also has been used for a different purpose - to create separate logical address spaces for each process, which is tricky when you have a limited amount of DRAM. However, it becomes much simpler if you have 1TB of SSD address space available. Now nothing stops you from physical separation of address space on the drive. Just allocate one GB to the first process, a second GB to the second, and so on. The process ID can indicate the offset needed to get to that address space. Problem solved, no need for translation. I'm simplifying of course, but when you have terabytes of memory, you simply don't have most of the problems virtual memory was intended to solve, and those that remain can be solved much more elegantly.