ARM Challenging Intel in the Server Market: An Overview

by Johan De Gelas on December 16, 2014 10:00 AM EST

Introduction to ARM Servers

"Intel does not have any competition whatsoever in the midrange and high-end (x86) server market". We came to that rather boring conclusion in our review of the Xeon E5-2600 v2. That date was September 2013.

At the same time, the number of announcements and press releases about ARM server SoCs based on the new ARMv8 ISA was almost uncountable. AppliedMicro announced its 64-bit ARMv8 X-Gene back in late 2011. Calxeda sent us a real ARM-based server at the end of 2012. Texas Instruments, Cavium, AMD, Broadcom, and Qualcomm announced that they would be challenging Intel in the server market with ARM SoCs. Today, the first retail products have finally appeared in the HP Moonshot server.

There has been no lack of muscular statements about ARM server SoCs. For example, Andrew Feldman, the founder of micro server pioneer Seamicro and the former head of the server department at AMD, stated: "In the history of computers, smaller, lower-cost, and higher-volume CPUs have always won. ARM cores, with their low-power heritage in devices, should enable power-efficient server chips." One of the most infamous Silicon Valley insiders even went so far as to say, "ARM servers are currently steamroller-ing Intel in key high margin areas but for some reason the company is pretending they don’t exist."

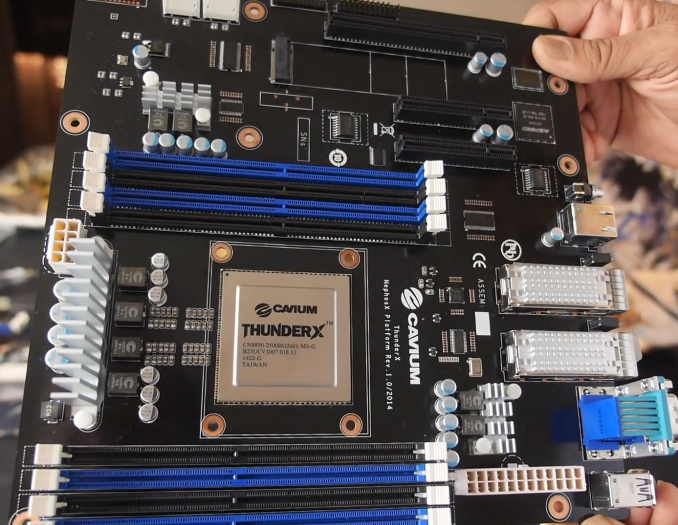

Rest assured, we will not stop at opinions and press releases. As people started talking specifications, we really got interested. Let's see how the Cavium Thunder-X, AppliedMicro X-Gene, Broadcom Vulcan, and AMD Opteron A1100 compare to the current and future Intel server chips. We are working hard to get all these contenders in our lab, and we are having some success, but it is too soon for a full-blown shootout.

Micro Servers and Scale-out Servers

Micro servers were the first target of the ARM licensees. Typically, a discussion about micro servers quickly turns into a wimpy versus brawny core debate. One reason is that Seamicro, the inventor of the micro server, first entered the market with Atom CPUs. The second reason is that Calxeda, the pioneer of ARM-based servers, had to work with the fact that the Cortex-A9 core was a wimpy core that could not deal with most server workloads. Wikipedia also associates micro servers with very low power SoCs: “Very low power and small size server based on System-on-Chip, typically centered around ARM processor”.

Micro servers are typically associated with low-end servers that serve static HTML, cache web objects, and/or function as slow storage servers. It's true that you will not find a 150W high-end Xeon inside a micro server, but that does not mean that micro servers are defined by low-power SoCs. In fact, the most successful micro servers are based on 15-45W Xeon E3s. Seamicro, the pioneer of micro servers, clearly indicated that there was little interest in the low-power Atom-based systems, but that sales spiked once they integrated Xeon E3s.

Currently micro servers are still a niche market. But micro servers are definitely not hype; they are here to stay, although we don't think they will be as dominant as rack servers or even blade servers in the near future. To understand why we would make such a bold statement, it is important to understand the real reason why micro servers exist.

Let us go back to the past decade (2005-2010). Virtualization was (and is) embraced as the best way to make enterprises with many heterogeneous applications running on underutilized servers more efficient. RAM capacity and core counts shot up. Networking and storage lagged but caught up, more or less, as flash storage, 10 Gbit Ethernet, and SRIOV became available. But the trend to notice was that virtualization made servers more I/O feature rich: the number and speed of NICs and PCI-e expansion slots for storage increased quickly. Servers based on the Xeon E5 and Opterons have become "software defined datacenters in a box" with virtual switching and storage. The main driver for buying complex servers with high processor counts and more I/O devices is simple: professionals want the benefits that highly integrated virtualization software brings. Faster provisioning, high availability (HA), live migration (vMotion), disaster recovery (DR), keeping old services alive (running on Windows 2000 for example): virtualization made everything so much easier.

But what if you did not need those features because your application is spread among many servers and can take a few hardware outages? What if you do not need the complex hardware sharing features such as SRIOV and VT-d? The prime example is an application like Facebook, but quite a few smaller web farms are in a similar situation. If you do not need the features that come with enterprise virtualization software, you are just adding complexity and (consultancy/training) costs to your infrastructure.

Unfortunately, as always, the industry analysts came up with unrealistically high predictions for the new micro server market: in 2016, they would be 10% of the market, no less than "a 50 fold jump"! The simple truth is that there is a lot of demand for "non-virtualized" servers, but they do not all have to be as dense and low power as the micro servers inside the Boston Viridis. The "very low power", extremely dense micro servers with their very low power SoCs are not a good match for most workloads out there, with the exception of some storage and memcached machines. There is, however, a much larger market for servers that are denser than the current rack servers but less complex and cheaper than the current blade servers. That market wants systems with a relatively strong SoC, which currently means SoCs with a TDP in the 20W-80W range.

Not convinced? ARM and the ARM licensees are. The first thing that Lakshmi Mandyam, the director of server systems at ARM, emphasized when we talked to her is that ARM servers will be targeting scale-out servers, not just micro servers. The big difference is that micro servers use (very) low power CPUs, while scale-out servers are simply servers that can run lots and lots of threads in parallel.

78 Comments

aryonoco - Wednesday, December 17, 2014

I just wanted to thank you Johan De Gelas for this very insightful and interesting article. Hugely enjoyed reading it and your thoughts on the subject.

Good to see high quality content continue to be published at AT now that Anand has left.

JohanAnandtech - Wednesday, December 17, 2014

aryonoco, Jann Thanks for letting me know. A good motivation to always push a bit harder to make sure I don't let my readers down :-).

jann5s - Wednesday, December 17, 2014

Thank you Johan, for writing this very interesting article!

przemo_li - Wednesday, December 17, 2014

Very well written walk through current and possible CPU/SOC parts. Will there be similar piece for software?

ARM (embedded) folks aren't famous for quality drivers/code.

It must change, so it will change. But for now such overview would be great!

bobbozzo - Wednesday, December 17, 2014

Typo on page 2: "(4 Slots x 8 DIMMs)" - change 8 to 8GB

Thanks

bobbozzo - Wednesday, December 17, 2014

and page 4: "you will be able to choose between SoCs that have 100 Gbit Ethernet and 10GBit Ethernet."

should 100 be 40?

bobbozzo - Wednesday, December 17, 2014

Page 12: "Most of them are the usual IPSec, TPC offloading engines"

Should that be TCP?

Also, are there still accelerators for AntiVirus engines and IDS/IPS search (there were some back in 2005).

Thanks

bobbozzo - Wednesday, December 17, 2014

...I guess that's what the RegEx would be useful for.

However, not all IDS/IPS / A/V patterns use RegEx, and there are other means of acceleration.

eanazag - Wednesday, December 17, 2014

Welcome back Johan. Glad to see you're still writing here. Good stuff in the article.

JKflipflop98 - Wednesday, December 17, 2014

I simply don't get where this whole "microserver" thing is coming from. By the time you cluster up enough ARM processors to match the processing power of an Intel/AMD solution, you're burning just as much power and spent just as much money as you would have by using x86 in the first place. Except now you have to use some janky middleware solution because all your software is x86 and you're running on ARM cores.