Intel SSD 750 PCIe SSD Review: NVMe for the Client

by Kristian Vättö on April 2, 2015 12:00 PM EST

Sequential Read Performance

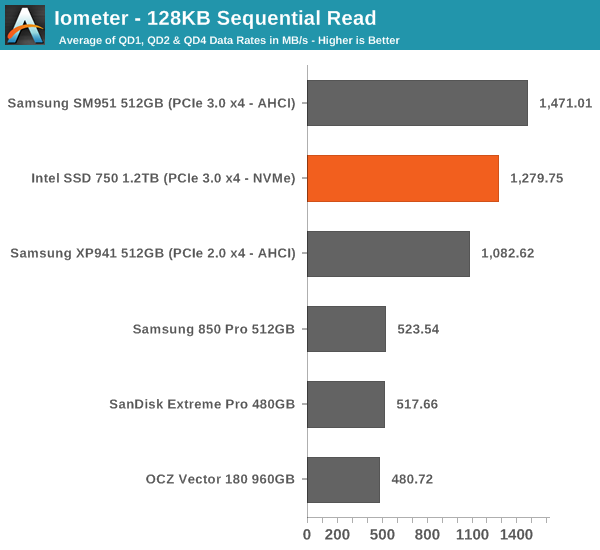

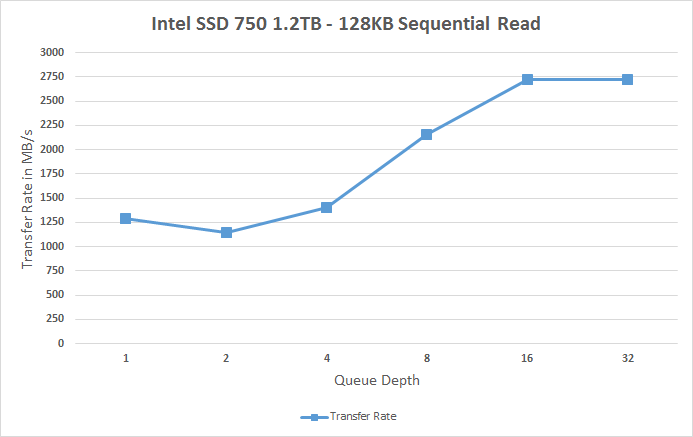

Our sequential tests are conducted in the same manner as our random IO tests. Each queue depth is tested for three minutes with no idle time between the tests, and the IOs are 4K aligned, similar to what you would experience in a typical desktop OS.

In sequential read performance the SM951 keeps its lead. While the SSD 750 reaches up to 2.75GB/s at high queue depths, the scaling at small queue depths is very poor. I think this is an area where Intel should have put in a little more effort because it would translate to better performance in more typical workloads.


Sequential Write Performance

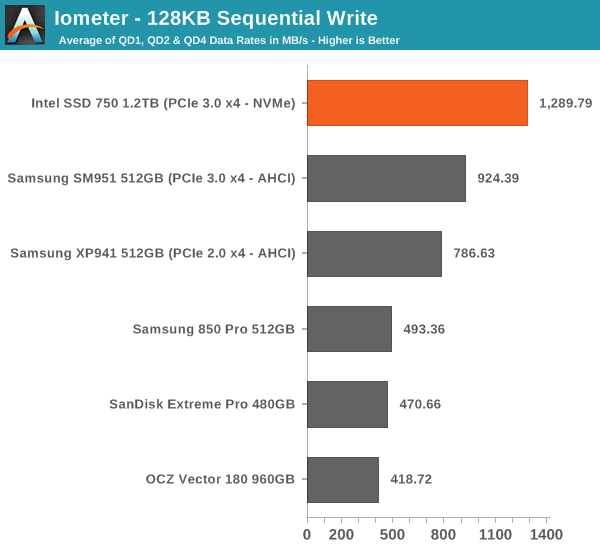

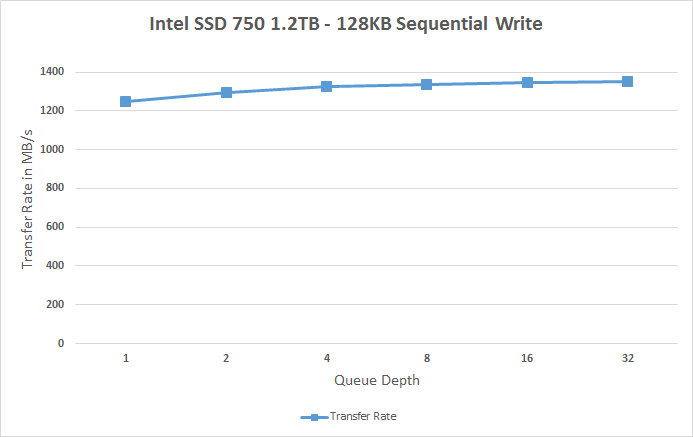

Sequential write testing differs from random testing in the sense that the LBA span is not limited. That's because sequential IOs don't fragment the drive, so the performance will be at its peak regardless.

The SSD 750 is faster in sequential writes than the SM951, but the difference isn't all that big when you take into account that the SSD 750 has more than twice the amount of NAND. The SM951 did have some throttling issues, as you can see in the graph below, and I bet that with a heatsink and proper cooling the two would be nearly identical because at a queue depth of 1 the SSD 750 is only marginally faster. It's again a bit disappointing that the SSD 750 isn't that well optimized for sequential IO because there's practically no scaling at all and the drive maxes out at ~1350MB/s.


132 Comments


Kristian Vättö - Friday, April 3, 2015 - link

As I explained in the article, I see no point in testing such high queue depths in a client-oriented review because the portion of such IOs is marginal. We are talking about a fraction of a percent, so while it would show big numbers, it has no relevance to the end-user.

voicequal - Saturday, April 4, 2015 - link

Since you feel strongly enough to levy a personal attack, could you also explain why you think QD128 is important? Anandtech's storage benchmarks are likely a much better indication of user experience unless you have a very specific workload in mind.

d2mw - Friday, April 3, 2015 - link

Guys, why are you cut-and-pasting the same old specs table and formulaic article? For a review of the first consumer NVMe, I'm sorely disappointed you didn't touch on latency metrics: one of the most important improvements with the NVMe bus.

Kristian Vättö - Friday, April 3, 2015 - link

There are several latency graphs in the article and I also suggest that you read the following article to better understand what latency and other storage metrics actually mean (hint: latency isn't really different from IOPS and throughput).

http://www.anandtech.com/show/8319/samsung-ssd-845...

Per Hansson - Friday, April 3, 2015 - link

Hi Kristian, what evidence do you have that the firmware in the SSD 750 is any different from that found in the DC P3600 / P3700?

According to leaked reports released before, they have the same firmware: http://www.tweaktown.com/news/43331/new-consumer-i...

And if you read the Intel changelog you see in firmware 8DV10130: "Drive sub-4KB sequential write performance may be below 1MB/sec"

http://downloadmirror.intel.com/23931/eng/Intel_SS...

Which was exactly what you found in the original review of the P3700:

http://www.anandtech.com/show/8147/the-intel-ssd-d...

http://www.anandtech.com/bench/product/1239

Care to retest with the new firmware?

I suspect you will get identical performance.

Per Hansson - Saturday, April 4, 2015 - link

I should be more clear: I mean that you retest the P3700.

And obviously the performance of the 750 won't match that, as it is based off the P3500.

But I think you get what I mean anyway ;)

djsvetljo - Friday, April 3, 2015 - link

I am unclear on which connector this will use. Does it use the video card PCI-E port?

I have an MSI Z97 MATE board that has one PCI-E gen3 x16 and one PCI-E gen2 x4. Will I be able to use it, and will I be limited somehow?

DanNeely - Friday, April 3, 2015 - link

If you use the 2.0 x4 slot, your maximum throughput will top out at 2GB/s. For client workloads this probably won't matter much, since only some server workloads can hit situations where the drive can exceed that rate.

djsvetljo - Friday, April 3, 2015 - link

So it uses the GPU express port although the card pins are visually shorter?

eSyr - Friday, April 3, 2015 - link

> although in real world the maximum bandwidth is about 3.2GB/s due to PCIe inefficiency

What does this phrase mean? If you're referring to 8b10b encoding, this is plainly false, since PCIe gen 3 uses 128b130b coding. If you're referring to the overheads related to TLP and DLLP headers, this depends on the device's and the PCIe RC's maximum transaction size. But even with the (minimal) 128 byte limit it would be 3.36 GB/s. In fact, modern PCIe RCs support much larger TLPs, thus eliminating header-related overheads.
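
The link-rate arithmetic discussed in the comments above can be checked with a short Python sketch. The function names are hypothetical, and the per-TLP overhead is an assumed parameter rather than a figure from the review or from Intel: the real value depends on header size and optional fields, and DLLP traffic (ACKs, flow control) is ignored here. An assumed overhead of 22 bytes per packet happens to land close to the 3.36 GB/s quoted for a 128-byte payload.

```python
# Per-lane transfer rate (GT/s) and line-code efficiency for each PCIe generation.
# Gen 1 and 2 use 8b/10b encoding; gen 3 uses 128b/130b.
PCIE_GENERATIONS = {
    1: (2.5, 8 / 10),
    2: (5.0, 8 / 10),
    3: (8.0, 128 / 130),
}


def raw_bandwidth_gbs(gen, lanes):
    """Link bandwidth in GB/s after line coding, before any packet overhead."""
    rate_gt, efficiency = PCIE_GENERATIONS[gen]
    # One transfer carries one bit per lane; divide by 8 to convert bits to bytes.
    return rate_gt * efficiency * lanes / 8


def payload_bandwidth_gbs(gen, lanes, payload_bytes, overhead_bytes):
    """Payload bandwidth in GB/s when every TLP carries payload_bytes of data
    plus overhead_bytes of framing, header and CRC (overhead is an assumption)."""
    raw = raw_bandwidth_gbs(gen, lanes)
    return raw * payload_bytes / (payload_bytes + overhead_bytes)


if __name__ == "__main__":
    ASSUMED_OVERHEAD = 22  # bytes per TLP; illustrative only, not from the article
    print(f"gen2 x4 raw: {raw_bandwidth_gbs(2, 4):.2f} GB/s")
    print(f"gen3 x4 raw: {raw_bandwidth_gbs(3, 4):.2f} GB/s")
    for payload in (128, 256, 512):
        bw = payload_bandwidth_gbs(3, 4, payload, ASSUMED_OVERHEAD)
        print(f"gen3 x4, {payload}-byte payload: {bw:.2f} GB/s")
```

Under these assumptions a gen 2 x4 slot tops out at 2.0 GB/s and a gen 3 x4 link at about 3.94 GB/s before packet overhead, and the overhead penalty shrinks as the payload size grows.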