The AMD FreeSync Review

by Jarred Walton on March 19, 2015 12:00 PM EST

Introduction to FreeSync and Adaptive Sync

The first time anyone talked about adaptive refresh rates for monitors – specifically applying the technique to gaming – was when NVIDIA demoed G-SYNC back in October 2013. The idea seemed so logical that I had to wonder why no one had tried to do it before. Certainly there are hurdles to overcome, e.g. what to do when the frame rate is too low, or too high; getting a panel that can handle adaptive refresh rates; supporting the feature in the graphics drivers. Still, it was an idea that made a lot of sense.

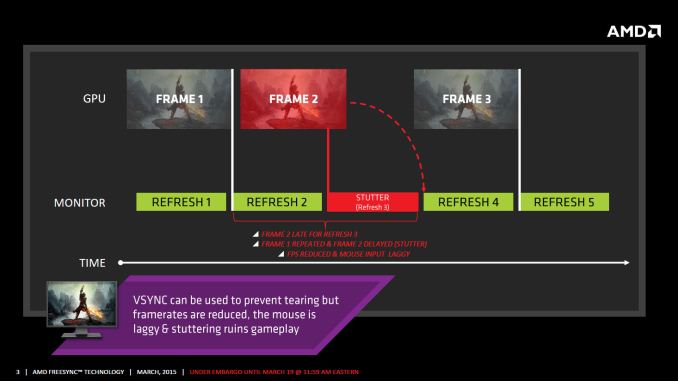

The impetus behind adaptive refresh is to overcome the visual artifacts and stutter caused by the normal way of updating the screen. Briefly, the display is updated with new content from the graphics card at set intervals, typically 60 times per second. While that's fine for normal applications, in games a new frame is often not ready in time, causing a stall or stutter in rendering. Alternatively, the screen can be updated as soon as a new frame is ready, but that often results in tearing, where the top part of the screen shows the previous frame and the bottom part shows the next frame (or frames, in some cases).
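To make the stutter described above more concrete, here is a simplified Python model that compares when frames reach the screen on a fixed 60 Hz display with v-sync against a display that refreshes on demand. This is a sketch under stated assumptions, not how any driver or scaler actually works: it assumes double buffering with no render-ahead queue, a hypothetical 144 Hz panel ceiling, and it does not model what happens when the frame rate drops below the panel's minimum refresh rate. The function names are illustrative only.

```python
import math
from typing import List


def fixed_refresh_vsync(render_ms: List[float], refresh_hz: float = 60.0) -> List[float]:
    """Times (ms) at which frames appear on a fixed-refresh display with v-sync.

    Assumption: double buffering, so the GPU starts the next frame only after
    the previous one has been shown at a refresh boundary.
    """
    tick = 1000.0 / refresh_hz
    shown, t = [], 0.0
    for r in render_ms:
        done = t + r
        # The finished frame waits for the next fixed refresh boundary.
        t = math.ceil(done / tick) * tick
        shown.append(t)
    return shown


def adaptive_refresh(render_ms: List[float], max_hz: float = 144.0) -> List[float]:
    """Times (ms) at which frames appear when the display refreshes on demand.

    The panel cannot refresh faster than max_hz (a hypothetical ceiling), so a
    frame finishing too soon after the previous refresh waits for that minimum.
    """
    min_interval = 1000.0 / max_hz
    shown, t, last = [], 0.0, -math.inf
    for r in render_ms:
        done = t + r
        # Refresh as soon as the frame is ready, unless that exceeds max_hz.
        t = max(done, last + min_interval)
        last = t
        shown.append(t)
    return shown


def intervals(times: List[float]) -> List[float]:
    """Gaps between consecutive on-screen updates, in ms."""
    return [b - a for a, b in zip(times, times[1:])]


frames = [20.0] * 5  # the GPU finishes a frame every 20 ms (a steady 50 fps)
print([round(x, 1) for x in intervals(fixed_refresh_vsync(frames))])  # [33.3, 33.3, 33.3, 33.3]
print([round(x, 1) for x in intervals(adaptive_refresh(frames))])     # [20.0, 20.0, 20.0, 20.0]
```

In this model a GPU producing 50 fps misses every other 60 Hz refresh, so the v-synced display only updates every 33.3 ms (30 fps), while the adaptive display simply refreshes every 20 ms.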

Neither input lag/stutter nor image tearing are desirable, so NVIDIA set about creating a solution: G-SYNC. Perhaps the most difficult aspect for NVIDIA wasn’t creating the core technology but rather getting display partners to create and sell what would ultimately be a niche product – G-SYNC requires an NVIDIA GPU, so that rules out a large chunk of the market. Not surprisingly, the result was that G-SYNC took a bit of time to reach the market as a mature solution, with the first displays that supported the feature requiring modification by the end user.

Over the past year we’ve seen more G-SYNC displays ship that no longer require user modification, which is great, but pricing of the displays so far has been quite high. At present the least expensive G-SYNC displays are 1080p144 models that start at $450; similar displays without G-SYNC cost about $200 less. Higher spec displays like the 1440p144 ASUS ROG Swift cost $759 compared to other WQHD displays (albeit not 120/144Hz capable) that start at less than $400. And finally, 4Kp60 displays without G-SYNC cost $400-$500 whereas the 4Kp60 Acer XB280HK will set you back $750.
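The price gaps quoted above are easier to compare in percentage terms. A quick back-of-the-envelope sketch in Python, using the approximate prices from the paragraph above: the roughly $250 figure for a non-G-SYNC 1080p144 panel is simply $450 minus the quoted $200 difference, and the ROG Swift is left out because the cheaper WQHD displays it is compared against are not 120/144Hz capable. The `premium` helper is hypothetical.

```python
from typing import Tuple


def premium(gsync_price: int, base_low: int, base_high: int) -> Tuple[Tuple[int, int], Tuple[float, float]]:
    """Return the (min, max) G-SYNC premium in dollars and as a percentage of the non-G-SYNC price."""
    # Smallest premium is against the most expensive comparable display, and vice versa.
    dollars = (gsync_price - base_high, gsync_price - base_low)
    percent = (100 * dollars[0] / base_high, 100 * dollars[1] / base_low)
    return dollars, percent


print(premium(450, 250, 250))  # 1080p144: ((200, 200), (80.0, 80.0))
print(premium(750, 400, 500))  # 4Kp60 Acer XB280HK: ((250, 350), (50.0, 87.5))
```

Depending on which non-G-SYNC 4K display it is compared against, the XB280HK carries a premium of roughly 50% to 87.5%.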

When AMD demonstrated their alternative adaptive refresh rate technology and cleverly called it FreeSync, it was a clear jab at the added cost of G-SYNC displays. As with G-SYNC, it has taken some time from the initial announcement to actual shipping hardware, but AMD has worked with the VESA group to implement FreeSync as an open standard that’s now part of DisplayPort 1.2a, and they aren’t getting any royalties from the technology. That’s the “Free” part of FreeSync, and while it doesn’t necessarily guarantee that FreeSync enabled displays will cost the same as non-FreeSync displays, the initial pricing looks quite promising.

There may be some additional costs associated with making a FreeSync display, though mostly these costs come in the form of higher quality components. The major scaler companies – Realtek, Novatek, and MStar – have all built FreeSync (DisplayPort Adaptive Sync) into their latest products, and since most displays require a scaler anyway there's no significant price increase. But if you compare a FreeSync 1440p144 display to a "normal" 1440p60 display of similar quality, the support for higher refresh rates inherently increases the price. So let's look at what's officially announced right now before we continue.

350 Comments


testbug00 - Thursday, March 19, 2015

Huh? It's just the brand that AMD is putting on adaptive sync for their GPUs. ???

farhadd - Friday, March 20, 2015

I would never buy a Gsync monitor because I want GPU flexibility down the road. Period. Unfortunately, with no assurance from Nvidia that they might support Freesync down the road, I'm afraid to invest in a Freesync monitor either. Both sides lose.

just4U - Friday, March 20, 2015

"Not sure why people are bashing Gsync"

It's not an open standard. That's the main reason to bash it.

JonnyDough - Monday, March 23, 2015

NVidia can state what they want. So can Apple. Companies that refuse to create standards with other companies for the benefit of the consumer will pay the price with actual money. This is part of why I buy AMD and not Nvidia products. I got tired of the lies, the schemes, and the confusion that they like to throw at those that fund them.

medi03 - Wednesday, March 25, 2015

Paying a $100+ premium, being locked in to a single manufacturer, and having only a single input port on a monitor is "fantastic" indeed.

DigitalFreak - Thursday, March 19, 2015

Your mom called. She said you need to clean your room.

anubis44 - Tuesday, March 24, 2015

@DigitalFreak: So your response to an intelligently argued point about not supporting a company that imposes proprietary standards is to say 'your mom called?' You're clearly a genius, aren't you?

SetiroN - Thursday, March 19, 2015

I'm disappointed Anandtech is forgetting this: there's a fundamental difference between G-Sync and freesync, which justifies the custom hardware and makes it worth it compared to something I'd rather stay without. Freesync requires v-sync to be active and is plagued with the additional latency that comes with it; gsync replaces it entirely.

Considering that the main reason anyone would want adaptive sync is to play decently even when the framerate dips at 30-40fps, where the 3 v-synced, pre-rendered frames account for a huge 30-40ms latency, AMD can shove its free solution up its arse as far as I'm concerned.

I'd rather have tearing and no latency, or no tearing and acceptable latency at lower settings (to allow me to play at 60fps), both of which don't require a new monitor.

For the considerable benefit of playing at lower-than-60 fps without tearing AND no additional latency, I'll gladly pay the nvidia premium (as soon as 4K 120Hz IPS panels are available).

FriendlyUser - Thursday, March 19, 2015

Did you read the article? Of course not! VSync On or Off only comes into play when outside the refresh rate range of the monitor and is an option that GSync does not have. If you have GSync you are forced into VSync On when outside the refresh range of the monitor.

200380051 - Thursday, March 19, 2015

No, it's the other way around. Freesync does not require V-Sync; you can actually choose, and it will impact the stuttering/tearing or display latency. OTOH, G-Sync does exactly what you said: V-Sync on, when outside of the dynamic sync range. Read more carefully, pal.