The AMD FreeSync Review

by Jarred Walton on March 19, 2015 12:00 PM EST

Introduction to FreeSync and Adaptive Sync

The first time anyone talked about adaptive refresh rates for monitors – specifically applying the technique to gaming – was when NVIDIA demoed G-SYNC back in October 2013. The idea seemed so logical that I had to wonder why no one had tried to do it before. Certainly there are hurdles to overcome: what to do when the frame rate is too low or too high, getting a panel that can handle adaptive refresh rates, and supporting the feature in the graphics drivers. Still, it was an idea that made a lot of sense.

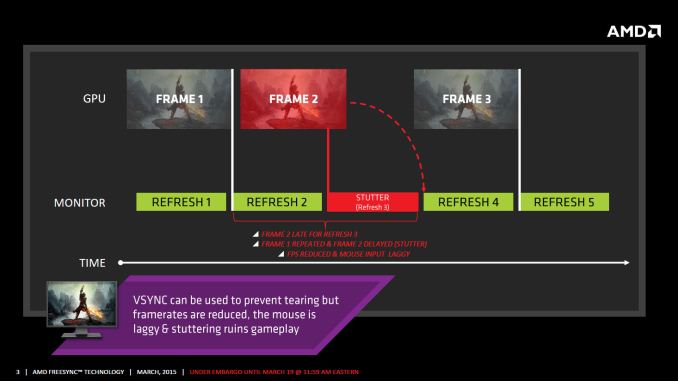

The impetus behind adaptive refresh is to overcome the visual artifacts and stutter caused by the normal way of updating the screen. Briefly, the display is updated with new content from the graphics card at set intervals, typically 60 times per second. While that’s fine for normal applications, in games there are often cases where a new frame isn’t ready in time, so the previous frame is shown again and the result is a stall or stutter. Alternatively, the screen can be updated as soon as a new frame is ready, but that often results in tearing – where the top part of the screen shows the previous frame and the bottom part shows the next frame (or frames, in some cases).
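To make the fixed-refresh stutter concrete, here is a minimal Python sketch under simplifying assumptions: a 60Hz panel with VSync on, frames that finish rendering every 20 ms (a steady 50 FPS), and render times that are not delayed by VSync back-pressure. The function names are illustrative only, not part of any driver API. With VSync each frame waits for the next fixed refresh, while an adaptive refresh display simply refreshes when the frame is ready.

```python
# Minimal sketch: how long each frame stays on screen with fixed-refresh VSync
# versus adaptive refresh. Assumes a 60 Hz panel and ignores VSync back-pressure.

REFRESH_HZ = 60  # assumed fixed refresh rate of a conventional display


def vsync_refresh_index(ready_ms: int) -> int:
    """Index of the first fixed refresh at or after the moment a frame is ready."""
    # ceil(ready_ms * REFRESH_HZ / 1000) done in integer math to avoid float rounding
    return -(-ready_ms * REFRESH_HZ // 1000)


def vsync_hold_times_ms(ready_times_ms: list[int]) -> list[float]:
    """On-screen time of each frame when it must wait for the next fixed refresh."""
    period_ms = 1000 / REFRESH_HZ
    indices = [vsync_refresh_index(t) for t in ready_times_ms]
    return [round((b - a) * period_ms, 2) for a, b in zip(indices, indices[1:])]


def adaptive_hold_times_ms(ready_times_ms: list[int]) -> list[int]:
    """With adaptive refresh (inside the panel's range) each frame is shown when ready."""
    return [b - a for a, b in zip(ready_times_ms, ready_times_ms[1:])]


if __name__ == "__main__":
    frames = [20, 40, 60, 80, 100, 120]  # hypothetical steady 50 FPS render times (ms)
    print(vsync_hold_times_ms(frames))     # [16.67, 16.67, 16.67, 16.67, 33.33]
    print(adaptive_hold_times_ms(frames))  # [20, 20, 20, 20, 20]
```

Even at a perfectly steady 50 FPS, the fixed 60Hz display holds every fifth frame for two refreshes, which is the uneven pacing perceived as stutter; in the adaptive case every frame stays on screen for the same 20 ms.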

Neither input lag/stutter nor image tearing is desirable, so NVIDIA set about creating a solution: G-SYNC. Perhaps the most difficult aspect for NVIDIA wasn’t creating the core technology but rather getting display partners to create and sell what would ultimately be a niche product – G-SYNC requires an NVIDIA GPU, which rules out a large chunk of the market. Not surprisingly, G-SYNC took a while to reach the market as a mature solution, and the first displays that supported the feature required modification by the end user.

Over the past year we’ve seen more G-SYNC displays ship that no longer require user modification, which is great, but pricing of the displays so far has been quite high. At present the least expensive G-SYNC displays are 1080p144 models that start at $450; similar displays without G-SYNC cost about $200 less. Higher-spec displays like the 1440p144 ASUS ROG Swift cost $759, compared to other WQHD displays (albeit not 120/144Hz capable) that start at less than $400. And finally, 4Kp60 displays without G-SYNC cost $400-$500, whereas the 4Kp60 Acer XB280HK will set you back $750.

When AMD demonstrated their alternative adaptive refresh rate technology and cleverly called it FreeSync, it was a clear jab at the added cost of G-SYNC displays. As with G-SYNC, it has taken some time from the initial announcement to actual shipping hardware, but AMD has worked with the VESA group to implement FreeSync as an open standard that’s now part of DisplayPort 1.2a, and they aren’t getting any royalties from the technology. That’s the “Free” part of FreeSync, and while it doesn’t necessarily guarantee that FreeSync enabled displays will cost the same as non-FreeSync displays, the initial pricing looks quite promising.

There may be some additional costs associated with making a FreeSync display, though mostly these come in the form of higher-quality components. The major scaler companies – Realtek, Novatek, and MStar – have all built FreeSync (DisplayPort Adaptive Sync) into their latest products, and since most displays require a scaler anyway there’s no significant price increase. But if you compare a FreeSync 1440p144 display to a “normal” 1440p60 display of similar quality, the support for higher refresh rates inherently increases the price. So let’s look at what’s officially announced right now before we continue.

350 Comments

chizow - Thursday, March 19, 2015

@lordken And yes, I am well aware G-Sync is tied to Nvidia, lol, but like I said, I will bet on the market leader with ~70% market share and installed user base (actually much higher than this, since Kepler is 100% vs. GCN 1.1 at maybe 30% of the cards sold since 2012) over the solution that holds a minor share of the dGPU market and an even smaller share of the CPU/APU market.

chizow - Thursday, March 19, 2015

And why don't you drop your biased preconceptions and actually read some articles that don't just take AMD's slide decks at face value? Read a review that actually tries to tackle the real issues I am referring to, while actually TALKING to the vendors and doing some investigative reporting: http://www.pcper.com/reviews/Displays/AMD-FreeSync...

You will see that there are still some major issues with FreeSync that need to be answered and addressed.

JarredWalton - Thursday, March 19, 2015

It's not a "major issue" so much as a limitation of the variable refresh rate range and how AMD chooses to handle it. With NVIDIA, the display refreshes the same frame at least twice if you drop below 30Hz; that's fine, but it would have to introduce some lag. (While a frame is being refreshed, there's no way to send the next frame to the screen = lag.) AMD gives two options: VSYNC off or VSYNC on. With VSYNC off, you get tearing but less lag/latency. With VSYNC on, you get stuttering if you fall below the minimum VRR rate.

The LG displays are actually not a great option here, as a 48Hz minimum is rather high; 45 FPS, for example, will give you tearing or stutter. So you basically want to choose settings for games such that you can stay above 48 FPS with this particular display. But that's certainly no worse than the classic way of doing things, where people either live with tearing or aim for 60+ FPS, and 48 FPS is more easily achieved than 60 FPS.
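The two options described above can be summarized as a small decision function. This is a hedged Python sketch, not AMD's driver logic: the below-window behavior follows the comment, while the above-window behavior (capping at the maximum refresh with VSYNC on, tearing with it off) and the 75Hz upper limit used for the LG 34UM67 are assumptions for illustration. `VrrWindow` and `freesync_behavior` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VrrWindow:
    """Variable refresh range a panel supports, in Hz (hypothetical helper type)."""
    min_hz: float
    max_hz: float


def freesync_behavior(fps: float, window: VrrWindow, vsync_on: bool) -> str:
    """Rough outcome for a given frame rate under the two VSYNC options described above."""
    if fps < window.min_hz:
        # Below the window the panel falls back to fixed-refresh behavior.
        return "stutter" if vsync_on else "tearing"
    if fps > window.max_hz:
        # Assumption: the same VSYNC choice applies at the top of the range.
        return "capped" if vsync_on else "tearing"
    return "variable refresh"


lg_34um67 = VrrWindow(min_hz=48, max_hz=75)  # 75 Hz maximum is an assumption here
for fps in (45, 60):
    for vsync in (True, False):
        print(fps, "FPS, VSYNC", "on" if vsync else "off", "->",
              freesync_behavior(fps, lg_34um67, vsync))
```

At 45 FPS the LG lands below its 48Hz floor, so the only choices are stutter or tearing, which is why keeping game settings above 48 FPS matters on this display.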

The problem right now is that we're all stuck comparing different implementations. A 2560x1080 IPS display is inherently different from a 2560x1440 TN display. LG decided 48Hz was the minimum refresh rate, most likely to avoid flicker; others have allowed some flicker while going down to 30Hz. You'll definitely see flicker on G-SYNC at 35FPS/35Hz in my experience, incidentally. I can't knock FreeSync and AMD for a problem that is arguably the fault of the display, so we'll look at it more when we get to the actual display review.

As to the solution, well, there's nothing stopping AMD from just resending a frame if the FPS is too low. They haven't done this in the current driver, but this is FreeSync 1.0 beta.
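Whether resending frames can help depends on the width of the panel's refresh window. The following Python sketch assumes each frame is simply repeated a whole number of times and that every refresh must stay inside the panel's supported range; `repeat_factor` and `repetition_always_possible` are hypothetical helpers, and the 75Hz maximum for the LG panel is an assumption.

```python
from typing import Optional


def repeat_factor(fps: float, min_hz: float, max_hz: float) -> Optional[int]:
    """Smallest whole number of times to show each frame so that the panel's
    refresh rate (fps * k) lands inside [min_hz, max_hz]; None if impossible."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    k = 1
    while fps * k <= max_hz:
        if fps * k >= min_hz:
            return k
        k += 1
    return None  # either fps is above the window, or every multiple skips over it


def repetition_always_possible(min_hz: float, max_hz: float) -> bool:
    """If max_hz >= 2 * min_hz, some multiple of any frame rate below min_hz
    falls inside the window: the smallest multiple reaching min_hz is below
    min_hz + fps < 2 * min_hz <= max_hz."""
    return max_hz >= 2 * min_hz


print(repeat_factor(45, 48, 75))   # None: 45 Hz is too slow and 90 Hz is too fast
print(repeat_factor(25, 30, 144))  # 2: each frame shown twice, 50 Hz
print(repetition_always_possible(48, 75), repetition_always_possible(30, 144))  # False True
```

With the LG's 48Hz floor and an assumed 75Hz ceiling, 45 FPS cannot be rescued by repetition at all, since doubling it overshoots the maximum; a 30-144Hz panel has enough headroom for any frame rate below its minimum.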

Final thought: I don't think most people looking to buy the LG 34UM67 are going to be using a low-end GPU, and in fact with current prices I suspect most people who upgrade will already have an R9 290/290X. Part of the reason I didn't notice issues with FreeSync is that with a single R9 290X the FPS is well over 48 in most games. More time is needed for testing, obviously, and a single LCD with FreeSync isn't going to represent all future FreeSync displays. Basically, don't try to draw conclusions from one sample.

chizow - Friday, March 20, 2015

@Jarred How is it not a major issue? You think that level of ghosting is acceptable and comparable to G-Sync!?!?! My, have your standards dropped. If that is the case, I do not think you are qualified to write this review; at least post it under Editorial, or even better, under the AMD sponsored banner.

Fact is, below the stated minimum refresh, FreeSync is WORSE than a non-VRR monitor would be, as all the tearing and input lag are there AND you get awful flickering and ghosting too.

And how do you know it is a limitation of panel technology when Nvidia's solution exhibits none of these issues at typical refresh rates as low as 20Hz, and especially not at the higher refresh rates where AMD starts to experience them? Don't you have access to the sources and players here? I mean, we know you have AMD's side of the story, but why don't you ask these same questions of Nvidia, the scaler makers, and the monitor makers as well? It could certainly be a limitation of the spec, don't you think? If monitor makers are just designing a monitor to AMD's FreeSync spec, and AMD is claiming they can alleviate this via a driver update, it sounds to me like the limitation is in the specification, not the technology, especially when Nvidia's solution does not have these issues. In fact, if you had asked Nvidia, as PCPer did, they may very well have explained to you why FreeSync ghosts/flickers and their solution does not. From PCPer, again:

"But in a situation where the refresh rate can literally be ANY rate, as we get with VRR displays, the LCD will very often be in these non-tuned refresh rates. NVIDIA claims its G-Sync module is tuned for each display to prevent ghosting by changing the amount of voltage going to pixels at different refresh rates, allowing pixels to untwist and retwist at different rates."

Science and hardware trumps hunches and hearsay, imo. :)

Also, you might need to get with Ryan to fully understand the difference between G-Sync and FreeSync at low refresh rates. G-Sync simply displays the same frame twice. There is no sense of input lag; you would only get input lag if the next refresh were tied to a different input. That is not the case with G-Sync, because the repeated second refresh is still tied to the input of the first frame, while the next live frame has live input. All you perceive is low FPS, not input lag. There is a difference. It would be like playing a game at 30FPS on a 60Hz monitor with no VSync. Still, that's certainly much better than AMD's solution of having everything fall apart at a frame rate that is still quite high and hard to obtain for many video cards.

The LG is a horrible situation; who wants to be tied to a solution that is only effective in such a tight frame rate band? If you are actually going to do some "testing", why don't you test something meaningful, like a gaming session that shows the % of frames, in a particular game with a particular graphics card, that fall outside of the "supported" refresh rates? I think you will find the amount of time spent outside of these bands is actually pretty high in the demanding titles on the market today at resolutions above 1080p.
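The test proposed here is straightforward to express in code. A minimal Python sketch follows, assuming you already have a per-frame log of frame times in milliseconds from a capture tool; the frame times below are made up for illustration, not measured, and `fraction_outside_window` is a hypothetical helper.

```python
def fraction_outside_window(frame_times_ms: list[float], min_hz: float, max_hz: float) -> float:
    """Share of frames whose instantaneous rate (1000 / frame time) falls
    outside the panel's variable refresh window."""
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    outside = sum(1 for ft in frame_times_ms
                  if not (min_hz <= 1000.0 / ft <= max_hz))
    return outside / len(frame_times_ms)


# Hypothetical frame-time log (ms), NOT measured data
log = [16.0, 18.5, 22.0, 25.0, 19.0]  # about 62.5, 54, 45, 40 and 53 FPS
print(fraction_outside_window(log, 48, 75))   # 0.4 for a 48-75Hz window
print(fraction_outside_window(log, 30, 144))  # 0.0 for a 30-144Hz window
```

Counting frames is one choice; weighting each frame by its duration instead would give the share of play time spent outside the window, which counts slow frames more heavily.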

And you definitely see flicker at 35fps/35Hz on a G-Sync panel? Prove it. I have an ROG Swift and there is no flicker even as low as 20FPS, which is common in the CPU-limited MMO games out there. Not any noticeable flicker. You have access to both technologies, so prove it. Post a video, post pictures, post the kind of evidence and do the kind of testing you would expect from a professional reviewer on a site like AT, instead of addressing the deficiencies in your article with hearsay and anecdotal evidence.

Last part: again, I'd recommend running the test I suggested on multiple panels with multiple cards and mapping out the frame rates to see the % that fall outside or below these minimum FreeSync thresholds. I think you would be surprised, especially given many of these panels are above 1080p. Even that LG is only ~1.35x 1080p, but most of these panels are 1440p premium panels, and I can tell you for a fact a single 970/290/290X/980 class card is NOT enough to maintain 40+FPS in many recent demanding games at high settings. And as of now, CF is not an option. So that's another strike against FreeSync: if you want to use it, your realistic options are a 290/X at the minimum, or there's a real possibility you will be below the minimum threshold.

Hopefully you don't take this too harshly or personally; while there are some directed comments in there, there's also a lot of constructive feedback. I have been a fan of some of your work in the past, but this is certainly not your best effort or an effort worthy of AT, imo. The biggest problem I have, and we've gotten into it a bit in the past, is that you repeat many of the same misconceptions that helped shape and perpetuate all the "noise" surrounding FreeSync. For example, you mention it again in this article, yet do we have any confirmation from ANYONE that existing scalers and panels can simply be flashed to FreeSync with a firmware update? If not, why bother repeating the myth?

Darkito - Friday, March 20, 2015

@Jared What do you make of this PC Perspective article?

http://www.pcper.com/reviews/Displays/AMD-FreeSync...

"G-Sync treats this “below the window” scenario very differently. Rather than reverting to VSync on or off, the module in the G-Sync display is responsible for auto-refreshing the screen if the frame rate dips below the minimum refresh of the panel that would otherwise be affected by flicker. So, in a 30-144 Hz G-Sync monitor, we have measured that when the frame rate actually gets to 29 FPS, the display is actually refreshing at 58 Hz, each frame being “drawn” one extra instance to avoid flicker of the pixels but still maintains a tear free and stutter free animation. If the frame rate dips to 25 FPS, then the screen draws at 50 Hz. If the frame rate drops to something more extreme like 14 FPS, we actually see the module quadruple drawing the frame, taking the refresh rate back to 56 Hz. It’s a clever trick that keeps the VRR goals and prevents a degradation of the gaming experience. But, this method requires a local frame buffer and requires logic on the display controller to work. Hence, the current implementation in a G-Sync module."

Especially those last few sentences. You say AMD can just duplicate frames like G-Sync, but according to this article it's actually something in the G-Sync module that enables it. Is there truth to that?
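Wherever the repetition happens, the three figures PCPer reports also say something about the rule being used. The Python sketch below tests one hypothetical policy, "show each frame the smallest whole number of times that lifts the refresh rate to at least a target", against those figures (29 FPS at 58 Hz, 25 FPS at 50 Hz, 14 FPS at 56 Hz on a 30-144 Hz panel). This is an inference from three data points, not NVIDIA's documented algorithm; the function names are illustrative.

```python
import math

# (frame rate, measured refresh rate) pairs quoted from PCPer for a 30-144 Hz G-Sync panel
PCPER_OBSERVATIONS = [(29, 58), (25, 50), (14, 56)]


def multiplier_for_target(fps: int, target_hz: int) -> int:
    """Hypothetical policy: smallest k >= 1 with fps * k >= target_hz."""
    return max(1, math.ceil(target_hz / fps))


def consistent_targets(observations, lo_hz: int = 30, hi_hz: int = 144) -> list[int]:
    """Integer targets for which the hypothetical policy reproduces every observation."""
    return [target for target in range(lo_hz, hi_hz + 1)
            if all(fps * multiplier_for_target(fps, target) == hz
                   for fps, hz in observations)]


print(multiplier_for_target(14, 30))           # 3: targeting the 30 Hz floor would give 42 Hz
print(consistent_targets(PCPER_OBSERVATIONS))  # [43, 44, 45, 46, 47, 48, 49, 50]
```

A rule aimed only at the panel's 30 Hz minimum would triple a 14 FPS frame (42 Hz) rather than quadruple it, so the module appears to target a somewhat higher refresh rate; with only three measurements, any target between 43 and 50 Hz fits equally well.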

Socketofpoop - Thursday, March 19, 2015

Not worth the typing effort. Chizow is a well-known Nvidia fanboy, or possibly a shill for them. As long as it is green, it is the best to him. Bent over, cheeks spread and ready for Nvidia's next salvo all the time.

chizow - Friday, March 20, 2015

@Socketofpoop, I'm well known among AMD fanboys! I'm so flattered!

I would ask this of you and the lesser-known AMD fanboys out there. If a graphics card had all the same great features, performance, and support at the existing prices that Nvidia offers, but had an AMD logo and a red cooler on the box, I would buy the AMD card in a heartbeat. No questions asked. Would you, if the roles were reversed? Of course not, because you're an AMD fan and obviously brand preference matters more to you than which product is actually better.

Black Obsidian - Thursday, March 19, 2015

I hate to break it to you, but history has not been kind to the technically superior but proprietary and/or higher-cost solution. HD DVD, MiniDisc, LaserDisc, Betamax... the list goes on.

JarredWalton - Thursday, March 19, 2015

Something else interesting to note is that there are 11 FreeSync displays already in the works (with supposedly nine more unannounced), compared to seven G-SYNC displays. In terms of numbers, FreeSync on the day of launch has nearly caught up to G-SYNC.

chizow - Thursday, March 19, 2015

Did you pull that off AMD's slide deck too, Jarred? What's interesting to note is that you list the FreeSync displays "in the works" without counting the G-Sync panels "in the works"? And 3 monitors is now "nearly caught up to" 7? Right.

A brand new panel is a big investment (not really), so I guess everyone should place their bets carefully. I'll bet on the market leader that holds a commanding share of the dGPU market, consistently provides the best graphics cards, great support and features, and isn't riddled with billions in debt with a gloomy financial outlook.