Arm Announces Mali-G76 GPU: Scaling up Bifrost

by Ryan Smith & Andrei Frumusanu on May 31, 2018 3:00 PM EST

The Mali G76 - Scaling It Up

Section by Ryan Smith

Mali-G76 is an interesting change for Arm’s GPU designs, because it changes some fundamental aspects of the Bifrost architecture, and yet in other respects it changes nothing at all.

At a very high level, there are no feature changes with respect to graphics, and only some small changes when it comes to compute (more on that in a moment). So there is little to talk about here in terms of end-user functionality or flashy features. Bifrost was already a modern graphics architecture, and the state of 3D graphics technology hasn’t significantly changed in the last two years to invalidate that.

Instead, like Mali-G72 before it, G76 is another optimization pass on the underpinnings of the architecture. And compared to G72, G76 is a much greater pass that as a result makes some significant changes in how Arm’s GPUs work. It’s still very much the Bifrost architecture, but it’s actually one of the biggest changes we’ve ever seen within a single graphics architecture from one revision to the next, and that goes for both mobile and PC.

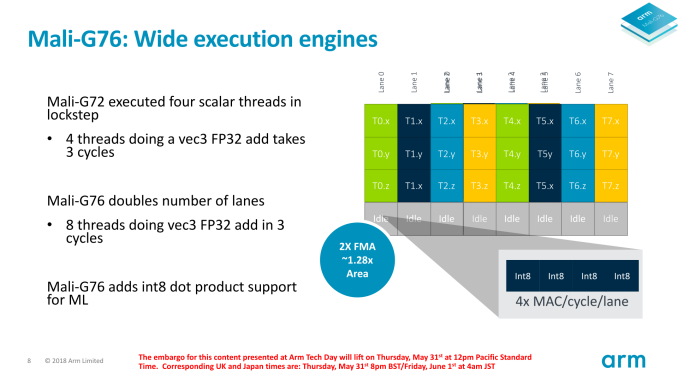

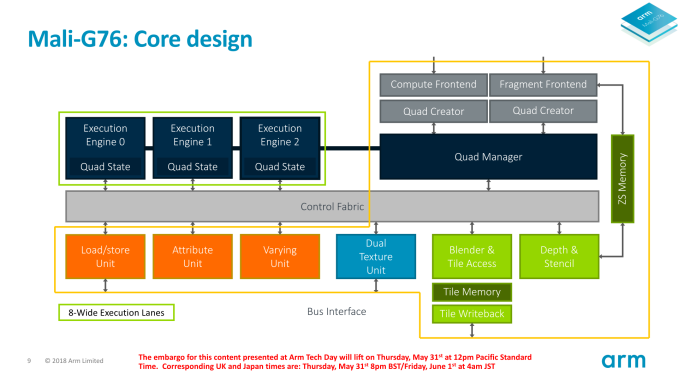

The big change here is that in an effort to further boost the performance and area efficiency of the architecture, Arm is doubling the width of their fundamental compute block, the “quad” execution engine. In both the Mali-G71 and G72, a quad is just that: a 4-wide SIMD unit, with each lane possessing separate FMA and ADD/SF pipes. Fittingly, the width of a wavefront at the ISA-level for these parts has also been just 4 instructions, meaning all of the threads within a wavefront are issued in a single cycle. Overall, Bifrost’s use of a 4-wide design was a notably narrow choice relative to most other graphics architectures.

But the quad is a quad no longer. For Mali-G76, Arm is going big. The eponymous quad is now an 8-wide SIMD. In other words, a Mali-G76 quad – and for that matter an entire core – now has twice as many ALUs as before. All of the features are the same, as is the execution model within a quad, but now Arm can weave together and execute 8 threads per clock per quad versus 4 on past Bifrost parts.

This is a very interesting change because, simply put, the size of a wavefront is typically a defining feature of an architecture. For long-lived architectures, especially in the PC space, wavefront sizes haven’t changed for years. NVIDIA has used a 32-wide wavefront (warp) going all the way back to G80 in 2006, and AMD’s 64-wide wavefront goes back to the pre-GCN days. As a result, this is the first time we have seen a vendor change the size of their wavefront in the middle of an architectural generation.

Now there are several ramifications of this, both for efficiency purposes and coding purposes. But before going too far, I want to quickly recap part of our Mali-G71 article from 2016, discussing the rationale for Arm’s original 4-wide wavefront design.

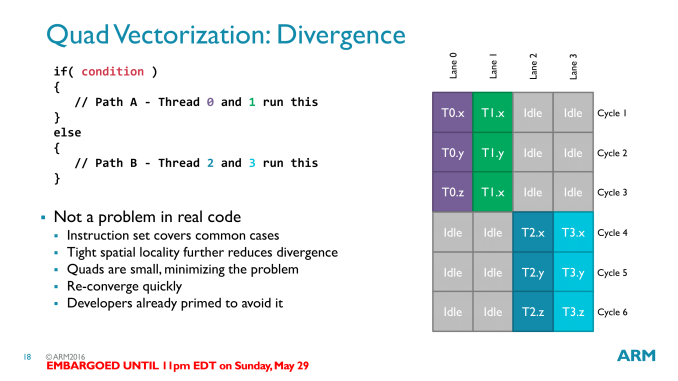

Moving on, within the Bifrost architecture, ARM’s wavefronts are called Quads. True to the name, these are natively 4 threads wide, and a row of instructions from a full quad is executed per clock cycle. Bifrost’s wavefront size is particularly interesting here, as quads are much smaller than competing architectures, which are typically in the 16-32 thread range. Wavefront design in general reflects the need to find a balance between resource/area density and performance. Wide wavefronts require less control logic (ex: 32 threads with 1 unit of control logic, versus 4x8 threads with 8 units of control logic), but at the same time the wider the wavefront, the harder it is to fill. ARM’s GPU philosophy in general has been concerned with trying to avoid execution stalls, and their choice in wavefront size reflects this. By going with a narrower wavefront, a group of threads is less likely to diverge – that is, take different paths, typically as a result of a conditional statement – than a wider wavefront. Divergences are easy enough to handle (just follow both paths), but the split hurts performance.

At the time, Arm said that they went with a 4-wide wavefront in order to minimize the occurrence of idle ALUs from thread divergence. On paper this is a sound strategy: if you’re expecting a lot of branching code, then ALUs left idle by thread divergence are doing nothing of value for you. A great deal of effort goes into balancing an architecture design around this choice, and particularly in the PC space, once you choose a size you’re essentially stuck with it, as developers will optimize against it.
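To put rough numbers on that divergence argument, here is a minimal sketch in Python. It is a toy model and not anything Arm has described: it assumes each thread takes a branch independently with the same probability (`p_taken`, a hypothetical parameter), and that a diverged wavefront simply runs both paths back to back at equal cost. Real shader code is far more coherent than independent coin flips, so the output is directional only.

```python
# Toy model of branch divergence versus wavefront width.
# Assumptions (not from Arm): threads branch independently with
# probability p_taken, and a diverged wavefront pays for both paths.

def divergence_probability(width: int, p_taken: float) -> float:
    """Chance that the threads of one wavefront do not all agree on a branch."""
    all_taken = p_taken ** width
    none_taken = (1.0 - p_taken) ** width
    return 1.0 - all_taken - none_taken


def lane_utilization(width: int, p_taken: float) -> float:
    """Useful lane-slots divided by issued lane-slots for a single branch.

    A coherent wavefront issues one pass; a diverged one issues two,
    with each lane doing useful work in only one of them.
    """
    return 1.0 / (1.0 + divergence_probability(width, p_taken))


if __name__ == "__main__":
    p_taken = 0.05  # hypothetical: 5% of threads take the rare path
    for width in (4, 8, 32):
        print(
            f"width {width:2d}: "
            f"P(diverge) = {divergence_probability(width, p_taken):.3f}, "
            f"utilization = {lane_utilization(width, p_taken):.3f}"
        )
```

Under these assumptions the narrower wavefront always wastes fewer lane-slots, which is the case for a 4-wide design; what the model leaves out is the control logic each wavefront needs, which is the other side of the trade-off.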

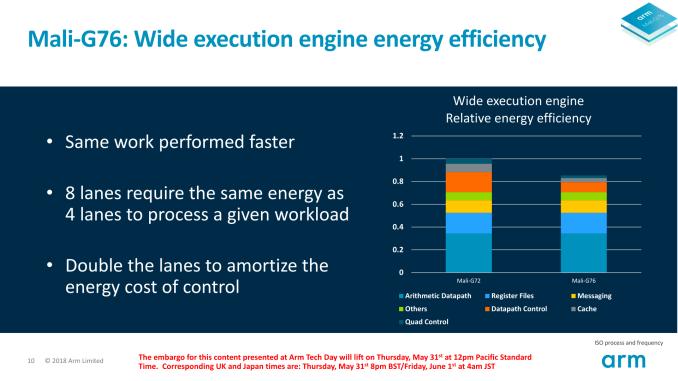

However the trade-off for a narrow wavefront and the resulting SIMDs is that the ratio of control logic to ALUs is quite a bit higher. Every SIMD is supported by a mix of cache, dispatch control hardware, internal datapaths, and other hardware. The size of this logic is somewhat fixed due to its functionality, so a wider SIMD doesn’t require much of an increase in the size of the supporting hardware. And it’s this trade-off that Arm is targeting for Mali-G76.

The net result of switching to an 8-wide SIMD design here is that Arm is decreasing the control logic to ALU ratio – or perhaps it’s better said that they’re increasing the ratio of ALUs to control logic. In the case of G76, for example, despite doubling the lanes and theoretical throughput of an execution engine, the resulting block is only about 28% larger than one of Mali-G72’s engines. Scale this up over an entire GPU, and you can easily see how this can be a more area-efficient option.
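The arithmetic behind that claim is short enough to write out. The sketch below uses only the two figures quoted above (twice the lanes, roughly 28% more area per execution engine); the variable and function names are illustrative, and the area figure is Arm's approximate number rather than a measurement.

```python
# Back-of-the-envelope ALU density for one execution engine.
# Inputs come from the article; names are illustrative only.

LANES_G72 = 4             # 4-wide SIMD per engine on Mali-G72
LANES_G76 = 8             # 8-wide SIMD per engine on Mali-G76
RELATIVE_AREA_G76 = 1.28  # G76 engine is ~28% larger than a G72 engine


def relative_alu_density(lane_ratio: float, area_ratio: float) -> float:
    """ALUs per unit area, relative to the baseline engine."""
    return lane_ratio / area_ratio


density_gain = relative_alu_density(LANES_G76 / LANES_G72, RELATIVE_AREA_G76)
print(f"ALUs per unit of engine area: {density_gain:.2f}x the G72 engine")
```

In other words, at the execution engine level the wider design packs a little over one and a half times as many ALUs into the same area, assuming the 28% figure holds.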

Though not explicitly said by Arm in their briefings, our interpretation of this change is that it’s a bit of an admission that the 4-wide design of the original Bifrost architecture was overzealous; that thread divergence in real-world code isn’t high enough to justify the need for such a narrow SIMD. For their part, Arm did confirm that they see the granularity requirements of GPU code (games and compute alike) being different than what they were when G71 launched. And in the meantime this also helps Arm’s scalability efforts, as the more area-dense quad design means that Arm can pack more of them in the same die space, getting a larger number of ALUs per mm² overall.

This change also brings the Mali-G7x GPUs in-line with the Mali-G52, which uses the same 8-wide SIMD design and was launched by Arm in a more low-key manner back in March of this year. So while G76 is technically the second Arm GPU design announced with this change, it’s been our first real chance to sit down with Arm and see what they’re thinking.

It goes without saying then that we’re curious to see what the real-world performance impacts of this change are like. Given just how uncommonly narrow Arm’s quads were, it should be pretty easy to similarly fill an 8-wide SIMD design, and in that respect, I suspect Arm is right about wider being a better choice. However wider designs do require some smarter compiler programming in order to ensure you can keep the wider SIMDs similarly filled, so Arm’s driver team has a part to play in all of this as well.

Thankfully for Arm, the mobile market is not nearly as bound to wavefront size as the PC market is, which allows Arm to get away with a mid-generation change like this. Developers aren’t writing customized code specifically for Arm’s GPUs in the way they are in the PC space; rather, everything is significantly abstracted (and overall left rather generic) through OpenGL ES, Vulkan, and other graphics/compute APIs. So for mobile developers and for existing game/application binaries, this underlying change should be completely hidden by the combination of APIs and Arm’s drivers.

As an aside, doubling the number of SIMD lanes within a quad has also led Arm to double the relevant supporting cache and pathways as well. While Arm doesn’t officially disclose the size of a quad’s register file, they have confirmed that there are 64 registers per lane for G76’s register file, just like there were for Mali-G72. So on a relative basis, register file pressure is unchanged.

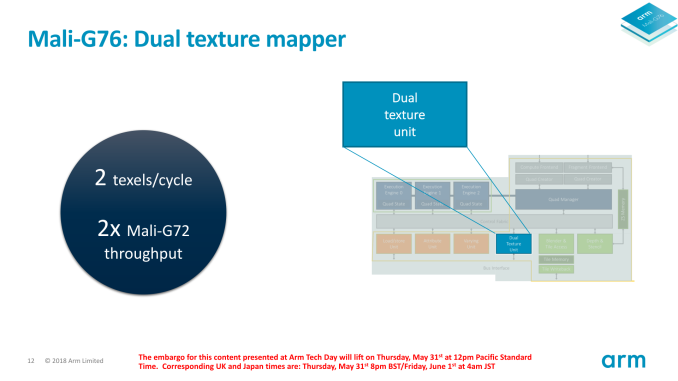

Fittingly, Arm has also doubled the throughput of their pixel and texel hardware to keep up with the wider quads. A single core can now spit out 2 texels and 2 pixels per clock, maintaining the same ALU/texture and ALU/pixel ratios as before. To signify this on the Mali-G76, Arm now calls this their “Dual Texture Unit”, as opposed to the single “Texture Unit” on G72 and G71.

The end result of all of this, as Andrei once put it, is that in a sense Arm has smashed two Mali-G72 cores together to make a single G76 core. At equal clockspeeds, compute, texture, and pixel throughput have all been doubled, so on paper a single G76 core should deliver virtually identical performance to a pair of G72 cores. However the benefit to Arm is that this design takes up a lot less space than two whole cores; Arm essentially gets the same per-clock performance in about 66% of the die area of an equivalent G72 design, greatly boosting their area efficiency, an always important metric for the silicon integrators who bring Arm’s GPU designs to life.
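For a sense of what that 66% figure means at the core level, the sketch below works through it in Python. It rests on one reading of the claim, namely that the "equivalent G72 design" is two G72 cores for every G76 core; the normalized area unit and all names are illustrative assumptions, not Arm's figures.

```python
# Core-level area arithmetic, normalized so one Mali-G72 core = 1.0 area.
# Assumption: "equivalent G72 design" means two G72 cores per G76 core.

G72_CORE_AREA = 1.0        # normalized unit, not a real mm^2 figure
G72_CORES_PER_G76 = 2      # one G76 core matches two G72 cores per clock
AREA_FRACTION = 0.66       # from the article: ~66% of the equivalent area

# Area of one G76 core, measured in G72 cores.
g76_core_area = AREA_FRACTION * G72_CORES_PER_G76 * G72_CORE_AREA

# Per-clock throughput per unit area, relative to G72.
throughput_per_area_gain = G72_CORES_PER_G76 / g76_core_area


def area_in_g72_cores(g76_cores: int) -> float:
    """Die area of a G76 configuration, expressed in G72-core units."""
    return g76_cores * g76_core_area


print(f"One G76 core occupies about {g76_core_area:.2f} G72 cores of area")
print(f"Throughput per unit area: {throughput_per_area_gain:.2f}x")
print(f"A 10-core G76 needs about {area_in_g72_cores(10):.1f} G72 cores of area")
```

Read this way, an integrator gets the per-clock throughput of twenty G72 cores from ten G76 cores in roughly the area of thirteen.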

25 Comments

eastcoast_pete - Friday, June 1, 2018 - link

I expect some headwind for this, but bear with me. Firstly, great that ARM keeps pushing forward on the graphics front, this does sound promising. Here my crazy (?) question: would a MALI G76 based graphics card for a PC (laptop or desktop) be a. feasible and b. be better/faster than Intel embedded. Like many users, I have gotten frustrated with the crypto-craze induced price explosion for NVIDIA and AMD dedicated graphics, and Intel seems to have thrown in the towel on making anything close to those when it comes to graphics. So, if one can run WIN 10 on ARM chips, would a graphics setup with, let's say, 20 Mali G76 cores, be worthwhile to have? How would it compare to lower-end dedicated graphics from the current duopoly? Any company out there ambitious and daring enough to try?

eastcoast_pete - Friday, June 1, 2018 - link

Just to clarify: I mean a dedicated multicore (20, please) PCIe-connected MALI graphics card in a PC with a grown-up Intel or AMD Ryzen CPU - hence "crazy", but maybe not. I know there will be some sort of ARM-CPU based WIN 10 laptops, but those target the market currently served by Celeron etc.

Alurian - Friday, June 1, 2018 - link

Arguably MALI might one day be powerful to do interesting things with should ARM choose to take that direction. But comparing MALI to the dedicated graphics units that AMD and NVIDIA have been working with for decades...certainly not in the short term. If it was that easy Intel would have popped out a competitor chip by now.

Valantar - Friday, June 1, 2018 - link

I'd say it depends on your use case. For desktop usage and multimedia, it'd probably be decent, although it would use significantly more power than any iGPU simply due to being a PCIe device.

On the other hand, for 3D and gaming, drivers are key (and far more complex), and ARM would here have to play catch-up with a decade or more of driver development from their competitors. It would not go well.

duploxxx - Friday, June 1, 2018 - link

like many users, I have gotton frustrated with and intel seems to have thrown in the towel....how does that sound you think?..........

easy solution buy a ryzen apu. more then enough cpu power to run win10 and decent gpu and if you think the intel cpu are better then ******

eastcoast_pete - Friday, June 1, 2018 - link

Have you tried to buy a graphics card recently? I actually like the Ryzen chips, but once you add the (required) dedicated graphics card, it gets expensive fast. There is a place for a good, cheap graphics solution that still beats Intel embedded but doesn't break the bank. Think HTC setups. My comment on Intel having thrown in the towel refers to them now using AMD dedicated graphics fused to their CPUs in recent months; they have clearly abandoned the idea of increasing the performance of their own designs (Iris much?) , and that is bad for the competitive situation in the lower end graphics space.

jimjamjamie - Friday, June 1, 2018 - link

Ryzen 3 2200G

Ryzen 5 2400G

Thank me later.

dromoxen - Sunday, June 3, 2018 - link

2200ge

2400ge

Intel has far from given up gfx , in fact they are plunging into it with both feet? They are demonstrating their own discrete card and hope for availability sometime in 2019. the amd powered hades is just a stop gap, maybe even a little technology demonstrator if you will. The promise of APU accelerated processing is finally arriving, most especially for AI apps.

Ryan Smith - Friday, June 1, 2018 - link

Truthfully I don't have a good answer for you. A Mali-G76MP20 works out to 480 ALUs (8*3*20), which isn't a lot by PC standards. However ALUs alone aren't everything, as we can clearly see comparing NVIDIA to AMD, AMD to Intel, etc.

At a high level, high core count Malis are meant to offer laptop-class performance. So I'd expect them to be performance competitive with an Intel GT2 configuration, if not ahead of them in some cases. (Note that Mali is only for iGPUs as part of an SoC; it's lacking a bunch of important bits necessary to be used discretely)

At least if Arm gets their way, then perhaps one day we'll get to see this. With Windows-on-ARM, there's no reason you couldn't eventually build an A76+G76 SoC for a Windows machine.

eastcoast_pete - Friday, June 1, 2018 - link

Thanks Ryan! I wouldn't expect MALI graphics in a PC to challenge the high end of dedicated graphics, but if they come close to an NVIDIA 1030 card but significantly cheaper, I would be game to try. That being said, I realize that going from an SOC to a actual stand-alone part would require some heavy lifting. But then, there is an untapped market waiting to be served. Lastly, this must have occurred to the people at ARM graphics (MALI team) , and I wonder if any of them has ever speculated on how their newest&hottest would stack up against GT2, or entry-level NVIDIA and AMD solutions. Any off-the-record remarks?