Sun’s T2000 “Coolthreads” Server: First Impressions and Experiences

by Johan De Gelas on March 24, 2006 12:05 AM EST. Posted in IT Computing

Introducing the T2000 server

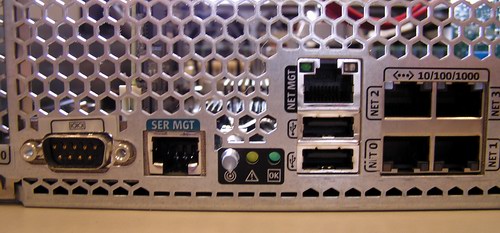

The Sun Fire T2000 is more than just a server with the out-of-the-ordinary UltraSPARC T1 CPU. Its 16 DIMM slots support up to 32 GB of DDR2-533 memory. Internally, there is room for four 73 GB, 2.5" SAS disks. Don't confuse these server-grade disks with your average 2.5" notebook disks: these are fast 10,000 RPM Serial Attached SCSI (SAS) hard disk drives. A SAS controller also accepts SATA disks, but you will probably be limited to 7200 RPM drives.

The T2000 in practice

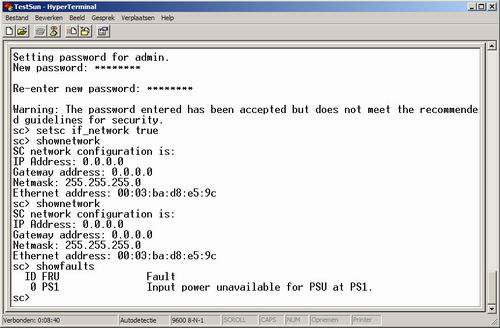

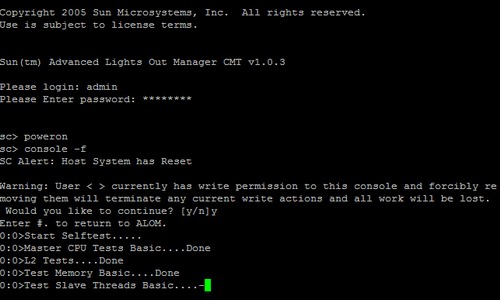

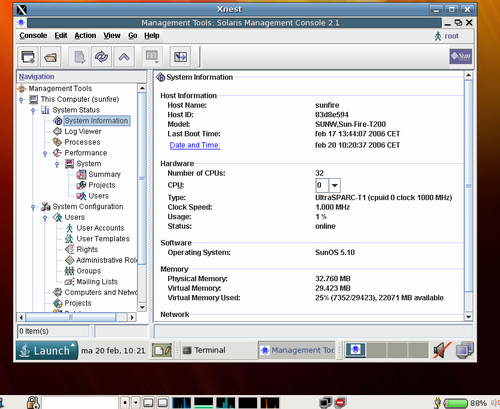

The T2000 is a headless server. To get it up and running, you first access the serial management port with HyperTerminal or a similar tool. The necessary RJ-45 serial cable was included with our server.

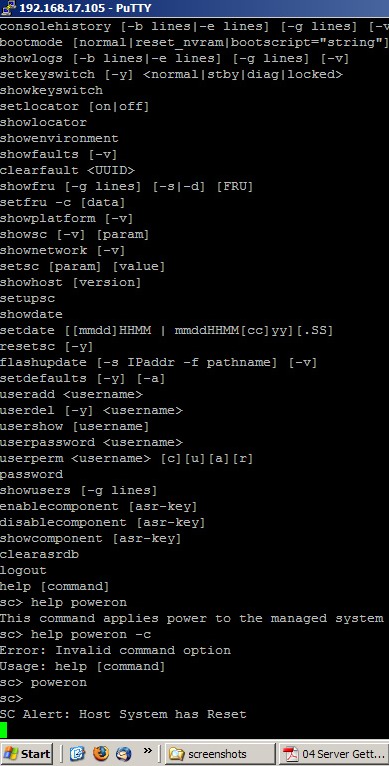

The console software offers you plenty of different commands to administer the T2000 server.

RAS

Traditionally, Sun systems have been known for excellent RAS capabilities. Besides the obligatory redundant hot-swappable fans, the T2000 also incorporates dual redundant hot-swappable power supplies.

Sun also claims that the T1 CPU excels in RAS. See below for a comparison between the RAS capabilities of the current Xeon and the UltraSPARC T1 (source: Sun).

In fact, Intel's current production Xeon, Paxville, which is found in servers priced similarly to the T2000, does not support parity checking on the L1 I-cache tag. Intel also pointed out that the upcoming Woodcrest CPU will have improved RAS features.

So, it seems that right now, the UltraSPARC T1 outshines its x86 competitors.

The T2000 also features Chipkill memory protection, which complements standard ECC. According to Sun, this provides twice the level of reliability of standard ECC. Chipkill detects failed DRAM, after which DRAM sparing reconfigures a DRAM channel to map out the failed DIMM.

Each of the UltraSPARC T1's four memory controllers implements a background error scanner/scrubber to reduce multi-nibble errors. The frequency of error scanning is programmable.

When it comes to RAS, the T2000, and especially the cheaper model with the 1 GHz CPU, has no equal at its price point in the server market.
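To make the parity checking mentioned in the comparison above more concrete, here is a minimal sketch in Python of how a single parity bit catches a flipped bit in a stored word. This is only an illustration of the principle: the function names and the 8-bit example tag are our own assumptions, and real cache hardware implements this in logic, not software.

```python
def even_parity_bit(word: int) -> int:
    """Return 1 if the word has an odd number of set bits, else 0."""
    return bin(word).count("1") % 2


def store_with_parity(word: int) -> tuple[int, int]:
    """Pair a word with its parity bit, as a protected array would store it."""
    return word, even_parity_bit(word)


def parity_ok(word: int, parity: int) -> bool:
    """Recompute parity on read and compare it with the stored bit."""
    return even_parity_bit(word) == parity


# Hypothetical 8-bit tag value, chosen only for illustration.
tag, parity = store_with_parity(0b10110010)

single_flip = tag ^ (1 << 3)   # one bit corrupted
double_flip = tag ^ 0b11       # two bits corrupted

print(parity_ok(tag, parity))          # intact word passes the check
print(parity_ok(single_flip, parity))  # single-bit error is detected
print(parity_ok(double_flip, parity))  # double-bit error slips through
```

Parity only detects an odd number of flipped bits and cannot repair them, which is why ECC and Chipkill are layered on top for main memory.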

26 Comments

phantasm - Wednesday, April 5, 2006

While I appreciate the review, especially the performance benchmarks between Solaris and Linux on like hardware, I can't help but feel this article falls short in terms of an enterprise class server review which, undoubtedly, a lot of enterprise class folks will be looking for.

* Given the enterprise characteristics of the T2000, I would have liked to see a comparison against an HP DL385 and IBM x366.

* The performance testing should have been done with the standard Opteron processors (versus the HE). The HP DL385 using non-HE processors has nearly the same power and thermal characteristics as the T2000. The DL385 is a 4A 1615 BTU system whereas the T2000 is a 4A 1365 BTU system.

* The T2000 is deficient in several design areas. It has a tool-less case lid that is easily removable. However, our experience has been that it opens too easily and, given the 'embedded kill switch', it immediately shuts off without warning. Closing the case requires slamming the lid shut several times.

* The T2000 only supports *half height* PCI-E/X cards. This is an issue with using 3rd party cards.

* The Solaris installation has a nifty power savings feature enabled by default. However, rather than throttling CPU speed or fans, it simply shuts down to the OK prompt after 30 minutes of a 'threshold' not being met. Luckily this 'feature' can be disabled through the OS.

* Power button -- I ask any T2000 owner to show me one that doesn't have a blue or black mark from a ball point pen on their power button. Sun really needs to make a more usable power button on these systems.

* Disk drives -- The disk drives are not labeled with FRU numbers or any indication of size and speed.

* Installing and configuring Solaris on a T2000 takes roughly 10 times longer than installing Linux on an x86 system. Most commonly, this is initially done through HyperTerminal access to the remote console. (Painful) Luckily subsequent builds can be done through a jumpstart server.

* HW RAID Configuration -- This can only be done through the Solaris OS commands.
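
For readers who think in watts, the thermal figures quoted in the list above can be converted with a short calculation. This is a rough sketch that assumes the quoted numbers are BTU per hour, which the comment does not state explicitly; the function name is ours.

```python
# 1 watt of dissipated power corresponds to about 3.412142 BTU per hour.
BTU_PER_HOUR_PER_WATT = 3.412142


def btu_per_hour_to_watts(btu_per_hour: float) -> float:
    """Convert a heat output in BTU/h to watts."""
    return btu_per_hour / BTU_PER_HOUR_PER_WATT


# Figures quoted in the comment, assumed to be BTU/h.
dl385_watts = btu_per_hour_to_watts(1615)
t2000_watts = btu_per_hour_to_watts(1365)

print(f"DL385: {dl385_watts:.0f} W")
print(f"T2000: {t2000_watts:.0f} W")
print(f"Difference: {dl385_watts - t2000_watts:.0f} W")
```

Under that assumption, the two systems are roughly 470 W and 400 W respectively.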

I hope Anandtech takes up the former call to begin enterprise class server reviews.

JohanAnandtech - Thursday, April 6, 2006

DL385 will be in our next test.

All other issues you addressed will definitely be checked and tested.

That it falls short of a full review is clearly indicated by "first impressions" and it has been made clear several times in the article. Just give us a bit more time to get the issues out of our benchmarks. We had to move all our typical Linux x86 benchmarks to Solaris and the T1 and keep it fair to Sun. This meant that we had to invest massive amounts of time in migrating databases and applications and tuning them.

davem330 - Friday, March 24, 2006

You aren't seeing the same kind of performance that Sun is claiming regarding Spec Web2005 because Sun specifically chose workloads that make heavy use of SSL.

Niagara has on-chip SSL acceleration, using a per-core modular arithmetic unit.

BTW, would be nice to get a Linux review on the T2000 :-)

blackbrrd - Saturday, March 25, 2006

Good point about the SSL.

I can see both SSL and gzip being used quite often, so please include SSL in the benchmarks.

As mentioned in the article 1-2% of FP operations affect the server quite badly, so I would say that getting one FPU per core would make the cpu a lot better, looking forward to seeing results from the next generation.

.. but then again, both Intel and AMD will probably have launched quad cores by then...

Anyway, it's interesting seeing a third contender :)

yonzie - Friday, March 24, 2006

Nice review, a few comments though:

I think that should have been , although you might mean dual channel ECC memory, but if that's the case it's a strange way to write it IMHO.

No mention of the Pentium M on page 4, but it shows up in benchmarks on page 5 but not further on... Would have been interesting :-(

And the second scenario is what exactly? ;-) (yeah, I know it's written a few paragraphs later, but...)

Oh, and more pretty pictures pls ^_^

sitheris - Friday, March 24, 2006

Why not benchmark it on a more intensive application like Oracle 10g?

JohanAnandtech - Friday, March 24, 2006

We are still tuning and making sure our results are 100% accurate. Sounds easy, but it is incredibly complex.

But they are coming.

Anyway, no Oracle, we have no support from them so far.

JCheng - Friday, March 24, 2006

By using a cache file you are all but taking MySQL and PHP out of the equation. The vast majority of requests will be filled by simply including the cached content. Can we get another set of results with the caching turned off?

ormandj - Friday, March 24, 2006

I would agree. Not only that, but I sure would like to know what the disk configuration was. Especially reading from a static file, this makes a big difference. Turn off caching and see how it does, that should be interesting!

Disk configurations please! :)

kamper - Friday, March 31, 2006

No kidding. I thought that php script was pretty dumb. Once a minute you'll get a complete anomaly as a whole load of concurrent requests all detect an out of date file, recalculate it and then try to dump their results at the same time.

How much time was spent testing each request rate and did you try to make sure each run came across the anomaly in the same way, the same number of times?
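
The anomaly described in the comment above (many concurrent requests all noticing an expired cache and regenerating it at once) is commonly avoided by letting only one request rebuild the entry while the others wait. The following is a minimal Python sketch of that idea, not the PHP script used in the article; the class name `SingleFlightCache`, the in-memory storage, and the 60-second lifetime are assumptions made purely for illustration.

```python
import threading
import time


class SingleFlightCache:
    """Time-based cache in which only one thread regenerates an expired entry."""

    def __init__(self, ttl_seconds, regenerate):
        self._ttl = ttl_seconds
        self._regenerate = regenerate          # expensive function, e.g. a DB query
        self._lock = threading.Lock()
        self._value = None
        self._expires_at = float("-inf")       # forces regeneration on first access

    def get(self):
        # Fast path: the entry is still fresh, so no lock is taken.
        if time.monotonic() < self._expires_at:
            return self._value
        with self._lock:
            # Re-check under the lock: another thread may have refreshed
            # the entry while this one was waiting.
            if time.monotonic() >= self._expires_at:
                self._value = self._regenerate()
                self._expires_at = time.monotonic() + self._ttl
            return self._value


# Usage: 20 concurrent "requests" hit a cold cache at the same moment.
regenerations = []


def build_page():
    regenerations.append(1)   # count how often the expensive work runs
    time.sleep(0.05)          # stand-in for the database and rendering work
    return "rendered page"


cache = SingleFlightCache(ttl_seconds=60, regenerate=build_page)
workers = [threading.Thread(target=cache.get) for _ in range(20)]
for worker in workers:
    worker.start()
for worker in workers:
    worker.join()

print(len(regenerations))
```

With the lock and the second freshness check in place, the expensive function runs once per expiry instead of once per waiting request, which should make benchmark runs less sensitive to when the cache happens to expire.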