SandForce Announces Next-Gen SSDs, SF-2000 Capable of 500MB/s and 60K IOPS

by Anand Lal Shimpi on October 7, 2010 9:30 AM EST

NAND Support: Everything

The SF-2000 controllers are NAND manufacturer agnostic. Both ONFI 2 and Toggle interfaces are supported. Let’s talk about what this means.

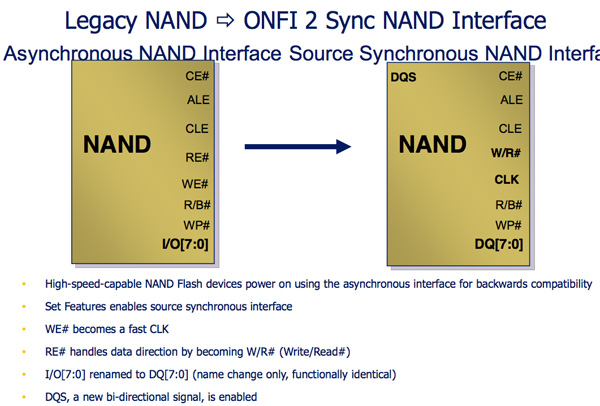

Legacy NAND is written in a very straightforward manner. A write enable (WE) signal is sent to the NAND; once the WE signal is high, data can latch to the NAND.

Both ONFI 2 and Toggle NAND add another signal to the NAND interface: DQS. The Write Enable signal is still present, but it’s now only used for latching commands and addresses; DQS is used for data transfers. Instead of only transferring data when the DQS signal is high, ONFI 2 and Toggle NAND support transferring data on both the rising and falling edges of the DQS signal. This should sound a lot like DDR to you, because it is.

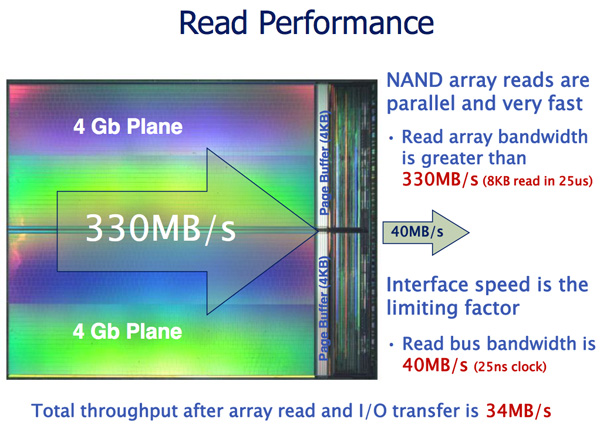

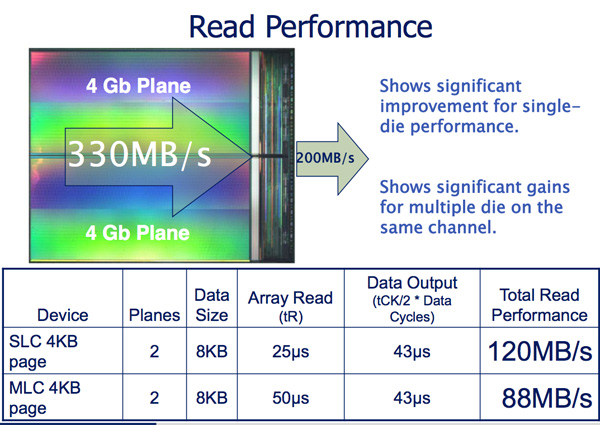
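To make the DDR comparison concrete, here is a minimal Python sketch that counts data transfers over a sampled strobe waveform. It is an illustration only: the function name `count_transfers`, the sample list, and the simplification that a legacy interface latches once per cycle (modeled here as the rising edge) are assumptions, not details taken from SandForce or the ONFI/Toggle specifications.

```python
def count_transfers(strobe_samples, both_edges):
    """Count data transfers over a sampled strobe signal (hypothetical model).

    both_edges=False models one transfer per cycle (rising edge only);
    both_edges=True models DDR-style transfers on rising and falling edges.
    """
    transfers = 0
    for prev, cur in zip(strobe_samples, strobe_samples[1:]):
        rising = prev == 0 and cur == 1
        falling = prev == 1 and cur == 0
        if rising or (both_edges and falling):
            transfers += 1
    return transfers


# Four full strobe cycles, sampled once per half-cycle (made-up waveform).
strobe = [0, 1, 0, 1, 0, 1, 0, 1, 0]

single_rate = count_transfers(strobe, both_edges=False)
double_rate = count_transfers(strobe, both_edges=True)
print(single_rate, double_rate)
```

With the same strobe frequency, the both-edges case moves twice as many data words, which is the whole point of the DDR-style signaling.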

The benefit is tremendous. Whereas the current interface to NAND is limited to 40MB/s per device, ONFI 2 and Toggle increase that to 166MB/s per device.
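As a rough back-of-the-envelope check, the sketch below works out the speed-up implied by those two figures and how many NAND interfaces would have to run flat out to reach the 500MB/s headline number. The helper names are hypothetical, and the calculation assumes perfect scaling with no controller, protocol, or host interface overhead, so treat the results as lower bounds rather than a description of the SF-2000's actual design.

```python
import math

LEGACY_MBPS = 40     # per-device limit of the current interface (from the article)
DDR_MBPS = 166       # per-device limit with ONFI 2 / Toggle (from the article)
TARGET_MBPS = 500    # headline SF-2000 throughput (from the article title)


def speedup(old_mbps, new_mbps):
    """Ratio of new to old per-device interface bandwidth."""
    return new_mbps / old_mbps


def interfaces_needed(target_mbps, per_device_mbps):
    """Minimum number of devices transferring at full speed to hit the target,
    assuming ideal scaling (an assumption, not a measured figure)."""
    return math.ceil(target_mbps / per_device_mbps)


print(f"speed-up: {speedup(LEGACY_MBPS, DDR_MBPS):.2f}x")
print("legacy devices needed:", interfaces_needed(TARGET_MBPS, LEGACY_MBPS))
print("ONFI 2/Toggle devices needed:", interfaces_needed(TARGET_MBPS, DDR_MBPS))
```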

Micron indicates that a dual plane NAND array can be read from at up to 330MB/s and written to at 33MB/s. By implementing an ONFI 2 and Toggle compliant interface, SandForce immediately gets a huge boost in potential performance. Now it’s just a matter of managing it all.

The controller accommodates the faster NAND interface by supporting more active NAND die concurrently. The SF-2000 controllers can activate twice as many die in parallel compared to the SF-1200/1500. This should result in some pretty hefty performance gains as you’ll soon see. The controller is physically faster and has more internal memory/larger buffers in order to enable the higher expected bandwidths.
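To see why die-level parallelism matters so much, here is a hedged sketch using Micron's dual plane figures quoted above. It estimates how many die would need to be busy at once to sustain 500MB/s, assuming ideal scaling and ignoring controller overhead and any data reduction done by the controller. The function name is made up for illustration, and the numbers say nothing about how many die the SF-2000 actually keeps active.

```python
import math

TARGET_MBPS = 500      # headline throughput from the article title
DIE_READ_MBPS = 330    # Micron dual plane read figure quoted in the article
DIE_WRITE_MBPS = 33    # Micron dual plane write figure quoted in the article


def die_needed(target_mbps, per_die_mbps):
    """Minimum number of concurrently active die to reach the target rate,
    assuming throughput adds up perfectly across die (an idealization)."""
    return math.ceil(target_mbps / per_die_mbps)


print("die needed for reads: ", die_needed(TARGET_MBPS, DIE_READ_MBPS))
print("die needed for writes:", die_needed(TARGET_MBPS, DIE_WRITE_MBPS))
```

Under these assumptions writes, not reads, are what force the controller to keep many die active at the same time.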

Initial designs will be based on 34nm eMLC from Micron and 32nm eMLC from Toshiba. The controller does support 25nm NAND as well, so we’ll see a transition there when the yields are high enough. Note that SandForce, like Intel, will be using enterprise grade MLC instead of SLC for the server market. The demand pretty much requires it and luckily, with a good enough controller and NAND, eMLC looks like it may be able to handle a server workload.

84 Comments

karndog - Thursday, October 7, 2010 - link

Put two of these babies in RAID0 for 1GB/s reads AND writes. Very nice IF it lives up to expectations!

Silenus - Thursday, October 7, 2010 - link

Indeed. We will have to wait and see. Hopefully the numbers are not too optimistic. Hopefully there are not too many firmware pains. Still...it's an exciting time for SSD development. Beginning of next year is when I will be ready to buy an SSD for my desktop (have one in my laptop already). Should be nice new choices by then!

Nihility - Thursday, October 7, 2010 - link

It'll be 1 GB/s only on non-compressed / non-random data.

Still, very cool.

mailman65er - Thursday, October 7, 2010 - link

better yet, put that behind Nvelo's "Dataplex" software, and use it as a cache for your disk(s). Seems like a waste to use it as a storage drive, most bits sitting idle most of the time...

vol7ron - Thursday, October 7, 2010 - link

"most bits sitting idle most of the time..."

Thus, the extenuation life.

mailman65er - Thursday, October 7, 2010 - link

"Thus, the extenuation life."

Well yes, you could get infinite life out of it (or any other SSD) if you never actually used it...

The point is that if you are going to spend the $$'s for the SSD that uses this controller (I assume both NAND and controller will be spendy), then you want to actually "use" it, and get the max efficiency out of it. Using it as a storage drive means that most bits are sitting idle; using it as a cache drive keeps it working more. Get that Ferrari out of the barn and drive it!

mindless1 - Tuesday, October 19, 2010 - link

Actually no, the last thing you want to use an MLC flash SSD for is mere constant-write caching.

Havor - Friday, October 8, 2010 - link

I really don't get the obsession with RAID, especially RAID 0.

It's the IOPS that count for how fast your PC boots or starts programs, and with 60K IOPS I think you're covered.

Putting these drives in RAID 0 could actually slow them down for some data patterns: because data is divided over two drives, both parts have to arrive at the same time, or one of the drives has to wait for the other to catch up.

Yes, you will see a huge boost in sequential reads/writes, but with small random data the benefit would be negative, and the overall benefit would be around 5% at most. The downside would be the higher risk of data loss if one of the drives breaks down.

mindless1 - Tuesday, October 19, 2010 - link

No it isn't. Typical PC boot and app loading is linear in nature, it's only benchmarks that try to do several things (IOPS) simultaneously, very limited apps or servers which need IOPS significantly more than random read/write performance.

You are also incorrect about slowing them down waiting because if not the drives' DRAM cache, there is the system main memory cache, and on some raid controllers (mid to higher end discrete cards) there is even the *3rd* level of controller cache on the card.

Overall benefit 5%? LOL, if you are going to make up numbers at least try harder to get close or, get ready for it, actually try it, as in actually RAIDing two and then running benchmarks representative of typical PC usage.

Overall the benefit will depend highly on task, or to put it another way you probably don't need to speed up things that are already reasonably quick, rather to focus on the slowest or most demanding tasks on that "PC".

Golgatha - Thursday, October 7, 2010 - link

DO WANT!!!