The AMD FreeSync Review

by Jarred Walton on March 19, 2015 12:00 PM EST

FreeSync Features

In many ways FreeSync and G-SYNC are comparable. Both refresh the display as soon as a new frame is available, at least within their normal range of refresh rates. There are differences in how this is accomplished, however.

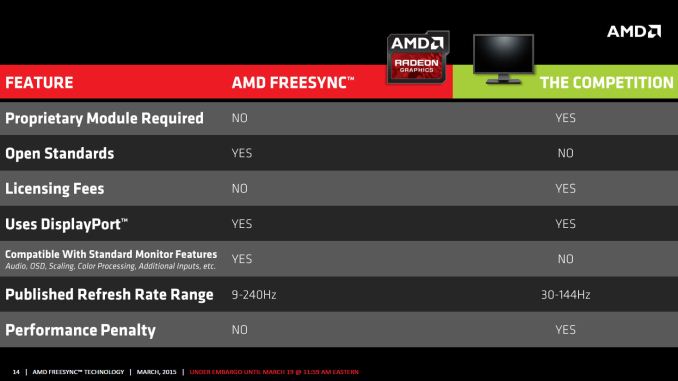

G-SYNC uses a proprietary module that replaces the normal scaler hardware in a display. Besides cost factors, this means that any company looking to make a G-SYNC display has to buy that module from NVIDIA. Of course, the reason NVIDIA went with a proprietary module is that Adaptive Sync didn’t exist when they started working on G-SYNC, so they had to create their own protocol. Basically, the G-SYNC module controls all the regular core features of the display like the OSD, but it’s not as full featured as a “normal” scaler.

In contrast, as part of the DisplayPort 1.2a standard, Adaptive Sync (which is what AMD uses to enable FreeSync) will likely become part of many future displays. The major scaler companies (Realtek, Novatek, and MStar) have all announced support for Adaptive Sync, and it appears most of the changes required to support the standard could be accomplished via firmware updates. That means even if a display vendor doesn’t have a vested interest in making a FreeSync branded display, we could see future displays that still work with FreeSync.

Having FreeSync integrated into most scalers has other benefits as well. All the normal OSD controls are available, and the displays can support multiple inputs – though FreeSync of course requires the use of DisplayPort, as Adaptive Sync doesn’t work with DVI, HDMI, or VGA (DSUB). AMD mentions in one of their slides that G-SYNC also lacks support for audio input over DisplayPort, and there’s mention of color processing as well, though this is somewhat misleading. NVIDIA's G-SYNC module supports color LUTs (Look Up Tables), but it doesn't support multiple color options like the "Warm, Cool, Movie, User, etc." modes that many displays have; NVIDIA states that the focus is on properly producing sRGB content, and so far the G-SYNC displays we've looked at have done quite well in this regard. We’ll look at the “Performance Penalty” aspect as well on the next page.

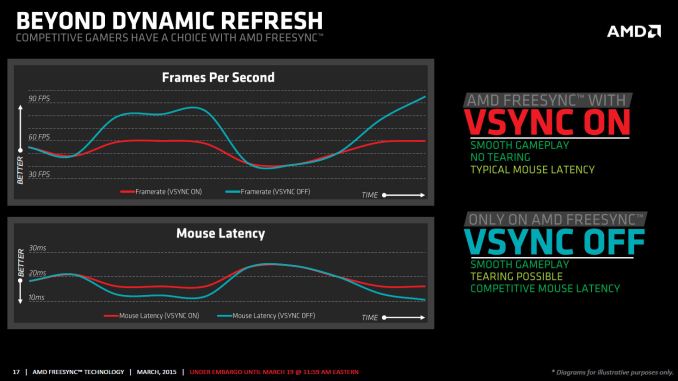

One other feature that differentiates FreeSync from G-SYNC is how things are handled when the frame rate is outside of the dynamic refresh range. With G-SYNC enabled, the system will behave as though VSYNC is enabled when frame rates are either above or below the dynamic range; NVIDIA's goal was to have no tearing, ever. That means if you drop below 30FPS, you can get the stutter associated with VSYNC, while going above 60Hz/144Hz (depending on the display) is not possible – the frame rate is capped. Admittedly, neither situation is a huge problem, but AMD provides an alternative with FreeSync.

Instead of always behaving as though VSYNC is on, FreeSync can revert to either VSYNC off or VSYNC on behavior if your frame rates are too high/low. With VSYNC off, you could still get image tearing but at higher frame rates there would be a reduction in input latency. Again, this isn't necessarily a big flaw with G-SYNC – and I’d assume NVIDIA could probably rework the drivers to change the behavior if needed – but having choice is never a bad thing.
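To make the difference concrete, here is a minimal Python sketch of the out-of-range behavior described above. It is only an illustrative model, not driver code: the names `Behavior` and `sync_behavior`, the `vsync_when_out_of_range` flag, and the default 30Hz to 144Hz range are assumptions made for the example, and the actual dynamic range varies by display.

```python
from enum import Enum


class Behavior(Enum):
    VARIABLE_REFRESH = "refresh follows the frame rate"
    VSYNC_ON = "behaves as though VSYNC is on (no tearing, possible stutter or cap)"
    VSYNC_OFF = "behaves as though VSYNC is off (possible tearing, lower latency)"


def sync_behavior(fps, technology, min_hz=30, max_hz=144,
                  vsync_when_out_of_range=True):
    """Model how the display behaves for a given frame rate.

    min_hz and max_hz are hypothetical bounds of the dynamic refresh range.
    vsync_when_out_of_range stands in for the user's choice under FreeSync;
    it is ignored for G-SYNC, which always acts like VSYNC outside the range.
    """
    if min_hz <= fps <= max_hz:
        # Inside the dynamic range, both technologies refresh on demand.
        return Behavior.VARIABLE_REFRESH
    if technology == "gsync":
        # Outside the range, G-SYNC behaves as though VSYNC is enabled.
        return Behavior.VSYNC_ON
    if technology == "freesync":
        # Outside the range, FreeSync can fall back to either behavior.
        if vsync_when_out_of_range:
            return Behavior.VSYNC_ON
        return Behavior.VSYNC_OFF
    raise ValueError(f"unknown technology: {technology!r}")
```

For instance, under these assumptions `sync_behavior(200, "gsync")` yields `Behavior.VSYNC_ON`, while `sync_behavior(200, "freesync", vsync_when_out_of_range=False)` yields `Behavior.VSYNC_OFF`, which is the case where tearing can return in exchange for lower input latency.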

There’s another aspect to consider with FreeSync that might be interesting: as an open standard, it could potentially find its way into notebooks sooner than G-SYNC. We have yet to see any shipping G-SYNC enabled laptops, and it’s unlikely most notebook manufacturers would be willing to pay $200 or even $100 extra to get a G-SYNC module into a notebook; there's also the question of power requirements. Then again, earlier this year there was an inadvertent leak of some alpha drivers that allowed G-SYNC to function on the ASUS G751j notebook without a G-SYNC module, so it’s clear NVIDIA is investigating other options.

While NVIDIA may do G-SYNC without a module for notebooks, there are still other questions. With many notebooks using a form of dynamic switchable graphics (Optimus and Enduro), support for Adaptive Sync by the Intel processor graphics could certainly help. NVIDIA might work with Intel to make G-SYNC work (though it’s worth pointing out that the ASUS G751 doesn’t support Optimus so it’s not a problem with that notebook), and AMD might be able to convince Intel to adopt DP Adaptive Sync, but to date neither has happened. There’s no clear direction yet but there’s definitely a market for adaptive refresh in laptops, as many are unable to reach 60+ FPS at high quality settings.

350 Comments

chizow - Saturday, March 21, 2015 - link

You seem to be taking my comments pretty seriously Jarred, as you should, given they draw a lot of questions to your credibility and capabilities in writing a competent "review" of the technology being discussed. But it's np, no one needs to take me seriously, this isn't my job, unlike yours even if it is part time. The downside is, reviews like this make it harder for anyone to take you or the content on this site seriously, because as you can see, there are a number of other individuals that have taken issue with your Engadget-like review. I am sure there are a number of people that will take this review as gospel, go out and buy FreeSync panels, discover ghosting issues not covered in this "review" and ultimately, lose trust in what this site represents. Not that you seem to care.

As for being limited in equipment, that's just another poor excuse and asterisk you've added to the footnotes here. It takes a max $300 camera, far less than a single performance graphics card, and maybe $50 in LED, diodes and USB input doublers (hell you can even make your own if you know how to splice wires) at Digikey or RadioShack to test this. Surely, Ryan and your new parent company could foot this bill for a new test methodology if there was actually interest in conducting a serious review of the technology. Numerous sites have already given the methodology for input lag and ghosting with a FAR smaller budget than AnandTech, all you would have to do is mimic their test set-up with a short acknowledgment which I am sure they would appreciate from the mighty AnandTech.

But it's OK, like the FCAT issue it's obvious AT had no intention of actually covering the problems with FreeSync. I guess if it takes a couple of Nvidia "zealots" to get to the bottom of it and draw attention to AMD's problems to ultimately force them to improve their products, so be it. It's obvious the actual AMD fans and spoon-fed press aren't willing to tackle them.

As for blanket-statements lol, that's a good one. I guess we should just take your unsubstantiated points of view, which are unsurprisingly, perfectly aligned with AMD's, at face value without any amount of critical thinking and skepticism?

It's frankly embarrassing to read some of the points you've made from someone who actually works in this industry, for example:

1) One shred of confirmation that G-Sync carries royalties. Your "semantics" mean nothing here.

2) One shred of confirmation that existing, pre-2015 panels can be made compatible with a firmware upgrade.

3) One shred of confirmation that G-Sync somehow faces the uphill battle compared to FreeSync, given known market indicators and factual limitations on FreeSync graphics card support.

All points you have made in an effort to show FreeSync in a better light, while downplaying G-Sync.

As for the last bit, again, if you have to sacrifice your gaming quality in an attempt to meet FreeSync's minimum standard refresh rate, the solution has already failed, given one of the major benefits of VRR is the ability to crank up settings without having to resort to Vsync On and the input lag associated with it. For example, in your example, if you have to drop settings from Very High to High just so that your FPS don't drop below 45FPS for 10% of the time, you've already had to sacrifice your image quality for the other 90% it stays above that. That is a failure of a solution if the alternative is to just repeat frames for that 10% as needed. But hey, to each their own, this kind of testing and information would be SUPER informative in an actual comprehensive review.

As for your own viewpoints on competition, who cares!?!?!? You're going to color your review and outlook in an attempt to paint FreeSync in a more favorable light, simply because it aligns with your own viewpoints on competition? Thank you for confirming your reasoning for posting such a biased and superficial review. You think this is going to matter to someone who is trying to make an informed decision, TODAY, on which technology to choose? Again, if you want to get into the socioeconomic benefits of competition and why we need AMD to survive, post this as an editorial, but to put "Review" in the title is a disservice to your readers and the history of this website, hell, even your own previous work.

steve4king - Monday, March 23, 2015 - link

Thanks Jarred. I really appreciate your work on this. However, I do disagree to some extent on the low-end FPS issue. The biggest potential benefit to Adaptive Refresh is smoothing out the tearing and judder that happens when the frame rate is inconsistent and drops. I also would not play at settings where my average frame-rate fell below 60fps. However, my settings will take into account the average FPS, where most scenes may be glassy-smooth, while in a specific area the frame-rate may drop substantially. That's where I really need adaptive-sync to shine. And from most reports, that's where G-Sync does shine. I expect low end flicker could be solved with a doubling of frames, and understand you cannot completely solve judder if the frame-rate is too low.

tsk2k - Friday, March 20, 2015 - link

Thanks for your reply Jarred. I was just throwing a tantrum cause I wanted a more in-depth article.

5150Joker - Friday, March 20, 2015 - link

I own a G-Sync ASUS ROG PG278Q display and while it's fantastic, I'd prefer NVIDIA just give up on G-Sync and go with the flow and adopt ASync/FreeSync. It's clearly working as well (which was my biggest hesitation) so there's no reason to continue forcing users to pay a premium on proprietary technology that more and more display manufacturers will not support. If LG or Samsung push out a 34" widescreen display that is AHVA/IPS with low response time and 144 Hz support, I'll probably sell my ROG Swift and switch, even if it is a FreeSync display. Like Jarred said in his article, you don't notice tearing with a 144 Hz display so G-Sync/FreeSync make little to no impact.

chizow - Friday, March 20, 2015 - link

And what if going to Adaptive Sync results in a worse experience? Personally I have no problems if Nvidia uses an inferior Adaptive Sync based solution, but I would still certainly want them to continue developing and investing in G-Sync, as I know for a fact I would not be happy with what FreeSync has shown today.

wira123 - Friday, March 20, 2015 - link

Inferior / worse experience? Meanwhile, the reviews from anandtech, guru3d, hothardware, overclock3d, hexus, techspot, hardwareheaven, and the list could go on forever. They clearly stated that the experiences with both freesync & g-sync are equal / comparable, but freesync costs less as an added bonus.

Before you accuse me of being an AMD fanboy, I own an intel haswell cpu & zotac GTX 750 (should I take pics as proof?).

Based on reviews from numerous tech sites, my conclusion is: either g-sync will end up just like betamax, or nvidia will be forced to adopt adaptive sync.

chizow - Friday, March 20, 2015 - link

These tests were done in some kind of limited/closed test environment apparently, so yes all of these reviews are what I would consider incomplete and superficial. There are a few sites however that delve deeper and notice significant issues. I have already posted links to them in the comments, if you made it this far to comment you would've come upon them already and either chose to ignore them or missed them. Feel free to look them over and come to your own conclusions, but it is obvious to me, the high floor refresh and the ghosting make FreeSync worse than G-Sync without a doubt.

wira123 - Friday, March 20, 2015 - link

Yeah, since the pcper gospel review was apparently made by Jesus. And the 95% of reviewers around the world who praised freesync are heretics, isn't that right?

V-sync can be turned off or on as you wish if the fps goes outside the monitor's refresh range, unlike G-sync. And none have reported a ghosting effect SO FAR, are you daydreaming or what?

Still waiting for tomshardware review, even though i already know what their verdict will be

chizow - Saturday, March 21, 2015 - link

Who needs god when you have actual screenshots and video of the problems? This is tech we are talking about, not religion, but I am sure to AMD fans they are one and the same.

Crunchy005 - Saturday, March 21, 2015 - link

@chizow anything that doesn't say Nvidia is God is incomplete and superficial to you. You are putting down a lot of hard work put into a lot of reviews for one review that pointed out a minor issue. Show us more than one and maybe we will look at it, but your paper gospel means nothing when there are a ton more articles that contradict it. Also what makes you the expert here when all these reviews say it is the same/comparable and you yourself have not seen freesync in person. If you think you can do a better job start a blog and show us. Otherwise stop with your anti AMD pro Nvidia campaign and get off your high horse. In these comments you even attacked Jarred, who works hard in the short time that he gets hardware to give us as much relevant info as he can. You don't show respect to others' work here and you make blanket statements with nothing to support yourself.