Itanium - is there light at the end of the tunnel?

by Johan De Gelas on November 9, 2005 12:05 AM EST- Posted in

- CPUs

Introduction

On HP's website, these prophetic words are hidden, but can still be found:

As the Itanium market is still limited to HPC and (ultra) high-end servers, Microsoft is losing interest in the Itanium. IA 64 versions of Longhorn are low priority and only the future of the High Performance Computing version for Itanium seems certain. Visual Studio 2005 does not even support the Itanium platform. Dell and IBM are no longer interested. It is not going too well for Itanium.

A few years ago, analysts predicted doom for Sun; not completely without reason, as the Intel Itanium 2 and IBM Power 5 clearly wipe the floor performance-wise with the UltraSparc CPUs. However, Sun's revenge is very sweet. Sun's newest Galaxy servers with up to 16 Opteron cores are a very competitive platform for the expensive Itanium servers. The Galaxy servers are well suited for clustering, so even in the market niche that requires more than 16 CPUs, are the Itanium based machines threatened by a cheaper alternative?

Although the AMD Opteron targets a different market than the Intel Itanium, the Opteron market is expanding towards the high end, thanks to Sun, which in turn forces Intel to expand the feature set of the Xeon. Back in 2004 when EM64T was introduced, Intel pointed out that EM64T was only introduced on the Xeon DP. Intel probably expected the Opteron to be limited to workstations and entry level servers. However, the Opteron was very successful in the quad CPU market, and then it entered the 8-way and 16-way CPU market too. Intel had no choice then to counter attack and equip the Xeon MP with EMT64 and much higher clockspeeds than before the Opteron era, better RAS features and massive (for x86) L3 caches, up to 8MB big.

Is Itanium nothing more than over an ambitious project that resulted in a CPU of titanic proportions? In this article, we try to answer the question of whether or not the EPIC CPU has a bright future ahead. To answer that question, we'll focus on the technical advantages and disadvantages of the chip, and look ahead to see if the architecture can still grow enough to outpace the competition.

The End of a Generation

Indeed, you might ask yourself, why do we even bother writing articles about Itanium? It is, after all, a massive CPU that ends up in very expensive machines, mostly huge database servers and HPC machines for scientific purposes; machines that most of us will never consider buying, not even for business purposes.

Still, despite its rather dull reputation of a big iron CPU, and the flood of negative predictions, the EPIC has something fascinating. From a purely technical and academic point of view - completely ignoring the economical and business logic - there are some strong indications that time may well be on the side of the EPIC CPU despite all doom scenarios. That might sound insane right now, but allow me to explain this statement.

As we stated in the "The Quest for More Processing Power, Part One", the CPU performance increase that we enjoyed during the golden era of the PC from 1981 to 2002 has hit the brakes, and is decreasing quickly. Back in the nineties, Intel and others introduced techniques like superscalar wide issue, out of order execution with big reorder buffers, speculative execution, integrated L2-caches, register renaming and dynamic branch prediction, which all increased the number of instructions that could be processed per cycle (IPC) on average. The AMD Athlon, which was introduced in 1999, and the Thunderbird incarnation in 2000 could be considered as the last representatives of this superscalar generation. Macro ops fusion, introduced in the Athlon, where two operations are travelling down the pipeline together until they get separated to get executed, was one of the last major tricks of this generation.

Since then, only one improvement has really pushed performance per cycle forward: the on die memory controller (ODMC). Sure, there have been other "little tricks" that have steadily improved performance, but nothing spectacular. The CPU engineers still have a few tricks upon their sleeves that can improve IPC somewhat, but are limited to those that do not increase leakage and dynamic power loss. The focus is no longer on IPC or Instruction Level Parallelism (ILP). It is on Thread Level Parallelism (TLP).

A good example of how the engineering focus has shifted is branch prediction. Quite a bit of resources have been spent on the Pentium 4's branch predictor, involving a whole team of Intel engineers. The result was that, on average, the Pentium 4 branch predictor is accurate 95-97% of the time, while the P6 BPU was accurate only 90% of the time.

At Spring IDF 2005, when Anand, Derek and I asked Justin Rattner what Intel is doing in the field of even more advanced Branch prediction, he smiled. He told us that the current team who works on branch prediction is very small...around one person.

There is no doubt that the whole industry has shifted their focus away from ramping clock speed and improving ILP to increasing performance by exploiting TLP. So, how does this affect Itanium and its EPIC foundation? Before we answer that, let us quickly review the basics behind the Itanium/EPIC philosophy.

On HP's website, these prophetic words are hidden, but can still be found:

"EPIC is the old term for what is now known as the ItaniumTM processor family architecture, co-developed by HP and Intel®. This design philosophy will one day replace RISC and CISC. It is a gateway into the 64-bit future but it still remains completely 32-bit compatible."These sentences showed how bullish HP and Intel were a few years ago about their new creation. But in 2005, the reality is somewhat different:

"Dell will phase out its remaining computer based on Intel's Itanium microprocessor, in another sign of the waning interest in a chip that cost an estimated several billion dollars to develop." The Wall Street Journal, September 15th 2005.While it is hardly news that Dell, who doesn't believe in "big iron" anyway, is dropping Itanium, the rest of the sentences that the WSJ journalist wrote down seem to spell doom.

As the Itanium market is still limited to HPC and (ultra) high-end servers, Microsoft is losing interest in the Itanium. IA 64 versions of Longhorn are low priority and only the future of the High Performance Computing version for Itanium seems certain. Visual Studio 2005 does not even support the Itanium platform. Dell and IBM are no longer interested. It is not going too well for Itanium.

A few years ago, analysts predicted doom for Sun; not completely without reason, as the Intel Itanium 2 and IBM Power 5 clearly wipe the floor performance-wise with the UltraSparc CPUs. However, Sun's revenge is very sweet. Sun's newest Galaxy servers with up to 16 Opteron cores are a very competitive platform for the expensive Itanium servers. The Galaxy servers are well suited for clustering, so even in the market niche that requires more than 16 CPUs, are the Itanium based machines threatened by a cheaper alternative?

Although the AMD Opteron targets a different market than the Intel Itanium, the Opteron market is expanding towards the high end, thanks to Sun, which in turn forces Intel to expand the feature set of the Xeon. Back in 2004 when EM64T was introduced, Intel pointed out that EM64T was only introduced on the Xeon DP. Intel probably expected the Opteron to be limited to workstations and entry level servers. However, the Opteron was very successful in the quad CPU market, and then it entered the 8-way and 16-way CPU market too. Intel had no choice then to counter attack and equip the Xeon MP with EMT64 and much higher clockspeeds than before the Opteron era, better RAS features and massive (for x86) L3 caches, up to 8MB big.

Is Itanium nothing more than over an ambitious project that resulted in a CPU of titanic proportions? In this article, we try to answer the question of whether or not the EPIC CPU has a bright future ahead. To answer that question, we'll focus on the technical advantages and disadvantages of the chip, and look ahead to see if the architecture can still grow enough to outpace the competition.

The End of a Generation

Indeed, you might ask yourself, why do we even bother writing articles about Itanium? It is, after all, a massive CPU that ends up in very expensive machines, mostly huge database servers and HPC machines for scientific purposes; machines that most of us will never consider buying, not even for business purposes.

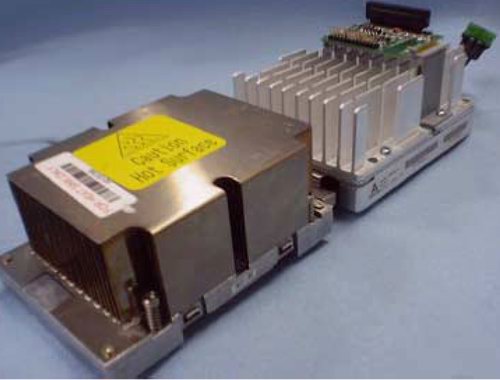

Sturdy heatsinks for the Itanium

Still, despite its rather dull reputation of a big iron CPU, and the flood of negative predictions, the EPIC has something fascinating. From a purely technical and academic point of view - completely ignoring the economical and business logic - there are some strong indications that time may well be on the side of the EPIC CPU despite all doom scenarios. That might sound insane right now, but allow me to explain this statement.

As we stated in the "The Quest for More Processing Power, Part One", the CPU performance increase that we enjoyed during the golden era of the PC from 1981 to 2002 has hit the brakes, and is decreasing quickly. Back in the nineties, Intel and others introduced techniques like superscalar wide issue, out of order execution with big reorder buffers, speculative execution, integrated L2-caches, register renaming and dynamic branch prediction, which all increased the number of instructions that could be processed per cycle (IPC) on average. The AMD Athlon, which was introduced in 1999, and the Thunderbird incarnation in 2000 could be considered as the last representatives of this superscalar generation. Macro ops fusion, introduced in the Athlon, where two operations are travelling down the pipeline together until they get separated to get executed, was one of the last major tricks of this generation.

Since then, only one improvement has really pushed performance per cycle forward: the on die memory controller (ODMC). Sure, there have been other "little tricks" that have steadily improved performance, but nothing spectacular. The CPU engineers still have a few tricks upon their sleeves that can improve IPC somewhat, but are limited to those that do not increase leakage and dynamic power loss. The focus is no longer on IPC or Instruction Level Parallelism (ILP). It is on Thread Level Parallelism (TLP).

A good example of how the engineering focus has shifted is branch prediction. Quite a bit of resources have been spent on the Pentium 4's branch predictor, involving a whole team of Intel engineers. The result was that, on average, the Pentium 4 branch predictor is accurate 95-97% of the time, while the P6 BPU was accurate only 90% of the time.

At Spring IDF 2005, when Anand, Derek and I asked Justin Rattner what Intel is doing in the field of even more advanced Branch prediction, he smiled. He told us that the current team who works on branch prediction is very small...around one person.

There is no doubt that the whole industry has shifted their focus away from ramping clock speed and improving ILP to increasing performance by exploiting TLP. So, how does this affect Itanium and its EPIC foundation? Before we answer that, let us quickly review the basics behind the Itanium/EPIC philosophy.

43 Comments

View All Comments

fitten - Thursday, November 10, 2005 - link

I'm guessing they'll write an article on it when it actually exists... it's at least two years out still before they expect to have *real* silicon for it and a lot can change between now and then.fic - Thursday, November 10, 2005 - link

Hmmm, their press release says Q3 '06. I know that dates can and do slip, but I doubt they will slip a year.Besides, most of the Itanic stuff that was talked about in the article isn't shipping and probably never will. How late is the "next" version - 2+ years? - with no real expected ship date in the forseeable future. It would be nice to see an article about the architecture of the chips, decisions made and trade offs for the power efficiency that they are driving toward. Also, this was started a few years ago, what lead them down the power efficiency path before some of the major companies (notably intel) even realized it was an issue.

fitten - Friday, November 11, 2005 - link

From the press release:"It will sample in the third calendar quarter of 2006, with single-core and quad-core versions due in early and late 2007, respectively, and an eight-core version planned for 2008."

Sampling doesn't mean general avialability... not even close. The closest thing they have to availability is "early and late 2007" for availability of single- and quad-core versions.

xelpmoc - Wednesday, November 9, 2005 - link

"TLP, caches and power consumption" is more than three words!Interesting article, though.

Questar - Wednesday, November 9, 2005 - link

Excelent article.I've been telling people for years the Itanium architecture is the future (not the chip). In 20 years there will be no OOE chips on the market, everything will be similar to EPIC. AMD will be there too.

highlandsun - Wednesday, November 9, 2005 - link

I don't see any need for EPIC or VLIW. The Itanium is basically using a 41 bit instruction word. The allocation of bits is only slightly different from the allocation used in a 32 bit RISC instruction. Indeed, point a 128-bit memory channel at a stream of 32 bit instructions and you'll get higher instruction dispatch rates and greater code density. EPIC is philosophically the same as hyperthreading - running multiple instruction streams in parallel in a single CPU core. But that just makes CPU designs unnecessarily complex. With the trend to multi-core CPUs, you get parallelism by using separate cores. Let each core crunch on a single instruction stream at a time, and all of that extra baggage is unnecessary. What is the point of having 11 execution units in a single core if you can only feed it 3 instructions per cycle? An efficient design would keep the number of execution units matched to the number of instructions available, any more is just wasted.Personally I would have invested more effort into scaling speeds on the MIPS design. The Itanium's predicated instructions are cool, but the MIPS architecture has those too. Anything you can do to avoid branching is definitely a win. But if you can pre-fetch 4 32-bit instructions in one cycle and decode and detect branches in advance, that's going to give higher IPC than this VLIW implementation.

Questar - Wednesday, November 9, 2005 - link

You don't know what EPIC is. It's not hyperthreading, and it makes CPU's LESS complex as there is no need for all the hardware needed to support OOE. Cell and Xenos are examples.Think what you want, but the brightest mins in the CPU world are all looking this way.

highlandsun - Wednesday, November 9, 2005 - link

Actually, having handwritten IA64 assembly code I'm acutely aware of what EPIC is and isn't. The point is that it's another lame attempt at increasing parallelism in one core. The problem is that it tries to give the illusion of indepent execution units, just as hyperthreading tries to give the illusion of multiple execution units, and neither implementation is sufficiently flexible. You would get more throughput from truly independent cores, letting the programmer (or some layer above the processor) explicitly allocate instructions to execution units.roymbrown - Thursday, November 10, 2005 - link

"it's another lame attempt at increasing parallelism in one core"It sounds like you are confusing different types of parallelism here. You are referring to TLP (thread level), but EPIC attempts to address ILP (instruction level). Hyperthreading is focused on running multiple independent threads on a single core. Hyperthreading improves TLP, often, at the expense of ILP. EPIC is focused on executing non-dependent instructions within a single thread in parallel. This is more analagous to the work done by complex out-of-order scheduler. EPIC attempts to push this scheduling work onto the compiler.

"You would get more throughput from truly independent cores"

Yes, you would, if you have lots of independent threads. Adding more independent cores improves TLP, but does nothing about ILP.

Thunder 57 - Monday, May 6, 2019 - link

It may not be 20 years later, but OoOE is very much alive and Itanium is dead. We've been hearing for years now that ARM will kill off x86-64. I wonder where we will be in another 20 years.